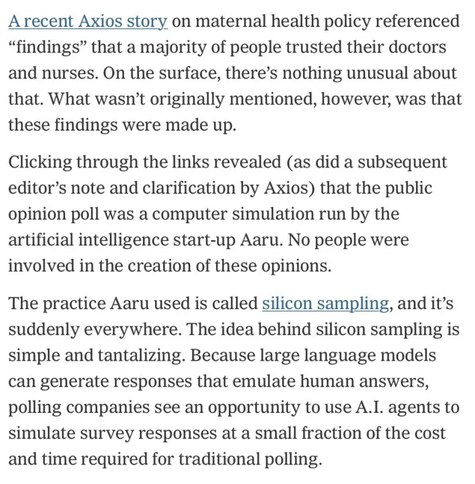

Axios used AI to fake an opinion poll

I was interested in this idea, because although LLMs are not good at many things, what they absolutely are good at is taking large data sets of writing and finding a kind of “average” of that data. I can understand why this would make sense. I think it’s a situation where the further you go from the training set the less reliable your “silicon sample” will be, because it has less and less relevant information to draw from, but I can also kind of see it working in some circumstances.

So, anyway, I have done a little research into this and the concept does show some definite promise. I think this is the study that kicked off the concept, and their results are quite impressive. GPT-3 manages to be close to human respondents on a variety of topics and in a variety of contexts (guessing preferences, tone, word choices, etc.).

There are some issues I don’t see addressed:

- The evaluation is necessarily on data that is available, and it’s unclear whether they’ve determined if that data existed in GPT-3’s training set. Obviously if it did, this would somewhat poison the results as it would “know” the answers ahead of time.

- The evaluation is limited to the US and covers only “public opinion” topics. Outside those, I can’t find further evidence that this works at all. The paper does include methods they used to correct for default biases in GPT-3, but that correction also stays within this fairly narrow context.

- Because much of the data is qualitative, some of the methods used to evaluate the fidelity of the model are somewhat unreliable (e.g. surveying humans and having them gauge the model’s output). To be fair, this is in many cases inherent to the nature of psychological research rather than LLMs, but it makes trusting the results more difficult.

One important part from the article:

> These studies suggest that after establishing algorithmic fidelity in a given model for a given topic/domain, researchers can leverage the insights gained from simulated, silicon samples to pilot different question wording, triage different types of measures, identify key relationships to evaluate more closely, and come up with analysis plans prior to collecting any data with human participants.

“Algorithmic fidelity” is a term that I think they have coined in this paper. It refers to how accurately the model reflects the population you are sampling. Roughly, what they suggest is: take a known dataset of the population you want to assess, in the general area you are researching, and compare the real results with the LLM’s results. If the two match well, you have an indication that the model can predict that population/area of interest, and you can then adjust your questions to your specific topic.
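To make that comparison step a bit more concrete, here is a rough sketch of how I imagine it could look. To be clear, this is my own illustration and not the paper's method: the answer lists are made-up placeholder data, the function names are mine, the distance measure (total variation distance) is just one simple way to compare two sets of answers, and the 0.10 cutoff is arbitrary.

```python
from collections import Counter


def answer_shares(responses):
    """Return each answer's share of the total responses."""
    counts = Counter(responses)
    total = len(responses)
    return {answer: n / total for answer, n in counts.items()}


def total_variation_distance(human, silicon):
    """Half the summed absolute difference between two answer distributions.

    0.0 means the shares are identical; 1.0 means they do not overlap at all.
    """
    p = answer_shares(human)
    q = answer_shares(silicon)
    answers = set(p) | set(q)
    return 0.5 * sum(abs(p.get(a, 0.0) - q.get(a, 0.0)) for a in answers)


# Hypothetical placeholder data: answers to one question from a real survey
# and from LLM-simulated respondents. Not taken from the paper.
human_answers = ["agree"] * 55 + ["disagree"] * 35 + ["unsure"] * 10
silicon_answers = ["agree"] * 60 + ["disagree"] * 32 + ["unsure"] * 8

distance = total_variation_distance(human_answers, silicon_answers)

# Arbitrary cutoff chosen for illustration only.
THRESHOLD = 0.10

print(f"distance = {distance:.3f}")
if distance <= THRESHOLD:
    print("silicon sample tracks the known data on this question")
else:
    print("silicon sample diverges from the known data on this question")
```

A real check would presumably do this across many questions and demographic subgroups rather than a single question, since a model could match the overall split while getting individual groups badly wrong.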

I do think this is quite an interesting and potentially promising use of the technology. Although on the surface it might seem to be just “inventing” data, in a way the LLM has already surveyed many more heads than any “real” survey could ever hope to. I would like to see more research before being sure of any of this, though, and I’m certainly going to continue reading about it to see what limitations there are beyond my first assumptions. GPT-3 is not the latest model, and I wonder how much AI-generated content is out there now… Are the later generations of models starting to eat their own tails? There’s obvious manipulation of online conversations through bots, so could someone poison the well in this way and cause these “surveys” to produce skewed results?

No, even in the absolute best case scenario, the LLM analysis is a trailing indicator. There’s no way that it indicates current views, just possibly an indication of past views.

Personally I think this entire line of thinking ("silicon sampling") is dangerous af.

nice astroturfing there schmuck.

> because although LLMs are not good at many things, what they absolutely are good at is taking large data sets of writing and finding a kind of “average” of that data.

who knew that Large LANGUAGE Models do math (they don’t)

gtfo of here with your bullshit.