Show HN: Hippo, biologically inspired memory for AI agents

Cool project. I like the neuroscience analogy with decay and consolidation.

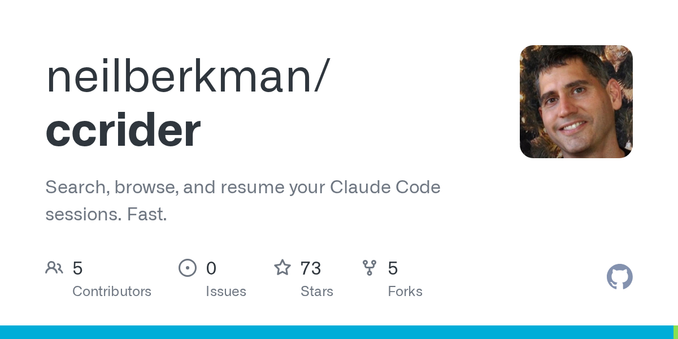

I've been working on a related problem from the other direction: Claude Code and Codex already persist full session transcripts, but there's no good way to search across them. So I built ccrider (https://github.com/neilberkman/ccrider). It indexes existing sessions into SQLite FTS5 and exposes an MCP server so agents can query their own conversation history without a separate memory layer. Basically treating it as a retrieval problem rather than a storage problem.
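To make the "retrieval rather than storage" idea concrete, here is a minimal sketch of indexing transcript messages into SQLite FTS5 and querying them. It is not ccrider's actual schema or code: the table name `messages`, its columns, and the helpers `build_index` and `search` are all made up for illustration, and it assumes your Python's bundled SQLite was compiled with FTS5.

```python
import sqlite3

# Hypothetical sample transcript rows: (session_id, role, body).
SAMPLE = [
    ("s1", "user", "How do I run the database migration for sqlite?"),
    ("s1", "assistant", "Run the migration script, then vacuum the database."),
    ("s2", "user", "Fix the flaky login test in CI."),
]


def build_index(messages):
    """Load messages into an in-memory FTS5 table (illustrative schema)."""
    conn = sqlite3.connect(":memory:")
    # session_id and role are stored but not tokenized; only body is searchable.
    conn.execute(
        "CREATE VIRTUAL TABLE messages "
        "USING fts5(session_id UNINDEXED, role UNINDEXED, body)"
    )
    conn.executemany(
        "INSERT INTO messages (session_id, role, body) VALUES (?, ?, ?)",
        messages,
    )
    conn.commit()
    return conn


def search(conn, query, limit=5):
    """Return (session_id, role, snippet) rows, best match first."""
    rows = conn.execute(
        "SELECT session_id, role, snippet(messages, 2, '[', ']', '...', 8) "
        "FROM messages WHERE messages MATCH ? ORDER BY rank LIMIT ?",
        (query, limit),
    )
    return rows.fetchall()


conn = build_index(SAMPLE)
for session_id, role, snip in search(conn, "migration"):
    print(session_id, role, snip)
```

An MCP server would then only need to wrap something like `search` as a tool, so the agent queries history on demand instead of carrying a separate memory store.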

> Don't tools like Claude already store context per project in the file system?

They do; the missing piece is a tool to access them. See my comment about a tool that addresses this: https://news.ycombinator.com/item?id=47668270

Cool to see others on this thread.

Here's a post I wrote about how we can potentially start to mimic these mechanisms:

https://n0tls.com/2026-03-14-musings.html

Would love to compare notes. I'm also looking at linguistic phenomena through an LLM lens:

https://n0tls.com/2026-03-19-more-musings.html

Hoping to wrap up some of the Kaggle eval work and move back to researching more neuropsych.

HippoRAG: Neurobiologically Inspired Long-Term Memory for Large Language Models

In order to thrive in hostile and ever-changing natural environments, mammalian brains evolved to store large amounts of knowledge about the world and continually integrate new information while avoiding catastrophic forgetting. Despite the impressive accomplishments, large language models (LLMs), even with retrieval-augmented generation (RAG), still struggle to efficiently and effectively integrate a large amount of new experiences after pre-training. In this work, we introduce HippoRAG, a novel retrieval framework inspired by the hippocampal indexing theory of human long-term memory to enable deeper and more efficient knowledge integration over new experiences. HippoRAG synergistically orchestrates LLMs, knowledge graphs, and the Personalized PageRank algorithm to mimic the different roles of neocortex and hippocampus in human memory. We compare HippoRAG with existing RAG methods on multi-hop question answering and show that our method outperforms the state-of-the-art methods remarkably, by up to 20%. Single-step retrieval with HippoRAG achieves comparable or better performance than iterative retrieval like IRCoT while being 10-30 times cheaper and 6-13 times faster, and integrating HippoRAG into IRCoT brings further substantial gains. Finally, we show that our method can tackle new types of scenarios that are out of reach of existing methods. Code and data are available at https://github.com/OSU-NLP-Group/HippoRAG.
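For anyone unfamiliar with Personalized PageRank, the algorithm the abstract mentions, here is a rough sketch of the general technique using plain power iteration. This is not HippoRAG's implementation: the toy graph, the node names, and the `personalized_pagerank` helper are assumptions for illustration only. The idea is that random-walk restarts always jump back to the seed nodes (for example, entities found in the query), so nodes close to the seeds score highest.

```python
def personalized_pagerank(graph, seeds, damping=0.85, iterations=50):
    """Power-iteration PPR over an adjacency dict {node: [neighbors]}.

    Every neighbor must also appear as a key in `graph`.
    """
    nodes = list(graph)
    # Restart distribution: uniform over the seed nodes, zero elsewhere.
    restart = {n: (1.0 / len(seeds) if n in seeds else 0.0) for n in nodes}
    scores = dict(restart)
    for _ in range(iterations):
        # With probability (1 - damping) the walk jumps back to a seed.
        nxt = {n: (1.0 - damping) * restart[n] for n in nodes}
        for n in nodes:
            neighbors = graph[n]
            if neighbors:
                share = damping * scores[n] / len(neighbors)
                for m in neighbors:
                    nxt[m] += share
            else:
                # Dangling node: send its mass back to the seeds.
                for m in nodes:
                    nxt[m] += damping * scores[n] * restart[m]
        scores = nxt
    return scores


# Hypothetical toy knowledge graph with two disconnected components.
GRAPH = {
    "professor": ["university", "disease"],
    "university": ["professor"],
    "disease": ["professor"],
    "paris": ["france"],
    "france": ["paris"],
}

scores = personalized_pagerank(GRAPH, seeds={"university", "disease"})
for node, score in sorted(scores.items(), key=lambda kv: -kv[1]):
    print(f"{node}: {score:.3f}")
```

In this toy graph, "professor" bridges both seed entities and so picks up a large share of the score, while the unrelated "paris"/"france" component stays at zero. That is the multi-hop flavor the abstract describes getting from a single retrieval step.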