1/2

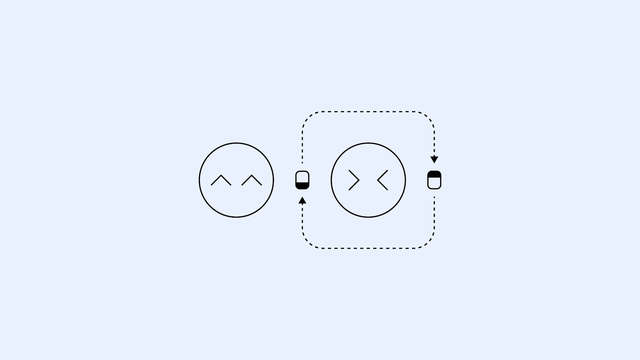

#OpenAI put out a paper last year that didn't get much attention. It's about "personas," the inference and output styles that chatbots often adopt when they respond to a user.

#Anthropic released a separate one on the same topic a few days ago. I'll link to that in the next post. Here's the OpenAI one: https://openai.com/index/emergent-misalignment/