It is possible to mangle stuff using any software, so I'm perhaps giving ABBYY a hard time here. It is a tool and it's up to the user to choose what options to use. When you're scanning literally thousands of documents, you may have considerations for disk space that I do not. If I want a 300MB light pen PDF, I've got the space.

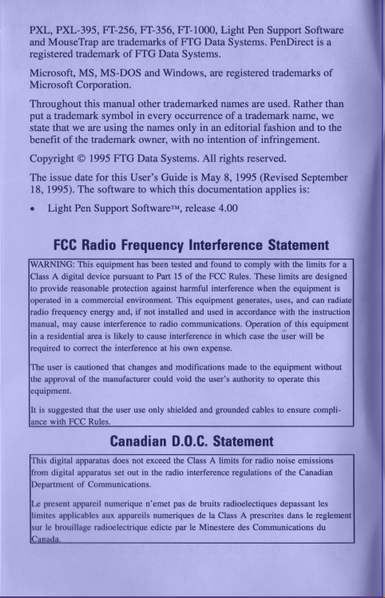

But ... just look at this. Here's a normal scan on the left, and whatever the heck is going on on the right.

I don't want to sound like I am not grateful for these scans - I know they took a lot of time and effort that someone didn't have to spend, that I paid nothing for them, and that they let me peer back into history and do all my research for stupid light pen articles.

But I'm still gonna be a little salty about it.