When You Run Out of Types...

@yogthos The inference engine I was working on for my thesis (and never completed), Wildwood, assigned objects to classes automatically based on their features; so that

1. one object could be in many otherwise unrelated classes; and

2. you could define a new class, and it would be automatically populated with every object already known to the system which met its criteria.
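To make those two points concrete, here is a minimal sketch in Python. It is not Wildwood's actual design, which isn't described here; the `Registry` class, its method names, and the example objects are all hypothetical. It assumes a class is simply a named predicate over an object's features, so membership is computed rather than declared.

```python
from typing import Callable, Dict, List

Features = Dict[str, object]
Predicate = Callable[[Features], bool]


class Registry:
    """Hypothetical store that derives class membership from features."""

    def __init__(self) -> None:
        self.objects: Dict[str, Features] = {}
        self.classes: Dict[str, Predicate] = {}

    def add_object(self, name: str, features: Features) -> None:
        self.objects[name] = features

    def define_class(self, name: str, criteria: Predicate) -> None:
        # Nothing is copied or tagged here: membership is evaluated on demand.
        self.classes[name] = criteria

    def members(self, class_name: str) -> List[str]:
        criteria = self.classes[class_name]
        return sorted(n for n, f in self.objects.items() if criteria(f))

    def classes_of(self, object_name: str) -> List[str]:
        features = self.objects[object_name]
        return sorted(c for c, p in self.classes.items() if p(features))


registry = Registry()
registry.add_object("swan", {"flies": True, "swims": True, "legs": 2})
registry.add_object("trout", {"flies": False, "swims": True, "legs": 0})
registry.define_class("flier", lambda f: f.get("flies") is True)
registry.define_class("swimmer", lambda f: f.get("swims") is True)

# A class defined after the objects exist still picks up every object
# already known to the registry that meets its criteria.
registry.define_class("biped", lambda f: f.get("legs") == 2)

print(registry.classes_of("swan"))
print(registry.members("biped"))
```

Because membership is evaluated lazily, the swan sits in several otherwise unrelated classes at once, and the late-defined `biped` class needs no separate population step. A real inference engine would presumably cache or index these results rather than rescanning every object.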

I wrote about this idea in my first essay of the #PostScarcitySoftware project.

https://www.journeyman.cc/blog/posts-output/2006-02-20-postscarcity-software/#social-mobility

Post-scarcity Software

For years we've said that our computers were Turing equivalent, equivalent to Turing's machine U. That they could compute any function which could be computed. They aren't, of course, and they can't, for one very important reason. U had infinite store, and our machines don't. We have always been store-poor. We've been mill-poor, too: our processors have been slow, running at hundreds, then a few thousands, of cycles per second. We haven't been able to afford the cycles to do any sophisticated munging of our data. What we stored — in the most store intensive format we had — was what we got, and what we delivered to our users. It was a compromise, but a compromise forced on us by the inadequacy of our machines.

The thing is, we've been programming for sixty years now. When I was learning my trade, I worked with a few people who'd worked on Baby — the Manchester Mark One — and even with two people who remembered Turing personally. They were old then, approaching retirement; great software people with great skills to pass on, the last of the first generation programmers. I'm a second generation programmer, and I'm fifty. Most people in software would reckon me too old now to cut code. The people cutting code in the front line now know the name Turing, of course, because they learned about U in their first year classes; but Turing as a person — as someone with a personality, quirks, foibles — is no more real to them than Christopher Columbus or Noah, and, indeed, much less real than Aragorn of the Dunedain.

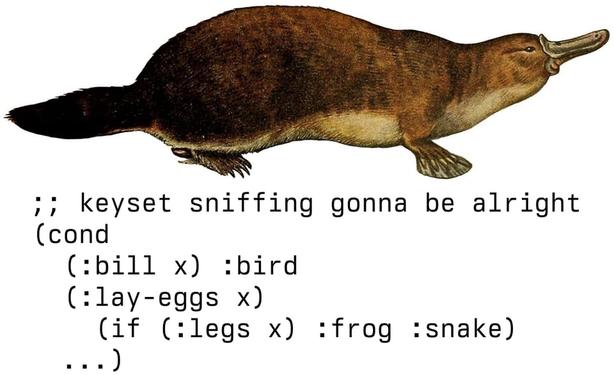

Context-dependent types are a really good concept in my opinion. Having a small set of common basic types shared across the language is very convenient, but enriching those types with metadata within a specific domain can provide a lot of value. As a piece of data evolves and acquires new properties over its lifecycle, there needs to be a way to dynamically move it into whatever structural category fits its current shape. This becomes particularly important for authentication or validation rules, where we might want to enrich the data with entirely new attributes.
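As a rough illustration of data moving between categories as it gains attributes, here is a small Python sketch. The category names and required keys are made up for the example, and it assumes the simplest possible rule: a record belongs to a category when it carries every key that category requires.

```python
from typing import Dict, FrozenSet, Set

# Hypothetical structural categories, each defined by the keys it requires.
CATEGORIES: Dict[str, FrozenSet[str]] = {
    "visitor": frozenset({"session_id"}),
    "registered": frozenset({"session_id", "email"}),
    "authenticated": frozenset({"session_id", "email", "token"}),
}


def categories_of(record: Dict[str, object]) -> Set[str]:
    """Return every category whose required keys are all present in the record."""
    return {
        name
        for name, required in CATEGORIES.items()
        if required.issubset(record)
    }


record = {"session_id": "abc123"}
before = categories_of(record)

# Enrich the same basic map with new attributes; nothing is re-declared,
# the categories are simply recomputed from the current shape.
enriched = {**record, "email": "user@example.org", "token": "t0k3n"}
after = categories_of(enriched)

print(before)
print(after)
```

The record stays a plain dictionary throughout; only the answer to "what is this right now?" changes. A validation layer could use the same check to decide which rules apply at each stage.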

And I also agree that a lot of the decisions about how languages work stem from constraints that were in place in the early days of computing but are no longer there today. I really like the framing of post-scarcity computing.