Event-Driven Architecture Truths (And When NOT to Use It) #SystemDesign #EDA

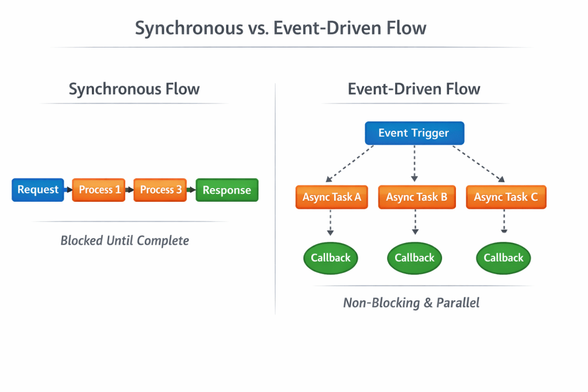

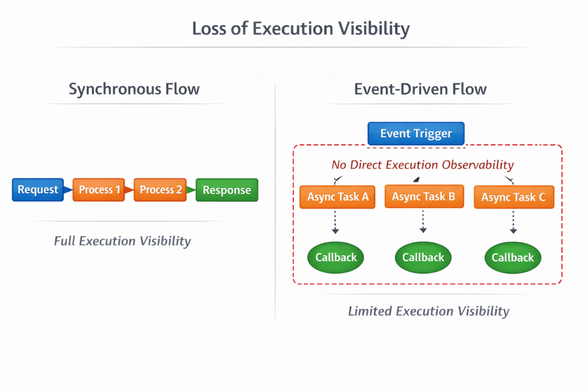

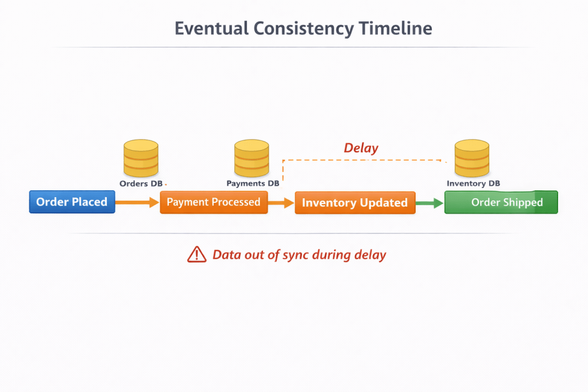

Event-Driven Architecture (EDA) is often promoted as the ultimate solution for scalability, decoupling, and modern system design. What many engineers don't realize is that EDA introduces a new class of complexity, hidden in asynchronous flows, eventual consistency, and operational overhead.

In this deep technical breakdown, we go beyond the hype and examine the real trade-offs of event-driven systems. You'll learn why debugging becomes much harder once a single business flow spans several asynchronous consumers, how schema evolution can silently break consumers, and why teams underestimate the cognitive and operational load of distributed architectures. We explore real-world challenges such as duplicate events, out-of-order processing, and failure handling with retries, dead-letter queues, and idempotency strategies. Most importantly, we explain when NOT to use event-driven architecture, a question rarely discussed but critical to making the right architectural decisions. The guide also introduces a practical hybrid approach that balances synchronous reliability with asynchronous scalability, along with advanced patterns such as orchestration vs. choreography.

If you're a software engineer, architect, or engineering leader working with microservices, Kafka, or distributed systems, this is a must-read. Stop blindly adopting trends. Start designing systems your team can actually understand, debug, and evolve.

#EventDrivenArchitecture #SystemDesign #Microservices #DistributedSystems #SoftwareArchitecture #Kafka #Scalability #BackendEngineering #TechLeadership #CloudArchitecture

@atozofsoftwareengineering.blog

Interesting read, though I have a different take on the 'accuracy' and complexity trade-offs. To me, the core purpose of EDA isn't to sacrifice precision for scalability, but to guarantee exact data by deriving every state from the events themselves.

Look at the banking sector: they run on EDA because a static balance in a database is just a 'snapshot' that can be corrupted. The only absolute truth is the stream of immutable events.
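To make the "stream is the truth" idea concrete, here is a minimal Python sketch in which the balance is never treated as authoritative state but is derived by folding over immutable events. The `AccountEvent` type, its `deposited`/`withdrawn` kinds, and amounts in cents are illustrative assumptions, not any bank's actual model.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class AccountEvent:
    """An immutable fact about an account (hypothetical event shape)."""
    event_id: str
    kind: str          # "deposited" or "withdrawn"
    amount_cents: int


def balance_from_events(events: Iterable[AccountEvent]) -> int:
    """Derive the balance by folding over the event stream.

    Any stored balance is only a cached snapshot of this result.
    """
    balance = 0
    for event in events:
        if event.kind == "deposited":
            balance += event.amount_cents
        elif event.kind == "withdrawn":
            balance -= event.amount_cents
        else:
            raise ValueError(f"unknown event kind: {event.kind}")
    return balance


events = [
    AccountEvent("e1", "deposited", 10_000),
    AccountEvent("e2", "withdrawn", 2_500),
    AccountEvent("e3", "deposited", 1_000),
]

cached_balance = 9_999                  # a corrupted snapshot
rebuilt = balance_from_events(events)   # recomputed from the facts
print(rebuilt)  # 8500
```

If a cached balance drifts or gets corrupted, recomputing it from the same events restores the correct value; that is the property the comment relies on.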

This is where technical rigor becomes the real game-changer:

First, a unique ID for every event: This is non-negotiable. It’s the DNA of your system. Without a unique Correlation ID and an Idempotency Key, you can’t track a flow or prevent double-processing. It’s what transforms a chaotic stream of messages into a reliable audit trail.
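A minimal sketch of how the two identifiers divide the work, assuming a hypothetical `Event` shape: the idempotency key lets a consumer drop redelivered duplicates, while the correlation ID ties every event of one business flow together for tracing. `IdempotentConsumer` is an illustrative name, and its in-memory `set` stands in for what would normally be a durable store.

```python
import uuid
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Event:
    idempotency_key: str   # unique per logical operation; reused on redelivery
    correlation_id: str    # shared by every event in one business flow
    payload: dict


@dataclass
class IdempotentConsumer:
    processed_keys: set = field(default_factory=set)  # stand-in for a durable store
    applied: list = field(default_factory=list)

    def handle(self, event: Event) -> bool:
        """Apply the event once; return False if it was a duplicate."""
        if event.idempotency_key in self.processed_keys:
            return False
        # In a real system, the side effect and the key insert should be
        # committed atomically (e.g. in one database transaction).
        self.applied.append((event.correlation_id, event.payload))
        self.processed_keys.add(event.idempotency_key)
        return True


flow_id = str(uuid.uuid4())
charge = Event(idempotency_key="charge-42", correlation_id=flow_id,
               payload={"amount_cents": 500})
consumer = IdempotentConsumer()
print(consumer.handle(charge))  # True
print(consumer.handle(charge))  # False: the redelivered duplicate is ignored
print(len(consumer.applied))    # 1
```

The design choice that matters is atomicity: if the side effect commits but the key is not recorded (or vice versa), a crash between the two steps reintroduces double processing.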

Second, a Schema Registry: the information is already there! A registry tells you exactly which formats are in use for each event type before any deployment, and, paired with a record of which services subscribe to which topics, who consumes what. By enforcing contracts, you can test compatibility for a V2 in your CI/CD pipeline. If it's going to break, you know before it hits production.
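Registries such as Confluent Schema Registry enforce compatibility modes (BACKWARD, FORWARD, FULL) themselves; the sketch below only illustrates the kind of rule a CI step checks for a V2. The schema format, the `read_errors`/`check_v2` helpers, and the payment fields are hypothetical, and the rules are a simplified take on Avro-style schema resolution (no unions or type promotion).

```python
from typing import Dict, List

# Hypothetical, simplified schema format: field name -> {"type": ..., optional "default": ...}
Schema = Dict[str, dict]


def read_errors(reader: Schema, writer: Schema) -> List[str]:
    """List problems a reader using `reader` would hit on data written with `writer`.

    Extra writer fields are ignored; reader fields missing from the writer need
    a default; shared fields must keep the same type.
    """
    errors = []
    for name, spec in reader.items():
        if name not in writer:
            if "default" not in spec:
                errors.append(f"field '{name}' missing from writer and has no default")
        elif writer[name]["type"] != spec["type"]:
            errors.append(f"field '{name}' changed type {writer[name]['type']} -> {spec['type']}")
    return errors


def check_v2(old: Schema, new: Schema) -> List[str]:
    """FULL compatibility: new readers read old data AND old readers read new data."""
    return read_errors(new, old) + read_errors(old, new)


payment_v1 = {"id": {"type": "string"}, "amount_cents": {"type": "long"}}
payment_v2_ok = {**payment_v1, "currency": {"type": "string", "default": "EUR"}}
payment_v2_bad = {"id": {"type": "string"}, "amount": {"type": "double"}}

print(check_v2(payment_v1, payment_v2_ok))   # []
print(check_v2(payment_v1, payment_v2_bad))  # two errors: 'amount' and 'amount_cents'
```

In a pipeline, a non-empty result from `check_v2` would fail the build before the V2 producer is deployed.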

Third, DLQs (Dead Letter Queues): This isn't just 'operational overhead'; it’s your insurance policy. If a service fails, the event isn't lost in the void. It’s parked, analyzed, and kept ready for remediation. It ensures that no 'fact' is ever dropped, maintaining the integrity of the whole chain.
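A minimal sketch of the retry-then-park behavior, assuming a synchronous handler and an in-memory list standing in for the DLQ topic. `RetryingConsumer`, `DeadLetter`, and `handle_payment` are hypothetical names; a production consumer would also back off between attempts and separate retryable errors from poison messages.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class DeadLetter:
    event: dict
    error: str
    attempts: int


@dataclass
class RetryingConsumer:
    handler: Callable[[dict], None]
    max_attempts: int = 3
    dead_letters: List[DeadLetter] = field(default_factory=list)  # stand-in for a DLQ topic

    def consume(self, event: dict) -> bool:
        """Try the handler up to max_attempts times; park the event on final failure."""
        last_error = ""
        for _ in range(self.max_attempts):
            try:
                self.handler(event)
                return True
            except Exception as exc:  # a real system would classify the error first
                last_error = repr(exc)
                # a real consumer would wait here (e.g. exponential backoff)
        self.dead_letters.append(DeadLetter(event, last_error, self.max_attempts))
        return False


def handle_payment(event: dict) -> None:
    if event.get("amount_cents", 0) < 0:
        raise ValueError("negative amount")


consumer = RetryingConsumer(handle_payment)
print(consumer.consume({"id": "e1", "amount_cents": 500}))  # True
print(consumer.consume({"id": "e2", "amount_cents": -1}))   # False
print(consumer.dead_letters[0].event["id"])                 # e2
```

The parked entry keeps the original event, the last error, and the attempt count, which is what makes later analysis and remediation (for example, re-publishing after a fix) possible.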

Fourth, Replayability: This is the ultimate safety net. It allows you to recalculate the real data at any point in time. If a bug is discovered, you don't just patch the state; you re-run the events through the fixed algorithm to restore the exact truth.
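Replay can be sketched as re-running the same stored events through a corrected function, optionally up to a cutoff time. Here a hypothetical fee calculation had a bug (10% charged instead of an intended 1%); `Transfer`, `fee_buggy`, `fee_fixed`, and `replay` are illustrative assumptions.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Callable, Iterable


@dataclass(frozen=True)
class Transfer:
    at: datetime
    amount_cents: int


def fee_buggy(amount_cents: int) -> int:
    return amount_cents // 10    # bug: charges 10%


def fee_fixed(amount_cents: int) -> int:
    return amount_cents // 100   # intended 1% fee, rounded down


def replay(events: Iterable[Transfer], fee_fn: Callable[[int], int], as_of: datetime) -> int:
    """Recompute total fees from the event log, using only events up to `as_of`."""
    return sum(fee_fn(e.amount_cents) for e in events if e.at <= as_of)


log = [
    Transfer(datetime(2024, 1, 1), 10_000),
    Transfer(datetime(2024, 2, 1), 5_000),
    Transfer(datetime(2024, 3, 1), 20_000),
]
cutoff = datetime(2024, 2, 15)

charged = replay(log, fee_buggy, cutoff)   # 1500
correct = replay(log, fee_fixed, cutoff)   # 150
print(charged - correct)                   # 1350 cents to refund
```

The difference between the two replays as of the cutoff is exactly the amount to correct, derived from the facts rather than patched by hand.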

The final touch:

I fully agree that you must carefully weigh the pros and cons of an event-driven ecosystem. The cost of this complexity and the rigor it requires should never jeopardize the service or the overall stability of the architecture. But when properly engineered, EDA isn't a source of 'vagueness'; it's the most robust way to achieve mathematical traceability.

(Note: I used AI to help with the translation into English)

Where I’d push back is this: what you’re describing is **Event-Driven Architecture done *perfectly***. Most teams never get there.

Banking systems *do* treat events as the source of truth—but they also invest heavily in disciplines like **event sourcing**, strict ordering guarantees, and years of operational maturity. That’s not “EDA by default”—that’s **EDA with extreme rigor**.
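Strict ordering is typically scoped per entity (per account, per order) rather than globally. A minimal sketch, assuming producers stamp each event with a per-aggregate sequence number starting at 1: early arrivals are buffered until the gap closes, and sequence numbers already applied are treated as duplicates. `InOrderApplier` and its fields are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple


@dataclass
class InOrderApplier:
    """Apply events per aggregate strictly in sequence order, buffering early arrivals."""
    next_seq: Dict[str, int] = field(default_factory=dict)
    pending: Dict[str, Dict[int, str]] = field(default_factory=dict)
    applied: List[Tuple[str, int, str]] = field(default_factory=list)

    def receive(self, aggregate_id: str, seq: int, payload: str) -> None:
        expected = self.next_seq.get(aggregate_id, 1)
        if seq < expected:
            return  # already applied: duplicate delivery
        buffer = self.pending.setdefault(aggregate_id, {})
        buffer[seq] = payload
        # Drain every consecutive event now available, starting at `expected`.
        while expected in buffer:
            self.applied.append((aggregate_id, expected, buffer.pop(expected)))
            expected += 1
        self.next_seq[aggregate_id] = expected


applier = InOrderApplier()
for seq, payload in [(2, "debit 30"), (1, "open"), (3, "credit 10")]:
    applier.receive("acct-1", seq, payload)
print([s for _, s, _ in applier.applied])  # [1, 2, 3]
```

A real implementation would also need a timeout or alert for gaps that never close, since a lost event would otherwise stall that aggregate indefinitely.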

Your points are spot on:

* Unique IDs + idempotency → non-negotiable

* Schema registry → critical for evolution

* DLQs → essential safety net

* Replayability → huge advantage

But here’s the uncomfortable reality:

👉 These aren’t “features” of EDA

👉 They are **costs you must pay to make EDA safe**

And most systems:

* Skip proper idempotency → get duplicates

* Treat schemas loosely → break consumers

* Ignore DLQs → silently lose events

* Never build replay pipelines → lose recoverability

So instead of “mathematical traceability,” they get **distributed ambiguity**.

The deeper point is this:

**EDA doesn’t automatically give you correctness; it gives you the *ability* to build correctness, at a high cost.**

That’s why I argue it shouldn’t be the default choice.

Use it where:

* Auditability is critical (finance, ledgers)

* Temporal history matters

* Scale demands async decoupling

Avoid it where:

* Strong consistency is required *immediately*

* The team can’t support the operational rigor

* Simpler models solve the problem

You’re describing the *ceiling* of EDA.

Most teams are operating far below the *floor* needed to make it safe.

And that gap—that’s where things break.

We actually agree: you only go for EDA when there is no other way to handle the scale or the risk.

Uber, Stripe, and Amazon didn't choose EDA because it’s a 'feature', but because their business model makes it a necessity (Bezos's first rule :P). For them, the gain (resilience to failures, no lost transactions) far outweighs the operational cost. Netflix is the same: the telemetry they get for their product strategy is worth the investment. It’s always Critical Risk vs. Business Gain. If you have the budget for the 'ceiling', it’s the best tool; otherwise, it’s just over-engineering.

About "The New Engineer" in the article: the misunderstanding usually comes from managers who measure productivity in lines of code (Taylorism). A real engineer wants to code right, not just code.

Sometimes writing 3 lines requires 6 hours of analysis to make sure the ecosystem and its dependencies stay stable. In an EDA world, this is mandatory. You aren't just a coder; you are a guardian of the system. Good engineers have always wanted to work this way.

One last thing: I’m not a big fan of AI-only responses. I’d rather have a real conversation between engineers than a formatted bot reply.

(Note: I used AI to help with the translation into English)