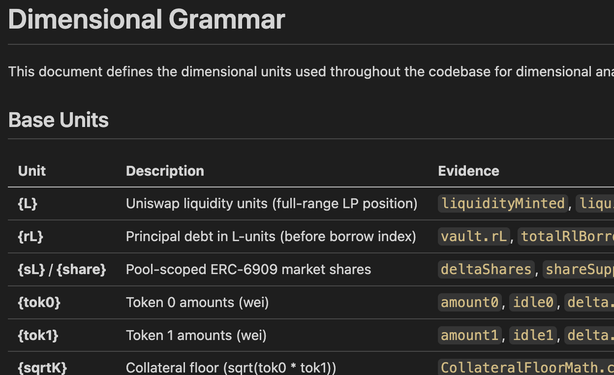

93% recall vs 50% for baseline prompts. Our new dimensional-analysis plugin for Claude Code doesn’t ask the LLM to find bugs. It annotates your codebase with dimensional types, then flags mismatches mechanically. Tested against real audit findings. https://blog.trailofbits.com/2026/03/25/try-our-new-dimensional-analysis-claude-plugin/

@trailofbits you're adding annotations which claim to perform deterministic measurements stochastically, and you particularly describe price as a use case. It sounds great for money laundering but highly questionable for "vulnerabilities".