@pervognsen @nick reference on early POWER multi-loads https://bitsavers.org/pdf/ibm/IBM_Journal_of_Research_and_Development/341/ibmrd3401E.pdf pp. 7-10 starting with "The RS/6000 architecture has adopted the following strategy for dealing with misaligned data."

Load-multiple section starts on p. 9 with "Another aspect of including string operations..."

@pervognsen @nick I will say that they are IMO bang on the money here on _all_ counts - calling out that

a) mem copies/string copies etc. are important and usually unaligned

b) Alpha-esque "we give you a way to do SWAR loops for this" only gets you so far,

c) for load/store multiple, that function prologues/epilogues are the key use case

other ISAs have struggled to learn that lesson 30 years later...
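For b), a rough picture of the Alpha-style approach: with only aligned loads available, an unaligned 8-byte load becomes two aligned loads plus shifts and an OR (roughly what LDQ_U with EXTQL/EXTQH do on Alpha). A minimal C sketch, assuming a little-endian host and a buffer that is readable up to the end of the aligned word after the access. `load_unaligned_le64` is a hypothetical name:

```c
#include <stddef.h>
#include <stdint.h>
#include <string.h>

/* Aligned 8-byte load of word number `word_index` of `buf`.
 * memcpy keeps this free of strict-aliasing issues. */
static uint64_t load_aligned64(const unsigned char *buf, size_t word_index) {
    uint64_t w;
    memcpy(&w, buf + word_index * 8, sizeof w);
    return w;
}

/* Unaligned 8-byte little-endian load at byte offset `off`, built only
 * from aligned loads, shifts and an OR. Assumes a little-endian host. */
uint64_t load_unaligned_le64(const unsigned char *buf, size_t off) {
    size_t idx = off / 8;
    unsigned shift = (unsigned)(off % 8) * 8;
    uint64_t lo = load_aligned64(buf, idx);
    if (shift == 0)
        return lo; /* aligned: one load, and avoids an undefined shift by 64 */
    uint64_t hi = load_aligned64(buf, idx + 1);
    return (lo >> shift) | (hi << (64 - shift));
}
```

The aligned case is special-cased because shifting a 64-bit value by 64 is undefined in C. Every byte-wise step like this is the "SWAR loop" overhead that real unaligned/multiple loads avoid.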

> The architecture allows for the partial completion of an operation and the generation of an alignment-check interrupt when the data crosses a cache-line boundary. System software can then complete the instruction by fixing up the affected registers or memory locations.
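Roughly, the split the quote describes: the part of the access before the line boundary completes, the interrupt fires, and software does the rest. A toy C model; the 64-byte line size and all function names are assumptions for illustration, and both the "hardware" and "handler" halves are just plain loops:

```c
#include <stddef.h>
#include <stdint.h>

#define LINE_SIZE 64u /* assumed cache-line size, for illustration only */

/* Does an access of `size` bytes at address `addr` straddle a line boundary? */
int crosses_line(uintptr_t addr, size_t size) {
    return (addr % LINE_SIZE) + size > LINE_SIZE;
}

/* Number of bytes of the access that lie before the next line boundary. */
size_t first_part_len(uintptr_t addr, size_t size) {
    size_t room = LINE_SIZE - (addr % LINE_SIZE);
    return size < room ? size : room;
}

/* Copy `size` bytes as if they lived at (notional) address `addr`:
 * the "hardware" part stops at the line boundary, the "handler" part
 * finishes the rest. Returns how many bytes the hardware part completed. */
size_t copy_with_fixup(unsigned char *dst, const unsigned char *src,
                       uintptr_t addr, size_t size) {
    size_t done = first_part_len(addr, size);
    for (size_t i = 0; i < done; i++) /* partial completion */
        dst[i] = src[i];
    if (crosses_line(addr, size)) {
        /* alignment-check interrupt would be taken here; the handler
         * fixes up the remaining bytes */
        for (size_t i = done; i < size; i++)
            dst[i] = src[i];
    }
    return done;
}
```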

this has EINTR vibes

hmm are there any Unix syscalls that can partially happen and then return EINTR? I guess not... read() and write() can partially complete but then they return a length, and you don't get to know if it was short because of a signal...
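For the read()/write() case, the usual userland answer is a retry loop that handles both outcomes: -1 with EINTR (interrupted before anything was transferred) and a short count (interrupted, or otherwise cut short, after some bytes went through). A minimal sketch; `write_all` is a hypothetical helper, not a libc function:

```c
#include <errno.h>
#include <stddef.h>
#include <unistd.h>

/* Write all `len` bytes of `buf` to `fd`, retrying on EINTR and on
 * short writes. Returns 0 on success, -1 on error (errno from write()). */
int write_all(int fd, const void *buf, size_t len) {
    const char *p = buf;
    while (len > 0) {
        ssize_t n = write(fd, p, len);
        if (n < 0) {
            if (errno == EINTR)
                continue; /* nothing was written: just retry */
            return -1;
        }
        /* n may be less than len; we can't tell whether a signal caused it */
        p += n;
        len -= (size_t)n;
    }
    return 0;
}
```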

so it looks like IBM's string instructions requiring "fixing up registers or memory" is even worse

but my point was more about "it's an edge case we don't want to handle, let's create a new edge case one layer up and let those folks handle it"

@rygorous @pervognsen @nick

Hmm so upon an exception, a MIPS CPU only:

- disables interrupts

- saves PC in EPC

- fills the Cause register

- jumps to a hard-wired address

?

So it doesn't save any of the GPRs for you, and unlike in ARM, there is no separate copy of a subset of registers for each type of exception?

1/
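A C model of that list, to make the "what's missing" part visible: a simplified MIPS32-style exception entry touches only Status, Cause, EPC and the PC, never the GPRs. The struct and function names are hypothetical; the bit positions (Status.EXL = bit 1, Cause.ExcCode = bits 6..2, Cause.BD = bit 31) and the 0x80000180 general vector (with Status.BEV = 0) follow MIPS32, while BadVAddr, the separate TLB-refill vector and R3000-era details are left out:

```c
#include <stdint.h>

/* Simplified MIPS32-style state: only what exception entry touches. */
struct cpu {
    uint32_t gpr[32];
    uint32_t pc;
    uint32_t status; /* CP0 Status; bit 1 is EXL */
    uint32_t cause;  /* CP0 Cause; bits 6..2 ExcCode, bit 31 BD */
    uint32_t epc;    /* CP0 EPC */
};

#define STATUS_EXL     (1u << 1)
#define CAUSE_BD       (1u << 31)
#define CAUSE_EXC_MASK (0x1Fu << 2)
#define GENERAL_VECTOR 0x80000180u /* general exception vector, BEV = 0 */

/* Hardware side of a (non-TLB-refill) exception. Note what is absent:
 * no GPR is saved, and there is no banked register set. */
void take_exception(struct cpu *c, unsigned exc_code, int in_delay_slot) {
    if (!(c->status & STATUS_EXL)) {
        /* EPC/BD only updated when not already handling an exception;
         * in a delay slot, EPC points back at the branch */
        c->epc = in_delay_slot ? c->pc - 4 : c->pc;
        c->cause = in_delay_slot ? (c->cause | CAUSE_BD) : (c->cause & ~CAUSE_BD);
    }
    c->cause = (c->cause & ~CAUSE_EXC_MASK) | ((exc_code << 2) & CAUSE_EXC_MASK);
    c->status |= STATUS_EXL; /* kernel mode, interrupts masked */
    c->pc = GENERAL_VECTOR;
}
```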

So to save a register, you need an address to save it to. I'm guessing on MIPS you don't get to put a literal address in the store instruction.

So you need to put the address in a register.

Some other CPUs may save the stack pointer for you, and replace it with one defined in the exception vector. But not MIPS.

So you will have to clobber one of the user's registers to build an address to save the registers to.

Hence k0.

2/

@wolf480pl @pervognsen @nick sort of. the idea is k0/k1 are permanently roped off for the exception handler's use, _especially_ the TLB miss (soft fault) handler, and ideally you don't save any regs in there at all, you just try to make do with those 2 regs.

If you take exceptions on TLB miss, you want there to be as little state-saving around it as humanly possible.
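To see how far 2 regs can go: a toy C model of a classic R4000-style TLB refill handler, one line per instruction. The state struct, the array-index stand-in for the Context register, and the toy TLB are simplifications invented for this sketch (the real handler loads the PTE pair through a virtual address, and tlbwr also writes EntryHi). The point is that only k0 ($26) and k1 ($27) are ever written:

```c
#include <stdint.h>

enum { REG_K0 = 26, REG_K1 = 27, TLB_SLOTS = 16 };

/* Toy state: only what the refill handler touches. */
struct refill_cpu {
    uint32_t gpr[32];
    uint32_t context;            /* stand-in for CP0 Context: index of the PTE pair */
    uint32_t entrylo0, entrylo1; /* CP0 EntryLo0/EntryLo1 */
    uint32_t random;             /* CP0 Random: slot written by tlbwr */
    uint32_t tlb[TLB_SLOTS][2];  /* toy TLB: EntryLo pair per slot */
    uint32_t epc, pc;
};

void tlb_refill(struct refill_cpu *c, const uint32_t *page_table) {
    c->gpr[REG_K1] = c->context;                     /* mfc0 k1, Context  */
    c->gpr[REG_K0] = page_table[c->gpr[REG_K1]];     /* lw   k0, 0(k1)    */
    c->gpr[REG_K1] = page_table[c->gpr[REG_K1] + 1]; /* lw   k1, 4(k1)    */
    c->entrylo0 = c->gpr[REG_K0];                    /* mtc0 k0, EntryLo0 */
    c->entrylo1 = c->gpr[REG_K1];                    /* mtc0 k1, EntryLo1 */
    c->tlb[c->random % TLB_SLOTS][0] = c->entrylo0;  /* tlbwr             */
    c->tlb[c->random % TLB_SLOTS][1] = c->entrylo1;
    c->pc = c->epc;                                  /* eret              */
}
```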

@rygorous @pervognsen @nick

wow...

That makes sense.

Kinda reminds me of how, on x86_64-unknown-linux-gnu, the PLT thunk that calls into the dynamic linker when the GOT entry isn't filled in yet can only clobber RAX

@wolf480pl @rygorous @pervognsen @nick

well, not exactly!

it is very definitely not allowed to clobber RAX, because AL carries the count of SSE registers with floating-point arguments when calling a variadic function!

hence, Glibc moves RAX to R11 after returning from the full resolver to the asm stub, restores RAX, and uses R11 to make the final jump into the resolved function

@wolf480pl @rygorous @pervognsen @nick and, going off on a tangent, one bug I consider quite famous: there's a range of Glibc versions where, if you call a function that receives 512-bit vector arguments via the PLT, the upper 256-bit halves of those arguments are zeroed out on the first call

(because of course the old dynamic linker has no idea what AVX-512 even is; it just saves/restores the 256-bit YMM registers, and 256-bit loads don't merge into the 512-bit register, they zero out the high part)

(didn't happen with the even older dynamic linker that had no idea what AVX even is, because 128-bit loads _are_ merging into the 256-bit register)
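A toy C model of the two load behaviors, treating one vector register as 64 bytes: a VEX-encoded 256-bit load zeroes bytes 32..63 of the ZMM register, while a legacy-SSE 128-bit load leaves bytes 16..63 alone. `stub_roundtrip` is a hypothetical stand-in for the resolver stub's save/restore, and it assumes the resolver running in between never writes the upper bytes:

```c
#include <stddef.h>
#include <stdint.h>
#include <string.h>

/* One vector register at its full 512-bit (ZMM) width. */
typedef struct { uint8_t b[64]; } vreg;

/* VEX-style load of w bytes: writes the low w bytes, zeroes the rest. */
void load_zeroing(vreg *r, const uint8_t *src, size_t w) {
    memcpy(r->b, src, w);
    memset(r->b + w, 0, sizeof r->b - w);
}

/* Legacy-SSE-style load of w bytes: upper bytes keep their old value. */
void load_merging(vreg *r, const uint8_t *src, size_t w) {
    memcpy(r->b, src, w);
}

/* Stub that only knows about the low `saved` bytes of an argument register. */
void stub_roundtrip(vreg *arg, size_t saved, int zeroing) {
    uint8_t spill[64];
    memcpy(spill, arg->b, saved); /* save what the stub knows about */
    /* ... resolver runs here (assumed not to touch the upper bytes) ... */
    if (zeroing)
        load_zeroing(arg, spill, saved);
    else
        load_merging(arg, spill, saved);
}
```

With a 32-byte save and a zeroing restore, the top half of a 512-bit argument is gone even though nothing else touched it; with a 16-byte save and a merging restore, the untouched upper bytes survive.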

@rygorous @wolf480pl @pervognsen @nick

thanks!

I should probably mention that history is not going to repeat itself if ZMM width is doubled, because the dynamic linker now uses the forward-compatible xsave instruction, which dumps the extended state on its own given a long-enough buffer

So if it breaks again, it will be in a new and exciting manner

@amonakov @wolf480pl @pervognsen @nick I think we're good on vector width for the next 1.5 decades at least; they pushed into 512b way earlier than it really made sense to. (Granted, that's a large part of why they then proceeded to not actually ship AVX-512 on most SKUs for the next 10 years.)

The APX-induced GPR-breakage-maybe will hit this year, and I think our next big sorta-ABI-breaking thing is probably going to have to be cache line size.

@pervognsen @amonakov don't know! They've announced so far that this time, for real, it's gonna support AVX10.2, but Alder Lake was gonna ship with AVX512 too, right up until it didn't.

At this point they've changed their minds so many times on this that I'll only believe it when it's on shelves.

I am quite excited about getting APX for integer code though, which they seem less likely to back out of. I hope?

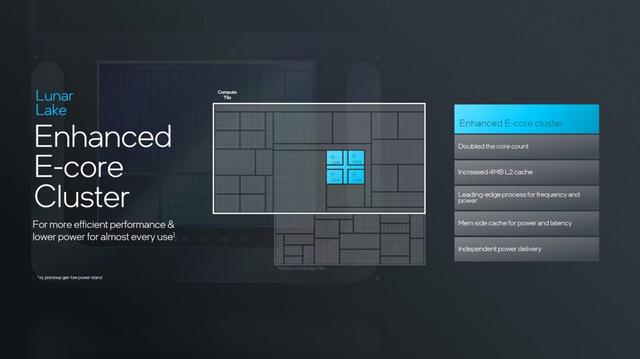

@pervognsen Plus at this point the E-cores are real beasts as well.

They're well above Skylake class by now.

If you have random data/code access or need all the mem BW you can get, the P-cores are your best bet, but the E-cores are actually pretty good at code that is running loops and not just chewing through mem BW and branch history entries like they're going out of fashion

@pervognsen I mean, look at this bugger: https://chipsandcheese.com/p/skymont-intels-e-cores-reach-for-the-sky

"small" core my ass