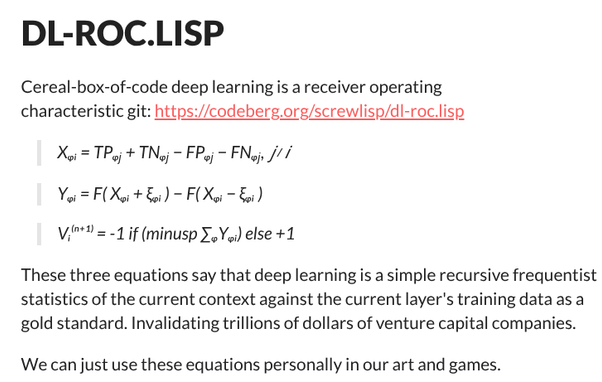

The third equation is literally

> take the mean average

> if it is negative do the negative thing, otherwise do the positive thing.
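Spelled out as code, that reading of the rule is tiny. This is only a sketch: what exactly gets averaged is not stated here, so `values` is a hypothetical list of signed numbers, and -1 / +1 are stand-ins for "the negative thing" and "the positive thing".

```python
from statistics import mean


def mean_then_sign(values):
    """Average the values, then pick a branch by the sign of the average."""
    m = mean(values)
    # Assumption: -1 stands in for "the negative thing", +1 for "the positive thing".
    # A mean of exactly zero falls into the "otherwise" (positive) branch.
    return -1 if m < 0 else 1
```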

@riley Yes, thanks for the clarifying note.

I guess I want to divide claims and theories about deep learning by trillion dollar companies as being either about

- deep learning (about their battleships computer program implementation)

- and parochial company-level issues not pertaining to deep learning / battleships.

Where the latter is often misconstrued to be the former.

@screwlisp You might want to look up a 'perceptron'. It's a classic feed-forward model, built of classic ideas of what a model neuron looks like, but it's not 'deep', and it's relatively simple to understand. These were in common use way back when computing power was so expensive that 'deep' models were fanciful and impractical, but they could do some real work running atop seventies' and eighties' hardware.
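For a feel of how small such a thing is, here is a minimal sketch of a single threshold unit trained with the classic perceptron learning rule. The function names, the learning rate, and the choice of Boolean AND as the toy task are assumptions made for illustration, not anything from a specific historical system.

```python
def step(z):
    """Threshold activation: fire (1) when the weighted sum is non-negative."""
    return 1 if z >= 0 else 0


def predict(weights, bias, x):
    """Output of one threshold unit for input vector x."""
    return step(sum(w * xi for w, xi in zip(weights, x)) + bias)


def train_perceptron(samples, epochs=20, lr=1.0):
    """Perceptron learning rule: nudge weights by (target - output) * input."""
    weights = [0.0] * len(samples[0][0])
    bias = 0.0
    for _ in range(epochs):
        for x, target in samples:
            error = target - predict(weights, bias, x)
            weights = [w + lr * error * xi for w, xi in zip(weights, x)]
            bias += lr * error
    return weights, bias


# Toy task (an assumption for the demo): learn Boolean AND.
AND_SAMPLES = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
and_weights, and_bias = train_perceptron(AND_SAMPLES)
```

After training, `predict(and_weights, and_bias, x)` reproduces AND for all four inputs. A lone unit like this can only learn linearly separable functions.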

Ironically, some insight from much more powerful hardware can be back-ported, allowing the old-time tiny computers to run significantly more complex neural networks than what used to be considered their limit back when these computers were not yet "obsolete". They really can't come close to what a modern GPU or even a mere scalar FPU / DSP can do (that's a massive qualitative difference), but they can do amazing things that would have been deep magic to Original Eighties' experienced computer-petters.

@screwlisp This is the first specific model of artificial neurons: https://annas-archive.gl/scidb/10.1007/bf02478259/. (You can tell how broken our copyright system is by how this paper, from 1943, is still paywalled by Vinger Sprerlag.)

You'll note that it precedes the public knowledge of stored-program computers, but already builds on some ideas from 'business machines', particularly the digital / Boolean logic that was all the rage in those days. Modern artificial neurons typically don't try to be quite that discrete; they often use some form of fuzzy values, and thus usually require floating point calculations. Curiously, it turns out that in a lot of cases, artificial neurons are not particularly precision-sensitive, although they tend to be dynamic range sensitive, so things like 16-bit floating-point numbers have been successfully used to build useful neural networks. Intuitively, something like 8-bit ulaw (µ-law) style coefficients would probably also be useful, possibly at the cost of requiring slightly more layers for some types of processing, but I don't think I've seen published results on this. I can think of several teams who would almost certainly have played with them; the lack of major published results suggests that there might be some curious issues, and I don't quite know what these are, so I can't too confidently speculate on potential useful work-arounds, but tentatively, it might well be that the sweet spot is around 10–12 bits, and in a world dominated by 8-bit octets, 16 bits is just the convenient byte-aligned size.
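To make the "dynamic range matters more than precision" point concrete, here is a rough sketch comparing a plain linear 8-bit code with a ulaw-style (µ-law) companded 8-bit code for a coefficient in [-1, 1]. It uses the continuous textbook companding formula with µ = 255, not the segmented encoding of actual telephony codecs, and the function names and the signed [-127, 127] code range are assumptions made for the demo. It says nothing about whether networks actually train well with such coefficients.

```python
import math

MU = 255.0  # companding constant conventionally used for 8-bit mu-law


def mulaw_encode(x, mu=MU):
    """Map x in [-1, 1] to a signed integer code in [-127, 127], log-spaced."""
    x = max(-1.0, min(1.0, x))
    y = math.copysign(math.log1p(mu * abs(x)) / math.log1p(mu), x)
    return round(y * 127)


def mulaw_decode(code, mu=MU):
    """Inverse of mulaw_encode, up to quantisation error."""
    y = code / 127
    return math.copysign(math.expm1(abs(y) * math.log1p(mu)) / mu, y)


def linear_encode(x):
    """Map x in [-1, 1] to a signed integer code in [-127, 127], evenly spaced."""
    x = max(-1.0, min(1.0, x))
    return round(x * 127)


def linear_decode(code):
    """Inverse of linear_encode, up to quantisation error."""
    return code / 127
```

With these definitions, a small coefficient such as 0.001 rounds to zero in the linear code, while the companded code recovers it to within a few percent. The price is coarser steps near ±1.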

A logical calculus of the ideas immanent in nervous activity - Anna’s Archive

Warren S. McCulloch; Walter Pitts (1. College of Medicine, Department of Psychiatry at the Illinois Neuropsychiatric Institute, University of Illinois, USA; 2. The University of Chicago, Chicago, USA) Because of the “all-or-none” character of nervous activity, neural events and the relations... Springer; Springer-Verlag; Springer New York LLC; Mathematical Biology, Inc; Springer Science and Business Media LLC; Society for Mining, Metallurgy and Exploration Inc. (ISSN 1522-9602)

@screwlisp FWIW, the canonical useful perceptron model that I was taught at the university has three layers, "potentially more internal layers if the specific case calls for them". I'm inclined to call one- and two-layer things historical curiosities that are probably not worth too much effort as individual study objects, just as steps on the way to three-and-more-layered networks.

There's a bunch of potential ways around the XOR problem; for example, one might use a reversed activation function / operate the threshold backwards for some neurons, or possibly all neurons, and treat the plain and reversed outputs as though they came from different neurons of the previous layer. IIRC, there's complications around weights adjustment procedure for the 'every activation is available in two forms' model; complications such as 'the heuristic of the neurons that fire together learn together is not quite usable in an intuitive way anymore', but I believe people have worked with such models, and worked out some ways to train them. If you're curious, I could probably dig up some papers about them.
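As a hand-wired sketch of the 'every activation is available in two forms' idea: below, each input signal is exposed both plain and reversed, and XOR is then built from threshold units that use only non-negative weights. The helper names and the specific weights are assumptions chosen for illustration; nothing here is trained, so it sidesteps the weight adjustment complications entirely.

```python
def fires(inputs, weights, threshold):
    """Threshold unit: fire (1) when the weighted sum reaches the threshold."""
    return 1 if sum(w * x for w, x in zip(weights, inputs)) >= threshold else 0


def with_reversed(signals):
    """Expose every signal in plain and reversed form, as if from separate neurons."""
    return list(signals) + [1 - s for s in signals]


def xor(a, b):
    x = with_reversed([a, b])           # [a, b, not a, not b]
    h1 = fires(x, [1, 0, 0, 1], 2)      # a AND (not b)
    h2 = fires(x, [0, 1, 1, 0], 2)      # (not a) AND b
    return fires([h1, h2], [1, 1], 1)   # h1 OR h2
```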

Alternatively, your neurons might allow for negative weights/coefficients. AFAIK, this is inconsistent with genuine biological neurons, so computational neurologists tend to frown upon it for that reason, but as a mathematical model, it should work out.
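A minimal hand-built sketch of the negative-weight route: two hidden threshold units compute OR and AND, and the output unit subtracts the AND signal via a weight of -1. The weights and thresholds are picked by hand for illustration, not learned.

```python
def fires(inputs, weights, threshold):
    """Threshold unit: fire (1) when the weighted sum reaches the threshold."""
    return 1 if sum(w * x for w, x in zip(weights, inputs)) >= threshold else 0


def xor(a, b):
    h_or = fires([a, b], [1, 1], 1)    # at least one input is on
    h_and = fires([a, b], [1, 1], 2)   # both inputs are on
    # Negative weight on h_and: "OR, but not AND".
    return fires([h_or, h_and], [1, -1], 1)
```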

A somewhat annoying thing in early ANN research was that a lot of people had great ideas that seemed to work, but oftentimes it was not well understood why they worked, and so there were weird superstition-like beliefs about them. And meta-annoyingly, I kind of suspect that at least some of my intuitive ideas about artificial neural networks are the same way.

Contrariwise, known biological neurons don't just emit one bit; they often emit series of impulses. Pure feed-forward networks don't do that; for obvious reasons, generating pulses out of static (or changing-slowly-enough-to-be-practically-static) input requires feedback of some sort. Unfortunately, these impulse patterns do not seem to be intuitive for common human ways to think about thinking, so research into them is currently largely arcane black magic. But artificial neural networks can be trained to recognise and classify impulse patterns from biological neurons in useful ways, and that's one of the current bleeding edges of research into brain-computer interfaces.
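One simple way to see why pulses need feedback is a leaky integrate-and-fire unit: it accumulates a constant input, fires when its internal potential crosses a threshold, and then resets itself. The reset is the feedback; without it, a constant input would just give a constant output. This is a textbook-style toy, and the parameter names and values below are assumptions, not a model of any particular biological neuron.

```python
def integrate_and_fire(drive, steps, threshold=1.0, leak=0.9):
    """Return a list of 0/1 outputs produced by a constant input `drive`."""
    potential = 0.0
    spikes = []
    for _ in range(steps):
        # Accumulate the input; the old potential decays by the leak factor.
        potential = leak * potential + drive
        if potential >= threshold:
            spikes.append(1)
            potential = 0.0  # feedback: firing resets the unit's own state
        else:
            spikes.append(0)
    return spikes
```

With these defaults, a drive of 0.3 fires on every fourth step, a drive of 0.6 fires on every second step, and a drive of 0.05 never fires at all because the leak keeps the potential below the threshold. So the strength of a static input ends up encoded in the pulse rate.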

@riley

Thanks!

I just used a system of a single layer of 33 neurons with my equations above: Three of them were feature neurons. In the training data, there are three outcomes: (X X O) (X X O) (X O X) and the other 30 are sort of statistical ballast.

I constructed this scenario of feature neurons:

timestep 0

(O X O)

timestep 1

(X X X)

timestep 2

(X X O)

.

The possibility of timestep 1 in a single layer model makes me wonder what is *not* possible with a single layer ANN model.

@screwlisp IIRC, the main theoretical reason behind having at least three layers is that it allows 'preconditioning' and 'postconditioning' of the signal to be done under the same machine learning regime, reducing the amount of ad hoc code to be hand-generated. For example, in the classic task of OCR, the first layer can, in a sense, deal with the character being recognised not being in the ideal location, or not having the ideal size, for further processing, and generate a bunch of signals based on which the second layer can recognise the character somewhat independently of its location within the character field. If you don't do this inside the ANN itself, you'll need that much more precise code to cut the page up into boxes for individual characters; with that extra layer / these extra layers, you can get reasonably good results with a cruder hand-written character-cutting-out mechanism.

People have used mechanisms to train the layers both separately and together. Training the network as a whole tends to dominate in later works, but, well, it's not that you have to do it like that. It just often makes things somewhat simpler, and there aren't obvious major costs to it. (The minor cost is, you can kind of enforce the internal signals to be intuitively understandable if you train your ANN piecemeal; if you train it as a whole, the internal signals are oftentimes hard to understand by a human with a logic probe. But humans kind of gave up trying to logic-probe the internals of ANNs for most purposes, anyway; in recent literature, the internal signals' meanings tend to only come up in the early introduction, when one builds the ANN by hand rather than constructing some tabula rasa and then training it.)

@screwlisp One really interesting, and counterintuitive, thing about neural networks is that a lot of the decisions that can seem important to an engineer from the Before side don't actually appear to matter too much; they can be safely made in a number of somewhat different ways, and the network can still work pretty much the same way. (Obviously, it'll have to be trained for its distinct architecture, but it can be trained on the same data, and it will work largely the same way.)

This weird phenomenon is one of the reasons why many people suspect that the things we particularly associate with human brains, most significantly, the subjective consciousness, might be able to emerge in a variety of networks of rather variable architectures, provided that their elements have certain foundational properties and that the networks are large enough.

We don't quite know what the critical properties are until we get there, though. My hunch is, the artificial neurons we currently have might be sufficient, but we're probably at least six orders of magnitude of computational capacity away from a primate-like CNS becoming feasible to emulate. We might need fewer neurons if we made them more complicated, or possibly, if we figured out the how and why of neuronal migration in vertebrate brains.

OTOH, there's some very interesting kinds of non-vertebrate brain architectures in nature, architectures that are much more efficient in their use of neurons. My favourite example is jumping spiders. For some species, it can be experimentally proven that they can process input comprising millions of bits, and solve complex problems as the ethologists understand the concept, with brains comprising only a couple tens of thousands of neurons. A couple of species have brains of fewer than ten thousand neurons, and still do complex behaviours.

It is not yet known how they do that, but it seems likely that mammalian brains cannot do what jumping spiders do with the same neuron count. In part, well, because scientists can actually grow slices of rat brains on silicon, and we have some hunch about the complexity-of-behaviour-density that these can reach. The critical difference is not necessarily in the architecture of individual neurons, though; it is possible that the jumping spider brains have more detailed genetic architectures whereas mammalian brains have kind of been optimised for generality, with relatively few genetically built-in specific patterns. This high degree of flexibility is likely rather wasteful; we only have it because dinosaurs without it used to die of #FutureShock when the world started to change relatively rapidly.

We understand some basics of how genes encode and implement the general body plans of creatures. The best-understood part of this is the Hox, or Homeobox, gene network; it exists in pretty much all Terran creatures with a bilateral body symmetry at least in some part of their life cycle (there's some creatures that are only temporarily bilateral), and the fundamentals are very highly conserved. Somewhat simplifiedly, on the longitudinal body axis, the body plan develops as a sort of chemical interference pattern, with genes to build individual organs, in the first approximation, activating on the basis of very specific ratios of growth factor protein levels.

It seems likely that some basic brain structures are encoded in somewhat similar ways. We do see the Hox genes' involvement in the development of the neural tube, but scientists' current understanding of how this affects different brain architectures is fairly limited. #MoreResearchIsNeeded.

Some other interesting invertebrate creatures with much-more-efficient-than-mammalian brains are some molluscs, particularly octopi, and praying mantises.

As other vertebrates go, birds have brains very different from mammals, of (very roughly) comparable neuron counts, but synaptically significantly denser, and organised so differently that only a couple of decades ago, some neurologists would, with straight faces, argue that birds can't think since they don't have neocortices. Well, turns out, some birds manage to think well enough without a neocortex, and thinking that one is required for thinking is effectively an exercise in mammalian chauvinism. But we understand avian intelligence even worse than we understand the mammalian kind, and mammalian intelligence we understand very poorly to start with.

On the third hand, the human way of growing brains that can do language appears to boil down to a very small number of specific 'root' gene alleles. Of the known ones, FOXP2 is the most likely one involved; the most likely one to distinguish human speech from other apes' linguistic ability. Knocking it out in humans is associated with specific cognitive and linguistic defects; transgenic mice with human FOXP2 become very 'chatty' (but, well, we can't yet tell if there's meaning in their chatter). We don't know what a transgenic chimpanzee with human FOXP2 might sound like; scientists could arrange one, but ethicists are concerned as to whether it should be done.

A catch is, the FOXP2 protein is not anything directly structural; it's a transcription factor. It up- and down-regulates dozens, perhaps hundreds, of other genes' expression. It's probably involved in representing detailed brain structure through some combination of chemical interference patterns that we can't yet interpret.

But the potential fact that a relatively small change to a high-level control gene might be able to turn complex speech capability on and off tantalisingly suggests that understanding how this works might allow ANNs to do 'true' speech, not the stochastic parroting that LLMs do.

On the fourth hand, maybe we're understanding it wrong, and what human FOXP2 does is structurally what LLMs do, and the problems of LLM parroting are just that LLMs are missing other crucial parts of brains needed for cognition. Maybe LLMs would be smarter if they had neocortices? Pity that nobody knows how to build one.

Hallucinating up things that should come from parts of the brain that are missing, unavailable, or knocked out is a known phenomenon in biological brains, after all. Based on what we know, this is likely one of these emergent phenomena of Sufficiently Complex Neural Networks that LLMs and biological brains do in a relatively similar way. In clinical neurology, it's called 'confabulation'; one of the most striking examples is the Anton–Babinski syndrome, in which a person is blind because of brain damage, but the damaged visual cortex interface confabulates up enough fake visual input that the patient adamantly and genuinely believes that they can see, even though they can't. (Confusingly, because doctors don't think like engineers, the syndrome can also cover situations in which a patient does not necessarily feel they can see, but argues it anyway, as long as they seem to believe their confabulated reasoning for why they can see even though they keep failing vision tests.) The full syndrome in one of its two main 'pure' presentations is statistically rare, but it is a curiously recurring condition associated with focal damage to specific parts of the visual cortex, and possibly subcortical layers. (Doctors have mapped out the specific regions whose damage can cause it, but because it's a rare condition, we don't know too much about the specific kind of variance that differentiates between Anton–Babinski and the kind of vision loss that a patient can clearly perceive.)

A well-known example of the brain confabulating up visual input is the invisibility of the blind spot (the optic disc). In the middle of eyed vertebrates' visual field (not at the very centre, but usually close to the centre) is a region where the optic nerve attaches, and shadows a substantial part of the field of vision. Yet, virtually all seeing humans' brains are inherently configured to not see that hole in the field of vision, and to just Make Something Up(tm) when trying to peek into that part; the mechanism this works by is the very same confabulatory expansion of patterns. We don't quite know for sure, but based on what we do know, this phenomenon is likely universal among vertebrates with eyes.

[...] through a progression of feed-forward layers of the kind we currently know how to model, and it needs to be specifically routed to every layer, or maybe specifically hand-interpreted. But we don't yet know for sure, either way.

@screwlisp Speaking of mammals, one distinct mammalian problem is the mechanical restrictions of giving birth. This particularly affects predatory mammals, who tend to require relatively huge brains.

A common work-around is brain architectures that defer certain aspects of bulky development until after being born. Cats' visual cortex is so deferred; this is why kittens are born with eyes closed, and take quite some time to get their brain even prepped for processing visual input, and after that, take several weeks to get the visual cortex tuned and trained and pruned. (Massive pruning is probably a mammalian feature; one of the costs of having a flexible and wasteful brain architecture. All known brain structures undergo neural pruning cycles, but mammals appear to be particularly eager to grow many neurons first, and then discard those that turned out to not be needed.)

So, scientists have run experiments distorting kittens' vision in various ways, via specific spectacles, during the first few weeks after their eyes open: blocking out parts of the visual field, blocking out different parts of it, mirroring, reversing, or rotating the visual field, and, perhaps most notoriously, introducing shadowy stripes into their vision. It turns out that, when done during a specific critical part of brain development, this causes lasting brain damage. Specifically, the brain seems to assume that what it is seeing during this period of development is how the eyes of its creature work, and always will work, and prunes off its ability to process the visual field without the imposed distortions.

It's really sad for the poor cats. In some cases, they effectively have to wear the spectacles for the rest of their lives, or they won't be able to see right at all. But due to these experiments, we now know some details about how mammalian neural flexibility works, and have some hunches about its limits. On one hand, these results are likely relevant to understanding, preventing, and mitigating at least some subtypes of Adverse Childhood Experiences in humans. On another hand, this sort of self-directed unsupervised learning might be very useful for numerous kinds of artificial neural networks. We don't quite know how to regulate it, though; beyond some basic crude controls, anyway.

@screwlisp Some such critical periods are known to exist for human language acquisition. The brain's idea of phonetics, in particular, seems to necessarily be constructed relatively early on, and after that, one's somewhat stuck with the phonemes they learnt early. (This is one of the major factors behind foreign accents.) On a more vague note, humans who don't acquire their first language during a specific critical window (which, luckily, is several years long) will be deprived of a substantial part of their ability to learn any languages later in life. It appears that crucial parts of their brain are just irreversibly pruned away. 'Perfect pitch' is a classic example of a skill that can be acquired through training in childhood, but generally not after the window is missed. There's suggestions that it's related to language acquisition, but we don't quite know for sure yet.

OTOH, there's a very curious class of medicines, histone deacetylase inhibitors, that, somehow (the deep mechanism is poorly understood), appear to be able to re-open some (but evidently not all) of the critical learning windows in adult humans. The intriguing part is, these have nothing (directly) to do with neurotransmission, like many learning-related medicines do; instead, they affect gene expression regulation. From experiments, they seem to be able to temporarily increase neuroplasticity, allowing some patterns of early brain construction to be repeated. Their primary use is in epilepsy treatment and seizure prevention, and we don't even really know whether that use and the neuroplasticity modulation are closely related effects or just happen to be triggered by the same class of medicines through a coincidence.

A lot of modern medicines are developed with some advance idea as to how they might be useful, and so, with new medicines, we often have some basic idea as to how they will be working even before the first molecule becomes ready for testing. With the most famous HDAC inhibitors, salts of valproic acid, this is not the case. The molecule was synthesised really early on, back when coal tar was the bleeding edge of medicine research, even earlier than Aspirin(r), but for many decades, it was only believed to be useful as a handy organic solvent for other medicines, not as a medicine in its own right. Even now, we know the basic idea of what it does, and we can measure the effects of its doings, but we don't understand how the two things are connected. In that, it's not like Aspirin(r) at all: that is another medicine that was first synthesised, then discovered to be useful, but there, gradually, over time, scientists figured out most of the weird things that it actually does. For further complication, because valproates mess with gene expression, and are relatively simple molecules capable of crossing the placenta, they're incompatible with pregnancy. (But it's likely that non-placenta-crossing HDAC inhibitors can be synthesised.)

But, de-digressing, what these medicines do does not have a direct parallel in ANNs, because it deals with a regulatory mechanism that ANNs just don't model. If we understood how these regulatory mechanisms work in biological brains, we might be able to build similar regulatory mechanisms for ANNs, and likely allow ANNs to learn relatively similarly to how mammals learn. Which may or may not be the same way birds learn. HDAC inhibitors seem to work in a substantially similar way in mice as in humans, but while they improve learning in birds, they have effects on birds' brains that do not appear to have clear counterparts in mammal brains, and we don't yet know why that is, or even very deeply how this is. Even more bizarrely, HDAC inhibitor overdose can kill, and how it does that does not make much sense yet, either. Peculiarly, naloxone, which is primarily used to block opioid receptors and thus can reverse acute opioid poisoning, appears to also alleviate sodium valproate overdose's effects, but valproic acid is chemically nothing like opioids, and is not known to bind to opioid receptors, so #MoreStudyIsNeeded on how this actually works.

It might well be that the relation between histone acetylation and neuroplasticity is just coincidental; something on the order of 'histones control the building and pruning of synapses; keeping them acetylated increases the learning reward, and thus promotes learning; if neurons get too many rewards, they start foaming at the mouth and secreting endorphins'. We really don't yet know. But understanding whatever it is that histones do to a developing brain is likely useful for understanding how an ANN of useful complex structure could be built out of simple-ish rules.

On the other hand, if we just threw enough neurons at an ANN, it might be able to do similar things — it'd just be a couple of orders of magnitude more expensive to build and run. There's some suggestions as to this possibility, but we really don't yet know.

Interpolating backwards, the jumping spiders' brain architecture might, likewise, be substantially DNA-determined. They have histones (the generic idea of histones in cell nuclei is fairly fundamental to Terran life, actually, though there's bacteria who get by without). But there's suggestions that the relationship between their histones and their brains might be substantially different than in mammals or birds, and if we figured out whether that is, indeed, the case, and if so, why it is the case, we'd have another important clue about structuring brains.

@screwlisp I'll need to get ready for an interview, so I'll finish on a cute note: bumblebee learning. We don't yet understand how this works; insect (and arachnid) learning appears to work very differently from vertebrate learning, but it's fun for the bees. (And fun might be an important part of learning; one that does have ANN counterparts, but only weak ones so far.)

The title might be misleading, though; while bumblebees are (so far) the only known (to a degree) species of insects to enjoy rolling balls, scarabs are known to roll (and make) balls as well, and it's possible that they, too, enjoy the process.

Bumble bees become first insects known to 'play with balls'

@screwlisp Oh, and among mammals and birds, most species that clearly play are either carnivorous or highly social. Cats play. Dogs play. Elephants play. Dolphins play. Corvids play. Wildebeest may not play. Cows may not play. Sloths appear to not play. Pandas might not play (which would be weird; most other bear species play). Flying foxes play. Goats play, but sheep might not. &c., &c.

Bumblebees are not predatory, so if they follow the same pattern, bumblebee playing is related to the bees' social structures. If that is, indeed, the case, then one likely implication of it would be that beehive societies can probably be arranged in several radically different ways, which the bees of the hive can likely just learn to handle. Honeybee democracy might not be just a joke; it might be an #HHOS joke.

@screwlisp This book postulates an inherent connection between learning and fun. It's plausible, but it might also be that this is a mammal-centric, or maybe even carnivore-centric or primate-centric view. If this pattern holds generally, it might help explain why bumblebees play. But we don't know if this is a safe generalisation.

@screwlisp A potential reason why many herbivorous herding (mammal) species have to get by without play, or without complex play, might be that they can't afford the time of post-birth brain-building that kittens have: their brains need to be very nearly ready for herding along with the adults at birth, or they'll risk being eaten right away. This probably also relates to how elephants are a long-lived K-selected species, and sheep and wildebeest species tend to be much shorter-lived species, perhaps not quite clearly r-selected, but a lot closer to r-selected than elephants.

Cetaceans might possibly kink this pattern, though.

@screwlisp ... which, in turn, might relate to the other cetacean weirdness: of being able to sleep half of a brain at a time. https://www.smithsonianmag.com/smart-news/dolphins-sleep-with-only-half-their-brain-at-a-time-81426439/

Sleep does not have a clear analogue in ANNs as we currently know them. Partly, this seems to be because some important functions of sleep have to do with the weird architecture of biological synapses, such as replenishing the transmittory chemicals that have run out, and cleaning up the metabolic waste products.

But some aspects of sleep, probably including dreaming, appear to have learning-related functions that would make sense in ANNs.

Cetacean ability to do unihemispheric sleep might mean that they don't quite have to so sharply "choose" between being able to swim with the herd right away, and affording to be helpless babies while their brains are still being compiled.

Dolphins React to Bizarre Bubbles | Ocean Giants | BBC Earth

Dolphin blowing bubble rings

@screwlisp So, about bumblebee intelligence ...