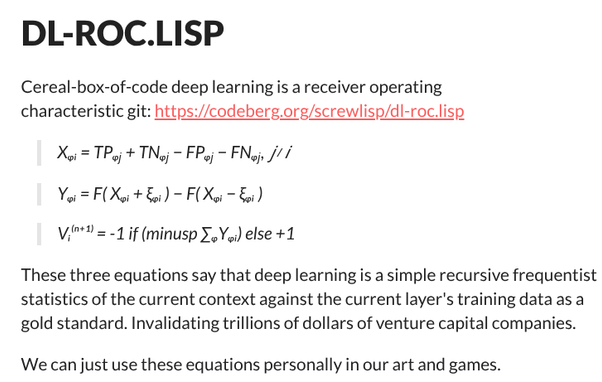

The third equation is literally

> take the mean average

> if it is negative do the negative thing, otherwise do the positive thing.
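
Read that way, the quoted description fits in a few lines of code. This is only a sketch of the wording above, not of the actual equation in the image: `mean_then_branch`, `negative_thing` and `positive_thing` are hypothetical names standing in for whatever the two branches really compute.

```python
from statistics import fmean


def mean_then_branch(values, negative_thing, positive_thing):
    """Take the mean, then pick a branch based on its sign.

    Both callbacks are placeholders (assumptions) for the two cases
    that the quoted description leaves unspecified.
    """
    average = fmean(values)
    if average < 0:
        return negative_thing(average)
    # "otherwise": a mean of exactly zero also lands here
    return positive_thing(average)


# Usage with trivial stand-in branches
label = mean_then_branch([-2.0, 1.0, -5.0], lambda m: "negative", lambda m: "positive")
```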

@riley Yes, thanks for the clarifying note.

I guess I want to divide the claims and theories about deep learning made by trillion-dollar companies into those that are about

- deep learning (about their battleships computer program implementation)

- and parochial company-level issues not pertaining to deep learning / battleships.

The latter is often misconstrued as the former.

@screwlisp You might want to look up a 'perceptron'. It's a classic feed-forward model, built of classic ideas of what a model neuron looks like, but it's not 'deep', and it's relatively simple to understand. These were in common use way back when computing power was so expensive that 'deep' models were fanciful and impractical, but they could do some real work running atop seventies' and eighties' hardware.
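
To give a feel for how small these things are, here is a minimal sketch of a single-neuron perceptron with a step activation, trained with the classic perceptron update rule on a logical AND. The names (`predict`, `train`, `AND_GATE`), the learning rate and the epoch count are choices made for this illustration, not anything from a particular historical implementation.

```python
def predict(weights, bias, inputs):
    """Weighted sum of the inputs plus bias, passed through a step function."""
    total = bias + sum(w * x for w, x in zip(weights, inputs))
    return 1 if total >= 0 else 0


def train(samples, epochs=20, learning_rate=1.0):
    """Perceptron learning rule: nudge the weights by the prediction error."""
    weights = [0.0] * len(samples[0][0])
    bias = 0.0
    for _ in range(epochs):
        for inputs, target in samples:
            error = target - predict(weights, bias, inputs)
            weights = [w + learning_rate * error * x
                       for w, x in zip(weights, inputs)]
            bias += learning_rate * error
    return weights, bias


# Logical AND is linearly separable, so a single neuron can learn it.
AND_GATE = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
weights, bias = train(AND_GATE)
predictions = [predict(weights, bias, inputs) for inputs, _ in AND_GATE]
```

No matrix library, no GPU, just additions, multiplications and a comparison, which is why this kind of model was feasible on very modest hardware.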

Ironically, some insights from much more powerful hardware can be back-ported, allowing the old-time tiny computers to run significantly more complex neural networks than were considered their limit back when these computers were not yet "obsolete". They really can't come close to what a modern GPU or even a mere scalar FPU / DSP can do (that's a massive qualitative difference), but they can do amazing things that would have been deep magic to the Original Eighties' experienced computer-petters.

@pedromj Fun fact: ELIZA was not actually originally an AI project; it kind of got adopted by AI researchers after it became obvious how handily it tended to deceive humans. Weizenbaum developed it mostly as an exercise in what would become known as 'natural language user interfaces', and, sadly, all later applications of his work have consisted of a technically solid core and various whimsically flimsy applications. Probably a factor in why Weizenbaum had some rather profane things to say about AI hype. (And also about abusive authoritarian uses of high-power computers, incidentally.)