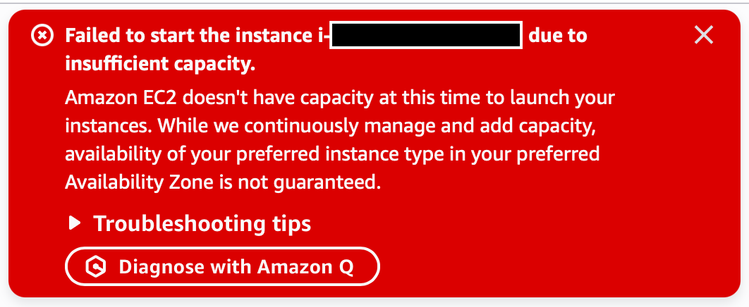

Huh, I'd never seen this before. This was for a c7i.2xlarge, which I appreciate isn’t as ubiquitous as a t3.micro or something, but I'm still surprised.

This is a good sign when it comes to the bubble.

Unlike traditional software, which has near-zero marginal cost, AI workflows incur a real expense every time they run (tokens/inference, retrieval, tool calls, memory updates), so margins can compress quickly as usage spikes.
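To make the margin point concrete, here is a rough sketch of the arithmetic for a single flat-rate subscriber. Every number in it is hypothetical (the `PRICE` and `COST_PER_RUN` values and the `gross_margin` helper are illustrative assumptions, not figures from any real product), and it ignores fixed costs entirely.

```python
def gross_margin(price_per_month: float, runs_per_month: int, cost_per_run: float) -> float:
    """Gross margin (as a fraction of revenue) for one flat-rate subscriber."""
    variable_cost = runs_per_month * cost_per_run
    return (price_per_month - variable_cost) / price_per_month


# Hypothetical figures, chosen only to illustrate the shape of the curve.
PRICE = 20.00        # assumed flat subscription price per month
COST_PER_RUN = 0.02  # assumed blended cost of one workflow run (tokens, retrieval, tool calls)

for runs in (100, 500, 1000, 2000):
    margin = gross_margin(PRICE, runs, COST_PER_RUN)
    print(f"{runs:>5} runs/month -> gross margin {margin:.0%}")
```

Under these assumed numbers, the same customer paying the same price goes from a healthy margin at 100 runs a month to break-even at 1,000 runs and a loss beyond that. With near-zero marginal cost, that line would stay essentially flat regardless of usage.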