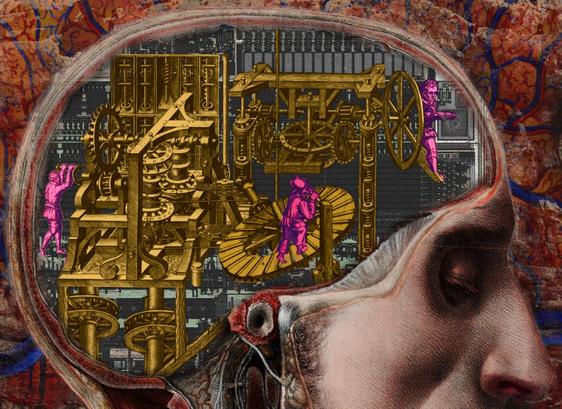

"AI psychosis" is one of those terms that is incredibly useful and also almost certainly going to be deprecated in smart circles in short order, because it is: a) useful; b) easily colloquialized to describe related phenomena; and c) adjacent to medical issues. There's a group of people who feel very strongly that any metaphor that implicates human health is intrinsically stigmatizing and must be replaced with an awkward, lengthy phrase that no one can remember and only insiders understand.

1/