In this blog post, I walk through a hands-on example of using AI tools on a legacy system to build the foundation for a modern software solution.

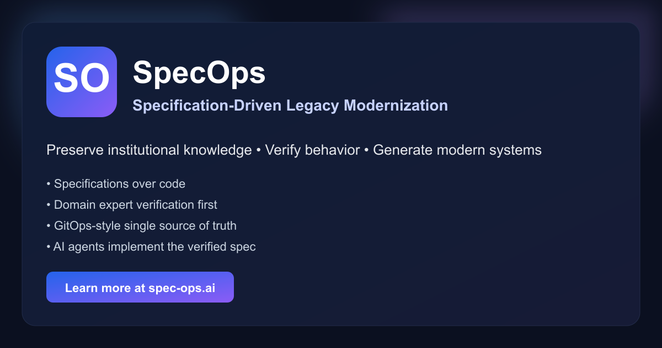

This is the SpecOps method in practice.

https://spec-ops.ai/blog/posts/reverse-engineering-legacy-app/