Analog in-memory computing attention mechanism for fast and energy-efficient large language models - Nature Computational Science

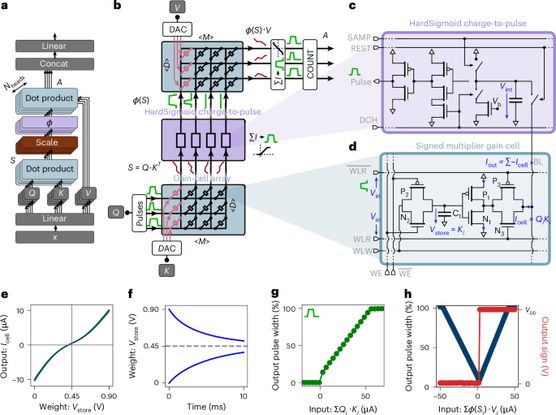

Leveraging in-memory computing with emerging gain-cell devices, the authors accelerate attention—a core mechanism in large language models. They train a 1.5-billion-parameter model, achieving up to a 70,000-fold reduction in energy consumption and a 100-fold speed-up compared with GPUs.