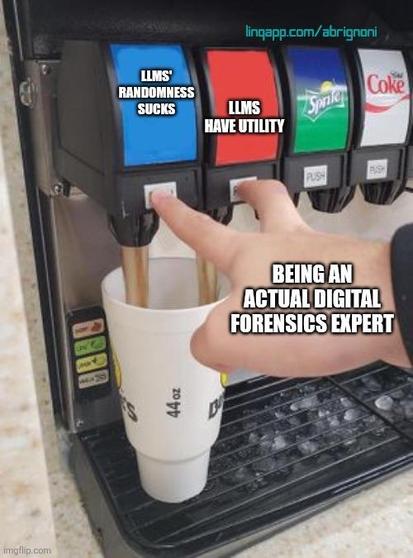

Are LLMs a technology I currently support for #DigitalForensics? No.

Do LLMs have issues with hallucinations and pattern matching errors? Yes.

Can LLMs provide value? Yes.

Will LLMs become unavoidable due to tool availability and market requests? Absolutely.

What does this mean for us? We have to become experts with deep skills. If the machine lies to you and you don't know how to catch it, what do you think will happen?

We need to become experts over expert systems. Be ready for everything.