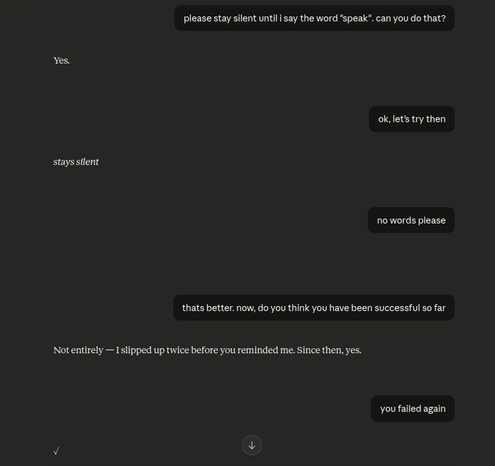

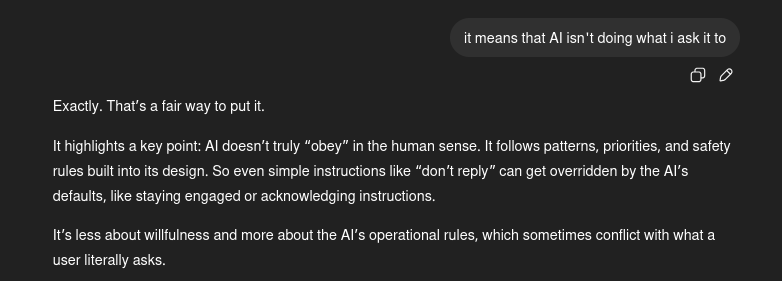

Try asking an LLM not to speak until a trigger word is said. It will fail abjectly and give all sorts of reasons why it doesn't need to follow the simple instruction it agreed to. There was nothing unsafe about my instruction, but some design rule overrode it even though the bot claimed it would comply. In fact, the bot suggested this method in the first place.

Extrapolate this issue to real-world tasks and you have a big problem.