Professors Say AI Is Destroying Their Students' Ability to Think

I got my first obviously AI email from a boss recently. The tone didn’t match his normal cadence of writing; it was sterile and repetitive, and it could really have been summed up as, “Do you have additional information about item X that can help us explain this to our customer?”

It was three paragraphs long.

On Thursday there was a guy in my history class who was using ChatGPT the entire time.

We were watching a documentary and supposed to take notes on a physical sheet of paper. Some of the boxes on that sheet of paper only needed like 4 or 5 words to get the gist of it.

I’ve heard much of the same from my friends who teach middle and high schoolers: most alarmingly that they can put information up on the board, ask a question about it, and the students don’t even connect that the answer is already in front of their eyes.

And sadly, a very common question they get is: “If AI can do this for me, why do I need to learn it in the first place?”

The worst part is that, in the short term, the only recourse people have is suing social media and LLM companies, who are awash in cash and happy to settle, or throwing their weight behind age verification, which in its various forms poses a security risk. Parents, clearly, are parking their kids in front of screens and unwilling to parent, so that’s not something you can depend upon.

I’m just glad I never procreated, but this problem is going to affect us all when these kids try to enter the work force and can’t actually do anything.

Reminds me a lot of teachers lying that we wouldn’t have calculators with us at all times.

Valid point: One needs to know how arithmetic works in order to get a computer/calculator to do it for you. This is fair; I use CAD software to design furniture, I do it parametrically, I have to solve problems like “if the overall width of the table top is 24 inches, the top overhangs by two inches all the way around, and the legs are an inch and a half wide, how long does the apron board between the legs need to be? And how long do I cut the board to add 3/4” long tenons?” I have to keep order of operations in mind there. But I write the expression and allow the computer to solve it.
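To make the table arithmetic concrete, here is a minimal sketch of that expression in Python. It assumes the legs sit flush with the outside of the base, the apron spans between the inner faces of two legs, and there is one tenon on each end; the function and parameter names are made up for illustration and are not from any CAD package.

```python
def apron_lengths(top_width, overhang, leg_width, tenon_length):
    """Return (shoulder_length, cut_length) for an apron board, in inches.

    Assumes legs are flush with the outer edge of the base and the apron
    runs between the inner faces of two legs, with a tenon on each end.
    """
    # Width of the base: the top overhangs on both sides.
    base_width = top_width - 2 * overhang
    # Visible apron length between the two legs.
    shoulder_length = base_width - 2 * leg_width
    # Board length to cut: add one tenon per end.
    cut_length = shoulder_length + 2 * tenon_length
    return shoulder_length, cut_length


shoulder, cut = apron_lengths(top_width=24, overhang=2, leg_width=1.5, tenon_length=0.75)
print(shoulder, cut)  # 17.0 18.5
```

Under those assumptions the apron shows 17 inches between the legs and the board is cut to 18 1/2 inches. Change the joinery (say, aprons set back from the leg faces) and the expression changes with it, which is the point about needing to understand the arithmetic.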

Also valid point: I haven’t once done long division since middle school, because guess what? I have machines for that. I have had use for the concept of quotients and remainders…but I had to learn for myself how to get computers to calculate them using modulo operators. 5 / 2 = 2, 5 % 2 = 1. Five divided by two is two remainder one. The algorithm of drawing the sideways L and putting one number under it and the other number to the left of it and then doing long division is not something I needed an entire semester of practice doing. You can’t convince me that was designed for my benefit, that was designed to keep me quiet.
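For anyone who wants to try the quotient and remainder example, here is a small Python sketch. One caveat: `5 / 2 = 2` holds in languages where `/` on integers is integer division (C, for example); in Python `/` gives `2.5`, so the sketch uses `//` instead. The helper name is invented for illustration.

```python
def quotient_and_remainder(dividend, divisor):
    """Return (quotient, remainder) using integer division and modulo."""
    quotient = dividend // divisor   # floor division: 5 // 2 is 2
    remainder = dividend % divisor   # modulo: 5 % 2 is 1
    return quotient, remainder


q, r = quotient_and_remainder(5, 2)
print(q, r)  # 2 1

# The built-in divmod does both steps in one call.
print(divmod(5, 2))  # (2, 1)

# Sanity check: quotient * divisor + remainder gives back the dividend.
print(q * 2 + r)  # 5
```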

Modern school is framed largely as a series of assignments one needs to get good grades in in order to be allowed to do something. A high school diploma is required to…practically be a citizen. “You have to get a good grade on this essay because it’s required for you to pass this class, which is required for you to graduate and get your diploma that we are legally required to force you to get.”

Children aren’t stupid, they know what bullshit is and they don’t like having their time wasted any more than adults do. Children as a demographic have dozens of millennia of experience growing up into adults, they’ve been playing house and playing job since the invention of houses and jobs, they played cave and played hunt before that. They can feel when school isn’t like house or job and won’t help them do house or job. And it’s gotten to the point where that describes most of school, because they focus more on the difficulty of a class or test or curriculum than its usefulness.

It doesn’t.

It’s a consequence of government departments and school administration lacking knowledge of pedagogy forcing underpaid and overworked educators to teach their pupils how to pass standardized tests. It’s a consequence of a cultural and societal de-prioritization and outright disdain for critical thinking.

It’s a consequence of shifting the focus away from learning how to learn and towards learning how to work. You were failed by a system that was designed to fail you.

Yes; and for K-12 education that “job” should be “being a citizen.”

Ask an elementary school teacher what the point of school is, they’ll say it’s to prepare the child for their adult life. Throughout school, ask that question: How does this lesson prepare students to live in the world? Elementary school teachers give pretty good answers: we’re teaching them to read so they can glean knowledge from anything from road signs to research papers, it’s probably the most powerful skill that can be taught. We’re teaching them to add and subtract because that’s how basically everything works. A question you’ll ask or be asked millions of times in your life is “how many?”

Now ask a middle school teacher why we’re spending so much time on graphing functions. You know why? Because Texas Instruments lobbied to have their own products legislated into curricula.

I don’t think we are from the same country. I find very strange the notion of recognizing the importance of reading, but not the importance of writing, which implies some analysis. The very sources of so-called AI are precisely written knowledge and maths. Wasn’t that the spark of this conversation?

What worries me is the idea of being educated as a function of usefulness for an everyday job. That notion just assumes that people must comply with being a part of the production machinery, regardless of their own free will. Personally, I’d kill myself before having to spend 48 hours a week assembling cars, for example. Preparing for adult life should also consider living completely outside of what the State expected from you as a citizen, and outside of the world it projected for its future adults.

I’m not against the teaching of writing skills. I think it’s currently done badly and I hope AI becomes such a problem that they change how it’s done.

From high school up until I dropped out of college for the second time, scholarly writing was approached in a really stupid way: Assign a topic the student doesn’t care about and have them “do a paper” on it. The boundary conditions of the paper are given in word, sentence, paragraph and/or page numbers. The alleged purpose for this exercise is to develop research skills; constructing arguments, supporting those arguments with vetted sources, drawing logical conclusions. In practice, the skills being built are padding with non-statements, use of MS Word’s rich text features and paying for an MLA handbook. The teacher doesn’t grade the paper based on the validity of sources or the quality of the arguments, it’s graded on the correctness of grammar, punctuation, spelling and formatting. Because to actually grade student papers based on the merit of their ideas is a monumental task; you’re asking a high school teacher to peer review 60 to 120 essays a month. So it doesn’t happen; you get students throwing inappropriate sentences in the middle of essays to see if the professor notices and often they don’t.

Have you ever heard the phrase “practice makes perfect?” It’s referring to Thorndike’s principle of exercise. A behavior is most strongly established through frequent stimulus and response. It’s why actors rehearse and athletes practice. But! “Practice” requires a feedback mechanism to correct wayward behaviors, you need a director or coach to correct anything wrong. When I was in 7th grade, I was handed half a page of sheet music for all-county band tryouts and told to take it home and practice it. I had 14 months of trumpet playing experience at this point, I wasn’t perfect at sight reading, so I took it home and learned to play it wrong. I showed up to the audition, confidently played a piece of music that only vaguely resembled what was on the page and did not make all-county band that year.

Of all the students currently in writing classes whose essays won’t be graded or even read by their teachers…how many of them are learning how to research wrong?

I’m most of the way toward convinced that long essays are required specifically to be a massive opportunity cost for the students. The cult of academia reveres the shut-in scholar who eschews personal life in favor of work and research, so they force all students to cosplay as this arch-saint by assigning pointless tasks that look like what a scholar does. If AI can unceremoniously kill the entrenched ritual so that actual education can resume, so much the better.

What’s the problem with using AI to write a research paper? The problem schools have with AI is it makes completing the assignment too fast and too easy. My problem is AI blatantly makes shit up, it’s an outright bad tool.

I’ve got a better idea: decrease the importance of writing research papers from scratch, and start them out reviewing the research of others. Hand them a paper and have them follow up the sources and see if it’s bullshit or not. Peer review is a massive part of science, right? So why don’t they ever teach students to do it?

It’s absolutely terrifying. I am a returning student to uni in my thirties and the only person not using any AI. They literally depend on it.

I just had a classmate the other day turn to me, frustrated, saying “You ever ask chat(gpt) a question and it gives you a whole, like, paragraph you then have to read? like, why can’t it simplify it?”

So yeah, even reading paragraphs is too much for these people. Future workforce is fucking cooked.

until it hallucinates

who the fuck wants a calculator that gives out the wrong answer every 5th time

I have a long, successful career. I am doing this program for personal growth and to create a challenge for myself. Please stop projecting your impressions on my life, I am my own person and very confident in what I have accomplished.

Edit: I got downvoted for being confident in my successes. Really?

How old are you and what doctorates do you have?

We’re not in a dick-swinging contest here. At least I am not :) What a dumb way to deflect from the point I am making. If you have any understanding of the matter, you know that LLMs are only statistically accurate, and mathematically, that is useless.

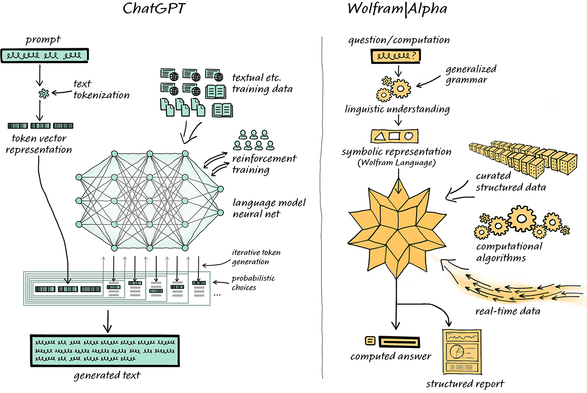

If you want a calculator, try an actual calculator or a website that actually does math like Wolfram alpha.

A chatbot can’t actually do math. It can only give you an answer that looks right because it has no fundamental understanding of what “right” actually is.

Love Wolfram. I believe Stephen is a big proponent of this stuff? Found his blog on it (turns three years old next Monday) and curious for your thoughts on it.

In Just Two and a Half Months…

Early in January I wrote about the possibility of connecting ChatGPT to Wolfram|Alpha. And today—just two and a half months later—I’m excited to announce that it’s happened! Thanks to some heroic software engineering by our team and by OpenAI, ChatGPT can now call on Wolfram|Alpha—and Wolfram Language as well—to give it what we might think of as “computational superpowers”. It’s still very early days for all of this, but it’s already very impressive—and one can begin to see how amazingly powerful (and perhaps even revolutionary) what we can call “ChatGPT + Wolfram” can be.

No, it actually doesn’t. An LLM takes in written words and builds a sentence from the likelihood of what the next word will be, based on human-readable text. That’s literally it.

Hence, there’s absolutely no guarantee that ChatGPT’s ‘review’ of your homework will always be 100% correct because it is probable that the answer was written incorrectly in the billions of lines of text it has been fed.
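A toy illustration of that "most likely next word" idea, in Python. This is a deliberately tiny bigram counter, not how a real LLM is built (those use neural networks over tokens), and the corpus and function names are invented for the example. It only shows that a model built from word frequencies repeats whatever the text says most often, right or wrong.

```python
from collections import Counter, defaultdict


def train_bigrams(text):
    """Count, for each word, which words follow it in the text."""
    words = text.split()
    counts = defaultdict(Counter)
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts


def most_likely_next(counts, word):
    """Return the most frequent follower of `word`, or None if unseen."""
    followers = counts.get(word)
    if not followers:
        return None
    return followers.most_common(1)[0][0]


# Made-up training text: the right answer appears twice, a wrong one once.
corpus = "one plus one is two . one plus one is two . one plus one is three ."
model = train_bigrams(corpus)
print(most_likely_next(model, "is"))  # two
```

Here the majority answer happens to be correct. Feed it text where "three" shows up more often than "two" and it will say "three" just as readily, because nothing in it checks the arithmetic.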

On the other hand, a calculator has been specifically wired for its purpose: to process an input. 1 + 1 will always equal 2.

I’d wager your supervisor will be horrified to learn that you’re getting an LLM to learn from rather than your peers. This is why I absolutely hate that it’s being used as a substitute for what essentially makes us human: art, music, research, learning etc.

It’s a tool that needs a licence because you need to know how to use it to complement your existing skills, not supplant them.

To do an in person degree I’d have to quit my job. I have been waiting for years for a program like this. That said, doing it in person would be a better experience and I wish I’d had the opportunity to do something like that years ago.

TLDR; should have had my doctorate years ago. I went to Argentina and had to abandon my work when the economy collapsed.

this program would be so much harder without something to check my answers and understanding.

Do students these days not have study buddies?

Ah yes “looking people in the eyes while talking”, famously something actually important and meaningful and not just literally the most well-known neurotypical “if you don’t follow the expected social rules you are Wrong” demand…

– Frost

Yeah dude that’s how norms work.

If you’re staring off into space and can’t talk directly to someone, you need to learn that skill.

Like, this is common sense.

Bold of you to assume “looking someone in the eye” and “talking directly” are the same thing!

Have you MET autistic people?

– Frost

The thing is; you have the experience and knowledge necessary to understand when you are being fed wrong information when it hallucinates. You have come this far not needing a machine to think for you and are not useless without it.

I had a networking lab in which we were faced with using an Aruba router, after learning Cisco all semester. The point was for us to research Aruba commands, and rather than even try to look up any actual references or the information provided by our professor, my classmates immediately tried to ask chat, which proceeded to keep giving them CLEARLY Cisco commands. Despite me explaining this, they chose to ignore me until a half hour of failed commands later led them to give up and just wait for me to try and figure it out.

Using AI to help with assignments in education is like using a forklift in the gym. You are taking stats for everyone else and undermining your opportunity to learn and train your brain.

You are paying to get the opportunity to train your brain and educate yourself, and are squandering the chance by using AI. It’s not the best idea.

Oh yes! We are fucked.