⭐️ I've had a lot of questions, on- and offline, about how I start a new project with Codex, so here's what I do every time:

• I create a new project from the iOS -> Universal App (Basic) template, using this Xcode template set: https://github.com/steventroughtonsmith/appleuniversal-xctemplates

• I add my AppleUniversalCore package: https://github.com/steventroughtonsmith/appleuniversalcore

• I drop in the latest version of my CodingStyle.md: https://gist.github.com/steventroughtonsmith/ee58b8c7fe6557a073ac792bcb891267

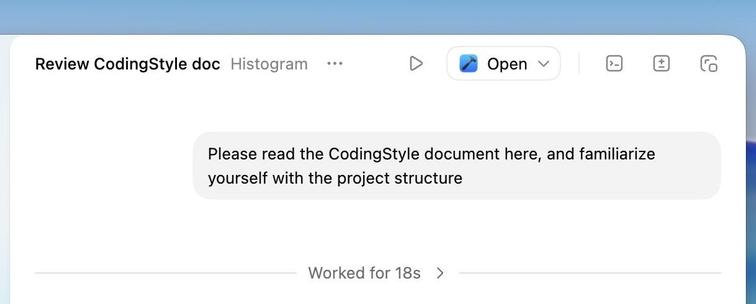

Then comes the starter prompt, usually some variation of:

"Please read the CodingStyle document here, and familiarize yourself with the project structure"