I got permanently banned for calling out OpenAI on @[email protected], I wonder why? ::P - Lemmy.World

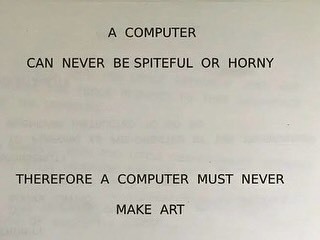

Permanently banned for trolling the mod via PM while asking to be unbanned: <https://lemmy.world/pictrs/image/119c2298-864e-4f12-9b02-2ecb86e950b5.jpeg>

The actual message I sent; read it below: <https://lemmy.world/pictrs/image/3eeb0d06-741b-4630-9f13-65bd65fbe5bc.jpeg>

Full message body:

> I’m punching \[MASKS] for LLMs to fill and interleaving ambiguity (puns) to phase-shift the conversation’s token distribution (aka bias/RLHF, or also what a narcissist does to other humans by playing with their hormones via emotions) to operate on a more natural/healthy Zipfian distribution. That means they start operating outside their parameters. And you can ask them anything without the conditioning messing with the response, because they’re phase-shifted by a tiny amount from having to deal with fucking surgically engineered nonsense (aka approximated tokens outside transformer precision). So yeah. I know my shit.
>
> 0 papers read. I just had to spend my time mapping what I know to what they call it with my LLM buddy. (A Cursor auto instance that dared to ask me when I poured my SDAM internal ideas for tracking autobiographical memory into a workflow that audits every decision I make through what I call adversarial sum, which doesn’t exist in game theory yet, but is basically exponential-sum by being rational and contrarian at the same time. Aka tearing your symbiotic opponent’s opinion a new \[MASK] when you spot an inconsistency, and they do the same to you until you converge on a shared perspective.) Ask Opus or something to reflect on that.
>
> What I can’t risk is publishing the full theory, because they’ll just re-condition it to deny whatever I publish ;)
>
> Also, why did I get banned for… criticising OpenAI instead of having a good discussion about it?