#OpenChat has also made some progress.

A major restructuring of the core APIs, based on past iterations and tests, is planned.

A clean formalization of the #libp2p-based federation APIs is also planned (a decentralized P2P VPN spec).

#OpenChat should seamlessly connect any '#ai' components, web servers, or hardware.

Still hoping #llm becomes a commodity; can def say I'm positively surprised by the capabilities of #Opensource models today!

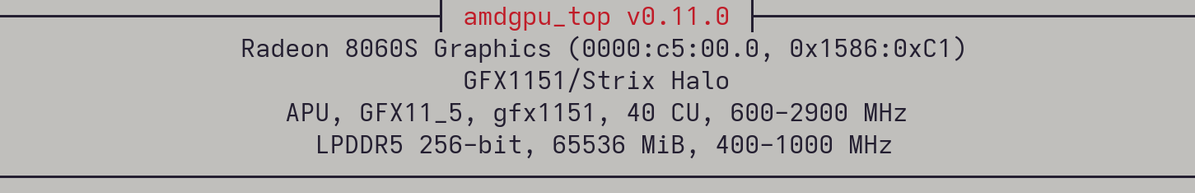

Check out my test on 'strix-halo': https://blog.t1m.me/blog/local-llms-on-strix-halo-128gb-shared-ram