Ars Technica Fires Reporter After AI Controversy Involving Fabricated Quotes

I’m not taking all the credit but I do hope those people who didn’t believe me in the past could rightfully take this comment, print it, pull down their pants and shove it up their ass.

It’s time to hold journalism to a higher standard, and this idea that “well they do alright” and “it was only once” is bullshit sliding into madness.

Just the facts, folks.

The problem with your attitude towards this is that these companies are forcing “AI” down everyone’s throat. It’s a requirement now to churn out more bullshit than humanly possible.

This person was simply fired because they didn’t catch the false information, not because they used the tools forced upon them.

Then maybe they shouldn’t be using these tools in the first place. Other Conde Nast employees have already been blowing the whistle about this, which is funny because they sued all the AI companies for stealing content.

Whether there is a news article about it or not, these shitty tools are being shoved down everyone’s throats, from developers to authors.

Then maybe they shouldn’t be using these tools in the first place

I absolutely agree, they should not write articles with LLMs. I’m just saying they’re not absolved of basic journalistic responsibility because they’re instructed to use LLM tools.

The problem here is you are both characterizing Ars as you would other companies that have these AI mandates. Ars is the opposite, they have a mandate NOT to use AI.

While I agree a separation of responsibilities is important, they had two coauthors for exactly that reason. One trusted the other for the references, not knowing that they used AI.

Either way, the initial comment is certainly not “absolutely correct” when it comes to Ars.

A fucking moron who runs around calling everything a bit when you disagree with whatever the topic is.

It’s the new CyberTruck of online insecurity.

Hope that’s “good” for you.

and “it was only once” is bullshit

They checked and then fired the author. I don’t see how this is “it was only once” implying nothing changed and it will happen again. Isn’t firing the author “holding journalism to a higher standard” already, which you ask for?

I highly doubt that. How would that even work? A third party to the publisher would have to check every statement before the issue goes to print. I can’t imagine this happening for anything other than research papers or official reports.

But I’m happy to learn something new.

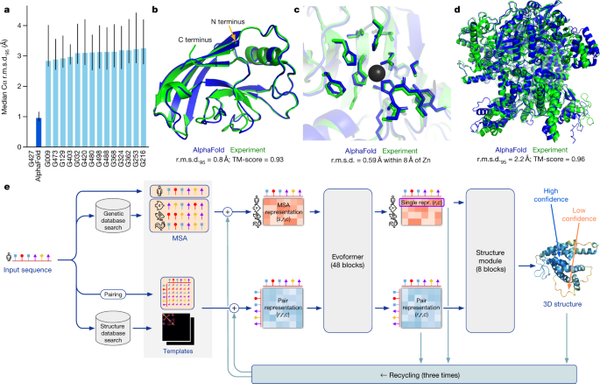

It is useful in some specific fields like protein folding:

www.nature.com/articles/s41586-021-03819-2

The problem is that people think it can replace people, which is wrong. It is a tool and should be used as such, not as a replacement.

Or, you know, double-check that the quotes given to you by the experimental AI “quote extractor” tool are accurate?

He is (was) their go-to AI reporter. It’s not like they handed the assignment to an intern and said “go nuts.”

And the article was about AI fabricating an attack on a developer that rejected its PR.

The whole point of using AI is that it’s a search tool and that is the verification.

Otherwise there’s no point in using it.

And you can guarantee Conde Nast demands journalists use AI all the time.

Amazing. Just great.

Imagine being confronted for lying and just going “hey it was an accident okay I didn’t MEAN to deceive people, I just used the machine known for deceiving people and willingly put my name on its deceptions and it deceived people!” and having people defend you.

Actually, he completely admitted to and took full responsibility for his mistake; at no point did he offer an excuse, only an explanation.

To the extent I was defending him, it was because people insisted on painting him in the worst possible light, and on misinterpreting his explanation as an excuse, not because I think that everything that he did was okay.

The comments here around this were so… Off. I guess nothing was certain, but we were supposed to believe that the author was too sick to write an article, but also writing an article and using an AI “tool” at the same time.

Hindsight is 20/20, but popular defenses at the time were:

He wrote the article himself, he just got mixed up when experimenting with using an AI tool to help him extract quotes from a blog entry. (He is the head AI writer, so learning about these tools is his job.) It was nonetheless his failure to check the quotes he was copying from his note to make sure that he got them right… but an important bit of context is that he had COVID while doing all this.

Yes…? I saw his comments weeks ago, and smelled something off about the… and apparently Ars determined they were lacking.

And now, “Edwards said he was unable to comment at this time.”

Sick time/PTO is a treasured resource here in the US. You don’t waste what little you might have on a silly thing like covid…

/s

I was the one who wrote that comment, and it was not an attempt to excuse all of his actions but a response to the following comment:

Someone deserves to be fired. Just imagine you’re paying someone to do a job and they just 100% completely outsource it to a machine in 5 seconds and then go home.

Here is the full comment that I wrote, including the part you snipped off at the end:

He wrote the article himself, he just got mixed up when experimenting with using an AI tool to help him extract quotes from a blog entry. (He is the head AI writer, so learning about these tools is his job.) It was nonetheless his failure to check the quotes he was copying from his note to make sure that he got them right… but an important bit of context is that he had COVID while doing all this. Now, arguably he should have taken sick time off instead of trying to work through it (as he admits), but this would have cost him vacation time, and the fact that he even was forced into making this choice is a systemic problem that is not being sufficiently acknowledged.

I did not downvote you—my instance does not allow or show downvotes, which is really nice!—but he was sick, and he did make a mistake, and him being fired does not make either of those things false.

Also, a ton of people were piling on him in that thread, so you had plenty of company in calling for him to be fired.

but he was sick, and he did make a mistake, and him being fired does not make either of those things false.

No, but those things also do not excuse his actions, which is why I said he should be, and ultimately was, fired. And I think that’s a positive thing.

Also, a ton of people were piling on him in that thread, so you had plenty of company in calling for him to be fired.

The point is, plenty of people were downvoting me and defending him (such as yourself), which is what made it “controversial”. I was explaining this to the person who was confused as to why it was controversial.

I agree that these things do not excuse his actions, but there was a tendency in that thread to paint him in the worst possible light, which I felt was uncalled for.

I am sad to have seen him fired from Ars because I think there were mitigating circumstances—it is troubling that he felt the need to work while sick!—but on the other hand, given how badly he violated the trust placed in him, it is hard to see how Ars could have made any other choice.

Tbf, I didn’t read the article. But the title…

Say no more. Please

No, the worker was fired and the executive whose job title is making sure that the work submitted is correct was not fired.

The executives will get a bonus this year.

The executives will get a bonus this year.

well of course! they just saved a lot of money on wages, they deserve it!