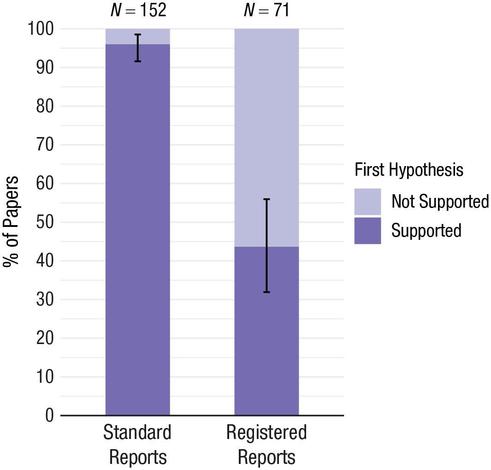

In standard scientific reports in psych, 96% of first-mentioned main hypotheses are supported. In Registered Reports, this is 46%. The 96% is clearly biased (given the true H1 rate and power). Lack of transparency means we do not know the true base rate.
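To make the "given true H1 rate and power" step concrete, here is a rough sketch of the arithmetic. Without selective reporting, the expected share of supported hypotheses is roughly P(H1) × power + (1 − P(H1)) × alpha. The function names and the example values for the H1 rate and alpha are illustrative assumptions, not figures from the thread; only the 96% comes from the tweet above.

```python
def expected_support_rate(prior_h1: float, power: float, alpha: float = 0.05) -> float:
    """Expected share of significant results if every test were reported.

    prior_h1: assumed proportion of tested hypotheses that are actually true
    power:    assumed probability of a significant result when H1 is true
    alpha:    assumed false-positive rate when H0 is true
    """
    return prior_h1 * power + (1.0 - prior_h1) * alpha


def power_needed(target_rate: float, prior_h1: float, alpha: float = 0.05) -> float:
    """Power that would be required to reach target_rate without bias.

    A value above 1 means the target rate cannot be reached honestly
    under the assumed H1 rate.
    """
    return (target_rate - (1.0 - prior_h1) * alpha) / prior_h1


if __name__ == "__main__":
    # Hypothetical H1 rates, chosen only to illustrate the calculation.
    for prior in (0.3, 0.5, 0.8, 1.0):
        needed = power_needed(0.96, prior)
        print(f"H1 rate {prior:.1f}: power needed for 96% support = {needed:.2f}")
```

Under these assumptions, a 96% support rate needs both a very high H1 rate and very high power; any required power above 1 signals that the rate is not attainable without bias.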

@lakens Might it be that standard reports tend to have higher statistical power? I have seen a fair number of RRs in which the results are ambiguous with regard to the hypothesis (in fact, I coauthored one such).

@Steve_Lindsay I am sure there are badly powered RRs but in general these should have higher power. However, we likely use RRs more for true nulls, and then power is 0.

@lakens Might be an interesting project, to select a sample of published RRs that reported NS outcomes and for each come up with a reasonable SESOI and calculate power to detect that effect.
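A minimal sketch of what that per-study calculation could look like, assuming a two-sided, two-group comparison with equal group sizes and a SESOI expressed as a standardized mean difference (Cohen's d). It uses a normal approximation rather than the exact noncentral t distribution, so it slightly overstates power for small samples. The function name and the example numbers are hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def approx_power_two_sample(sesoi_d: float, n_per_group: int, alpha: float = 0.05) -> float:
    """Approximate power of a two-sided two-sample test to detect sesoi_d.

    Normal approximation: the test statistic is treated as normal with
    mean sesoi_d * sqrt(n_per_group / 2) and unit variance.
    """
    z = NormalDist()
    z_crit = z.inv_cdf(1.0 - alpha / 2.0)      # two-sided critical value
    shift = sesoi_d * sqrt(n_per_group / 2.0)  # expected test statistic under the SESOI
    # Probability of landing in either rejection region.
    return z.cdf(shift - z_crit) + z.cdf(-shift - z_crit)


if __name__ == "__main__":
    # Hypothetical study: SESOI of d = 0.5 with 64 participants per group.
    print(f"approximate power: {approx_power_two_sample(0.5, 64):.3f}")
```

Applying a function like this to each RR with a non-significant outcome would give the power to detect whatever SESOI is judged reasonable for that study.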

@Steve_Lindsay I would assume every RR nowadays has a SESOI and a test to conclude there is nothing of interest? We could indeed extract it; we want to do a study on how people choose their SESOI anyway.

@lakens (Some) reviewers get very confused when you have multiple hypotheses but none of them is fully supported by the data... They want to know why the actual results are not mentioned in the hypothesis...