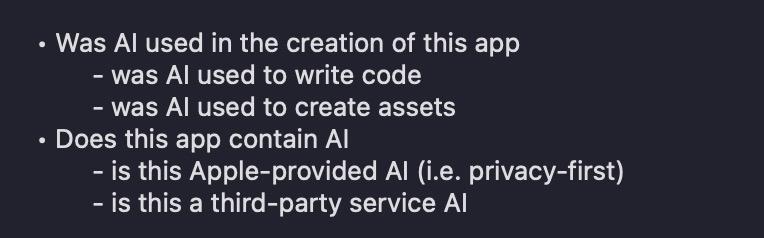

FB21948013 — [ER] Proposal: I think the App Store should have 'AI Nutrition Labels', same as privacy and accessibility ones

@stroughtonsmith I’d love to think this through. What would it look like? (Did you have suggestions in the radar?) Just what the app accesses at runtime? Or maybe including server-side handling of user-provided data? AI tools in code writing? (Debugging?) Sentiment analysis of App Store reviews?

It feels like it could be valuable to people, but I’m having trouble distilling the fields. With Xcode adding code assistants, and on-device AI used by Apple frameworks, it feels easy to get tripped up.

@stroughtonsmith If the assets were ever touched by Photoshop, I’d feel uncomfortable asserting that no AI was ever involved. If I used any open source library, I’d feel really uncomfortable asserting that there was no AI-generated code in the product. If those shouldn’t be flagged, I’d want a lot of text explaining what I should and shouldn’t consider “AI” for this.

Back to the idea that I can definitely say I don’t collect your name, and can (and intend to) fix it if I accidentally do.

@stroughtonsmith OK; I'd want that to be clear. Because that absolutely is not how several teams I've been on treat privacy labels. We perform audits and consult lawyers about them. ("Audit your entire supply chain" used to be my entire job when I worked in InfoSec.)

IMO, "a statement of intent" isn't really useful as a formal privacy label. If that's the goal, I'd just make your statement of intent (which I think would be a fine thing to do). More like "all natural" or maybe "organic."

@stroughtonsmith I think it’s more than that, having had the discussions on the team about “do we know that’s true?” and having worked to ensure that it was, explicitly so we could honestly (rather than aspirationally) apply the label.

But even so, what would the label say in that case? What would we be aspiring to? (With the expectation that where we fail, we would be transparent and work to resolve it?)