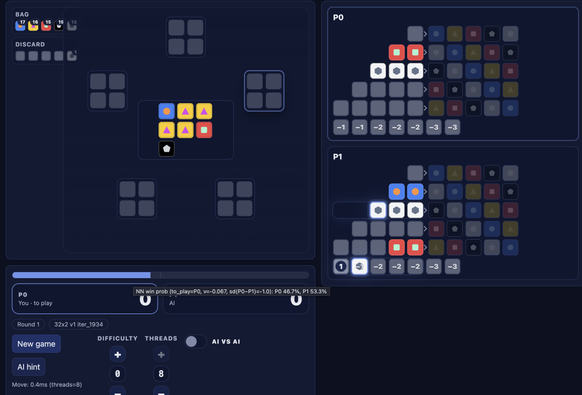

It's sort of amazing how quickly you can do things now. I wanted to try writing an AlphaZero-style AI for Azul. With no AI background, it took me maybe 2-3 hours (2-3 days wall clock) to beat the best AI I could find to play against:

I don't think the AI is superhuman, but I've just been training it on a CPU on my laptop. It's not bad, and it gets measurably better every few hours, so maybe it will get there if I just let it run for longer (or if I get a real workstation).