The lossless data compression fairies are having fun with me today...

- Scan 8.5" x 11" document at 1200dpi @ greyscale

- -> 60 MiB PNG, thank you

- Open PNG in GIMP, select a good threshold point, convert to 1bpp

- -> 514 KiB PNG

- Wait... 116:1 compression from 8-bit PNG to 1-bit PNG? HOW??

- Convert to PDF

- "Warning, this file is really huge and may actually be a decompression bomb" lol, ok.

- -> 515 KiB PDF, nice
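
A quick back-of-the-envelope check of where that ratio could come from. This is a sketch that assumes the whole 8.5" x 11" page was scanned at exactly 1200 dpi with no cropping. It computes the raw (uncompressed) pixel data at 8 bits and at 1 bit per pixel. It also compares the page's pixel count with Pillow's default decompression-bomb threshold, on the unconfirmed guess that the PDF converter uses Pillow under the hood.

```shell
#!/bin/sh
# Assumption: full 8.5" x 11" page at exactly 1200 dpi, no cropping.
# POSIX integer arithmetic only, so divisions truncate.
dpi=1200
w=$(( 85 * dpi / 10 ))        # 8.5 in -> 10200 px
h=$(( 11 * dpi ))             # 11 in  -> 13200 px
px=$(( w * h ))               # total pixels on the page

grey=$px                      # 8-bit greyscale: 1 byte per pixel
mono=$(( (w + 7) / 8 * h ))   # 1-bit: 8 px per byte, each row padded to a whole byte

mib=1048576
echo "pixels:           $px"
echo "raw 8-bit grey:   $(( grey / mib )) MiB"
echo "raw 1-bit mono:   $(( mono / mib )) MiB"
echo "bit-depth saving: $(( grey / mono ))x"

# Pillow's default Image.MAX_IMAGE_PIXELS: it warns above this many pixels
# and raises an error only above twice as many.
limit=$(( 1024 * 1024 * 1024 / 4 / 3 ))
echo "Pillow limit:     $limit px (this page is $(( px * 100 / limit ))% of it)"
```

With these numbers, the 60 MiB greyscale PNG is only about 2:1 smaller than its ~128 MiB of raw pixels, while the 514 KiB bilevel PNG is roughly 32:1 smaller than its ~16 MiB of raw bits. Dropping to 1 bit per pixel accounts for exactly 8x. The rest most likely comes from thresholding away the scanner noise, which leaves long runs of identical pixels that PNG's DEFLATE compression handles far better. The page is about 150% of Pillow's limit, which is enough to trigger the warning but not the hard error.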

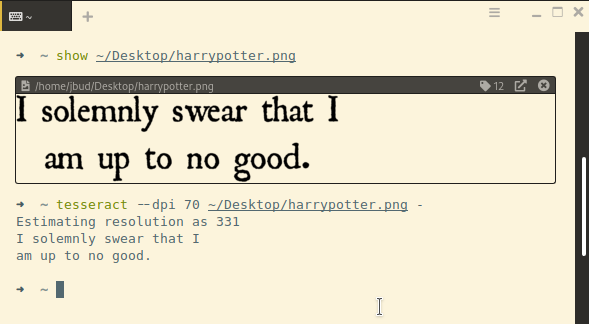

- `ocrmypdf foo.pdf document.pdf`

- -> 194 KiB PDF

- WHAT? HOW?!?

- `pdfimages -png document.pdf foo`

- -> 514 KiB PNG

- WHAT IS HAPPENING?!?

#PDF #PNG #Compression #greyscale

P.S. I found out that by default, ocrmypdf uses (lossless) #JBIG2 compression for bilevel images, provided the jbig2enc encoder is installed. That's why it was so well compressed. Also, the resultant PNG file at the end (which was basically the same PNG file that went into the PDF) was converted from JBIG2: with `-png`, pdfimages converts images rather than extracting them in their natively stored format (but `pdfimages -list` will show you what the native format is). Also, I think `pdfimages -all` will just export the native format, whatever it is, but I haven't tried that yet.
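
For anyone who wants to try those two pdfimages options, here is a minimal sketch. It assumes poppler's `pdfimages` is on the PATH and that `document.pdf` is the ocrmypdf output. The `native` output prefix is an arbitrary, hypothetical name, and the script simply skips the commands if either the tool or the file is missing. According to poppler's man page, the `enc` column of `-list` reports `jbig2`, `jpeg`, `jp2`, `ccitt`, or `image` (a plain raster, possibly Flate/LZW-compressed). The `-all` option writes JPEG, JPEG 2000, JBIG2 and CCITT images in their native encoding and everything else as PNG.

```shell
#!/bin/sh
# Assumptions: poppler's pdfimages is installed, document.pdf is the
# ocrmypdf output, and "native" is an arbitrary output filename prefix.
pdf=document.pdf
prefix=native

if command -v pdfimages >/dev/null 2>&1 && [ -f "$pdf" ]; then
  # One row per image; the "enc" column shows how each image is stored.
  pdfimages -list "$pdf"
  # Extract JPEG/JPEG 2000/JBIG2/CCITT images as-is; others become PNG.
  pdfimages -all "$pdf" "$prefix"
else
  echo "pdfimages or $pdf not found; skipping" >&2
fi
```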

🍵