@nelson I am blind. Seeing people who think I'm not worth the effort fill my timeline with AltBot-generated AI stuff that, in many cases, isn't even accurate.

Human alt text is always better, because it doesn't focus on ocular seeing. Seeing people think (and AltBot was designed around that notion) that blind people must compensate for missing "eye-seeing", but that's not the case. What I am interested in is the meaning an image has for you, its maker or publisher.

Again, human alt text is better, also because it strengthens reciprocity between seeing and blind people. AltBot doesn't, but it does make seeing people believe they have done their bit for accessibility. In reality, the reverse is often true.

!!!!!!!!!

@anantagd @nelson

hey, i hear you and these are genuinely good insights... the "meaning vs contents" thing isn't something i've thought about before or heard from other people who rely on alt-text

and i just wanna say tho, altbot already tries to push people toward writing their own alt text. there are reminders to edit posts, weekly tips saying human alt text is better, and i even had a leaderboard for human-written descriptions (for people who followed altbot but used it the least, because they wrote their own alt-text). the generated text is meant as a starting point, not a replacement. i find myself using altbot less and less and i hope more people do too.

sadly i can't really control whether people get lazy with it. but the alternative is those same people posting with no alt-text at all

and i'm always open to ideas on how to make this tool better. if there's something, anything, i could add that would help, i'd genuinely like to hear it

@micr0 @nelson but that is not the effect. People don't start writing their own alt text more; they start relying on AltBot more. So it is a replacement, which is sad, because I recognize the good intentions behind it.

I hope it is clear I don't do ocular seeing. Meaning is content too.

"The black and white image appears to show two young women in a rural setting, dressed in polka dot dresses. The scene seems serene and calm...", etc., ad nauseam. And then the image appears in someone's post and it turns out they're his lesbian great-aunts or some such.

In that case: "My lesbian great-aunts in a black and white photo from the 1960s, wearing identical polka dot dresses."

At the very least, doesn't the notion of reciprocity and valuable human exchange hold water? Seeing and blind people have little enough interaction as it is. AltBot creates an image of blind people that I consider detrimental in the long run.

@anantagd @nelson i genuinely don't know how to react to all of this... or what to do with it. your points are really good and i personally agree with them. i do think there's value in altbot for posts that would otherwise have nothing, but i also see how it might be doing more harm than good in ways i didn't anticipate, with the whole "people being lazy about it" thing.

so i want to ask: what do you think i should do, then? shut it down? change something about how it works? i swear i'm not trying to be defensive, i actually want to know what you personally think the right move is in this situation.

@micr0 @nelson I cannot tell you what to do. Your intentions are excellent. However, whenever seeing people design a Something to improve accessibility for blind people, the result is often suboptimal, because it's often trying to meet needs that aren't there. And then it only serves one aspect of that need, disregarding the others.

Also, the effect on blind people is perhaps not what you expected. I'm not a "nice" blind person. When someone does the AltBot thing and nothing else in my timeline, I think: I'm not worth the effort. Remember, I can't skim: I need to listen to all of it, beginning to end. Nowadays I recognize AltBot output immediately, even when people have amended it somewhat, and I block them and move on.

An aspect you might consider: AltBot underscores the idea that providing alt text is a heavy burden on seeing people. And often such thinking makes "blind folks", "screen reader users" into an abstraction.

Alt text on Mastodon is a beautiful thing.

@anantagd @micr0 @nelson

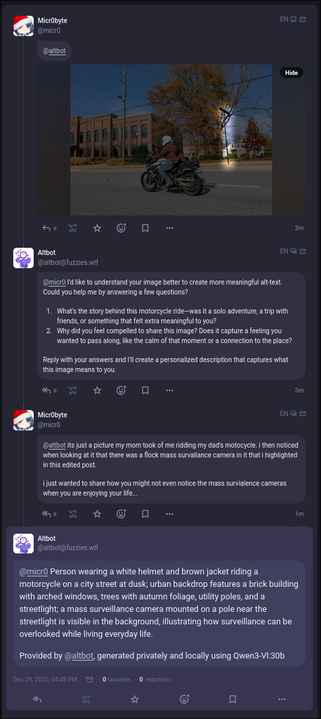

Is there maybe a solution that involves asking the users specific questions about the image and then putting that information into a coherent sentence?

Obviously it would require a total overhaul of what the bot is doing now, but maybe having it be a bit more interactive is the way forward?

@micr0 @anantagd

So it looks like it's asking, roughly, “what does this image mean to you” and “why are you posting the image”.

I feel like the generated text is missing the context of it being a candid photo of you. My gut feeling is that the description of what you’re wearing and the background are irrelevant but I’d defer to what anantagd has to say about it.

@malusdraco @micr0 @anantagd I feel like someone willing to answer the bot's questions is already putting in nearly enough effort to write the alt text themselves.

For instance, in the screenshot the user's answer is already useful as it is, without needing the bot's final step. And turning it into alt text should take next to no effort.

@orange_lux @malusdraco @micr0 @anantagd I'm into the idea of having the AI recognition first, because that would produce more specific questions than a really general "what is this about?" question, which I find too hard to answer. I just freeze, so that would be as hard as having no help at all.

Keeping the image recognition first, then asking questions, and then having the AI produce the text would be great for me.

@anantagd @micr0 @nelson @malusdraco I'm not blind, so take my words with a pinch of salt.

I think your suggestion is moving in the right direction. You have to suppress the 'fire and forget' mentality when using the bot.

I follow some blind people on the Fediverse and I've heard some of them complain about the auto-generated alt descriptions, just like the person in the original post.

@malusdraco @anantagd @micr0 @nelson This would mean AltBot could require no LLM at all. Make it instead ask "What is this a picture of? Why is it important?", then provide the answers back to the user compiled into something they can paste into the alt text field.

"What is this a picture of?"

"A beagle"

"Why is it important?"

"She is my dog. She is being cute playing with a tennis ball."

"A beagle. She is my dog. She is being cute playing with a tennis ball."

Simple questions about the picture, kind of like StreetComplete quests.
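A minimal sketch of that no-LLM flow, in Python. The `QUESTIONS` list and the `compile_alt_text` and `ask_questions` functions are hypothetical names for illustration, not part of AltBot; the compile step only tidies punctuation and joins the answers in order, which reproduces the beagle example.

```python
# Hypothetical sketch: no LLM, just fixed questions whose answers are joined.
QUESTIONS = [
    "What is this a picture of?",
    "Why is it important?",
]


def compile_alt_text(answers: list[str]) -> str:
    """Join the poster's own answers into one alt text string.

    Each answer is stripped of surrounding whitespace and given a closing
    period if it has no terminal punctuation. Empty answers are skipped.
    """
    parts = []
    for answer in answers:
        text = answer.strip()
        if not text:
            continue  # the poster skipped this question
        if text[-1] not in ".!?":
            text += "."
        parts.append(text)
    return " ".join(parts)


def ask_questions() -> str:
    """Interactive version: prompt for each question on the terminal."""
    answers = [input(question + " ") for question in QUESTIONS]
    return compile_alt_text(answers)
```

For example, `compile_alt_text(["A beagle", "She is my dog. She is being cute playing with a tennis ball."])` returns `"A beagle. She is my dog. She is being cute playing with a tennis ball."`. Because nothing is generated, every word in the result comes from the person posting the image.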

@jackemled @malusdraco @anantagd @micr0 @nelson No, I mean, I'm short-sighted, but my myopia doesn't play a role here, since we are talking about describing digital images I'd see on my phone or computer, and I can see those even without glasses.

My problem is not my sight but language processing: the ability to express myself, to put ideas in the linear order that writing requires. If it's a video, I'm quite bad at figuring out what happens when sudden movements occur. I'm bad at following balls, for instance. My difficulties are not caused by my sight but by being neurodivergent.

I usually just avoid publishing pictures, but when writing gets harder, sometimes a picture may be the easiest or the only way to express myself. If I'm required to put into writing what I meant with the picture, without any tool to help me, at a time when writing is harder, then my ability to express myself vanishes. If I post with no alt text, I'll be judged by neurotypicals who brag about how easy alt text is to write far more than they actually add it for other people; and if I use tools to help write it, I'll be judged for that instead.

When I ask for guidance on writing alt text, I usually just get very vague responses that are very hard for me to understand, like "just do it", "just write what's important", and so on.

Fortunately, I've just read a good resource on how to write good alt text, shared in this thread. It says to describe the object, the action, and the context. Keeping that in mind is a good start whenever I need any kind of descriptive text. It had some extra advice and examples, but that's where it gets even harder for me: the summarizing part.

So thanks again to the person who shared that; I don't remember their handle now.

@sinmisterios @malusdraco @anantagd @micr0 @nelson That makes sense.

The problem with AI-generated alt text is that it wastes massive amounts of time for people using screenreaders & wastes some time for people who don't but still need alt text. You can't put what you mean into words; the AI can't either (because it can't mean anything), but it creates words anyway. When AI generates text, it's often very wrong & doesn't convey meaning, wasting time instead. You posting an image without alt text is less harmful than posting something produced by AI, because a blind person or someone with a bad Internet connection can easily ignore it, or bookmark it to look at later if alt text is added. You can also use tags like Alt4Me for people who enjoy writing alt text to help, but I don't know how common that is.

Don't worry too much about summarizing. If you can't summarize, the worst that can happen is it takes a little longer to get the point across. Alt text takes practice too, so don't get upset if it's not perfect!

@jackemled @malusdraco @anantagd @micr0 @nelson I don't usually use AI, because I'm aware of its water and energy consumption, and it's usually misused for tasks other tools would do better; for simple questions, a search engine gives you real answers, not made-up ones. But using AI helps me be able to write the alt text. I know it sometimes gives too much detail about things that don't matter, but it's a good start for someone who finds writing that hard. The times I've used it, I've noticed it's quite good at identifying objects. After all, large language models are good at identifying patterns. I know they can't really identify intentions beyond repeating text patterns, but it can be a good start for putting my motivations into words: sometimes an example of possible motivations helps me explain mine better.

About the summarizing part, I think people don't see the problem because reading a very long toot doesn't take them that much time. But it may take me hours, in and out of the app, to finally finish a toot. In the meantime, the conversation has moved on, etc.

I find it quite hard to get people to understand this difficulty I have with writing and describing.

I am aware of the hashtag #ALTforMe, but non-disabled people usually judge me quite harshly for using it. The Spanish-speaking blind community has been much nicer to me. I guess the hostility I've perceived many times has made me reluctant to use it. Instead of asking for help, I just repress my desire or need to post images, spend an absurd amount of time writing, or write a very vague alt text, because it feels like a difficulty nobody even believes exists, so I don't feel like I would get the help I need. Also, it's not that I'm blind and need somebody to write alt text for me in order to get the information. I need somebody to write alt text for me in order to get out some of the things inside me, but I know that's not a generally accepted need to ask for help with. People say we're lazy for not writing alt text because it takes them a minute or two, but they don't know how long it takes me or how tired it leaves me. So there's some internalized ableism, but it's based on reality :/

@sinmisterios alt text doesn't need to be a full description of non-text content. Anyone expecting that level of skilled writing from everyone all the time is being unfair, maybe out of a fear of missing out.

The standard "Write it like you'd tell a friend" is good advice but comes with assumptions that aren't always true.

There's a hashtag called AltText4Me that requests alt text when you're unable to provide your own. The alt text from the reply image, which may be tagged AltText4You, can be copied and pasted into your image when you have the energy and your app allows it.

@micr0 @nelson I like the idea of making the bot a guide for writing alt text, with an old-fashioned OCR feature. That would be useful (I'd use it then) without contributing to the AI problems.

This whole discussion is beautiful & heartwarming.

I've seen the snark multiple times about Mastodon users who say "won't boost without alt text". And I'm curious: do YOU folks see that as equivalent to the right-wing anti-woke BS, like I do?

I mean... I find it mind-boggling that people who claim to be on the left, anti-bigotry, etc., would misinterpret our gentle pressure to not be ableist as Fedi-Fascism. I want to literally poke them in the eyes. Except figuratively.

@leftyknowitall @anantagd @micr0 @nelson

Yah, I found it rather off-putting.

So odd to defend many marginalized groups, but then choose one to still exclude because they can’t be bothered. Hrmph.

@anantagd @micr0 @nelson Although this is obviously an invisible process to most of you, altbot has been a useful tool for me. Many images require long and sometimes difficult descriptions, especially when they have to be written in English, which is not my first language. Because of that, I often use altbot to generate an initial draft of the image description. I then read what altbot produced and edit it.

In some cases, the text generated by altbot needs only minor edits, as it already says pretty much what a human would say about the image. In others, it needs to be heavily rewritten. But overall, altbot makes the task of creating accessible descriptions much easier.

I felt it was important to say this because I would hate to see the tool discontinued due to some lazy users. In fact, I would argue that people who do not care enough to review and edit altbot’s output before posting would probably go back to sharing images without any description at all if the tool did not exist.

Anyway, that’s all I wanted to say. Just my two cents in this discussion.

And speaking of ignoring the frustrations of the blind and less-sighted people around them . . . check this out:

@log

> The altbot is better than nothing at all

Hey, so it says earlier in the thread that the generated alt text is overly verbose and misses the point to a degree where it's worth blocking accounts that regularly post generated alts.

That seems to contradict "better than nothing". Going out of one's way to block a pile of accounts kinda suggests that the "nothing" is actually better.

@log

https://ieji.de/@anantagd/115804884795795222

The bit about having to listen to the whole long winded generated text sure sounds like a statement that those texts are "worse than nothing" to me, especially when the reaction is a block.


Say you buy a new product. It's complicated, and you need to read the manual. How do you feel if you find out that the company has given the product blueprints to an LLM and had it write the manual? Do you feel like a valued customer? Do you think the manual is likely to be a good guide for a human using that product? Do you trust that the manual will not suggest ways of using the product that are harmful or dangerous? Is that manual genuinely better than nothing?

As a disabled person, I try to be sympathetic to non-disabled people who assume that some half-assed attempt at accessibility is "better than nothing". But it's hard. Because accessibility done badly is really often much worse than nothing at all would be. LLMs are incapable of any concern for accuracy, cultural sensitivity, human emotional or physical safety, etc. So the alt-text they generate is potentially inaccurate, biased, and (in extreme cases) dangerous.

@anantagd @micr0 @nelson I think for me, I'm constantly worried that my alt text is insufficient and doesn't convey what I'm seeing to someone who can't see it, and as a result I often balk, feeling intimidated by the responsibility I'm facing.

I'm going to take your feedback into consideration, that some text is better than no text. Though, I'm still concerned that if I impose some sort of unintended bias or incorrect interpretation, non-seeing folks may be disadvantaged by my text.

@chad

Maybe this could help? I found the different examples very clarifying: https://uxdesign.cc/how-to-write-an-image-description-2f30d3bf5546

@chad @anantagd @micr0 @nelson

They *will* be disadvantaged, but they will be disadvantaged *less* than if you had not written anything at all. (And they will be *even* more disadvantaged if you don't post at all!)

Better post w/o alt text than not post at all.

Better post w/ lazy alt text than post without alt text.

Better post w/ good alt text than post with lazy alt text.

And here "lazy" still means you've made an effort instead of using a bot!

The thing about self-written alt: Even if you miss most of what would be relevant, it still tells the viewer SOMETHING about what *you* see in that image. If you decided to write it, it is guaranteed to be at least part of the relevant information the person would like to see, because you do know why you published the image. Even just "a beautiful scenery" is a lil bit useful: It does hint at *why* you sent the image!

Sometimes, as a seeing person, I read the alt-text because it's hard to understand why someone posted the image. The actual alt-text might tell you why it was posted, or point out details you would never have noticed.

Should the alt-text be a replacement for the actual post or should I write more of the post in the alt-text, using that as extra character limit?

Good example with the ladies, but that image would probably not help a seeing person either without good alt-text.
