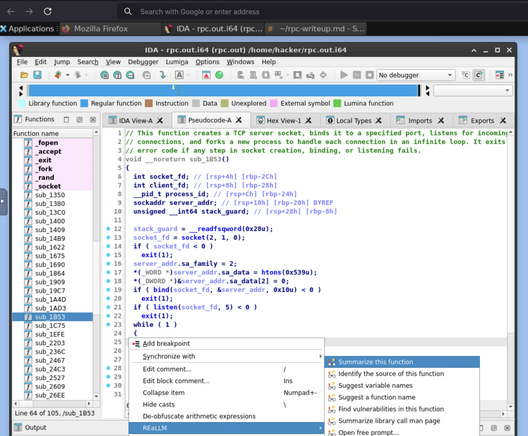

Do LLMs actually help hackers reverse engineer and understand the software they want to exploit?

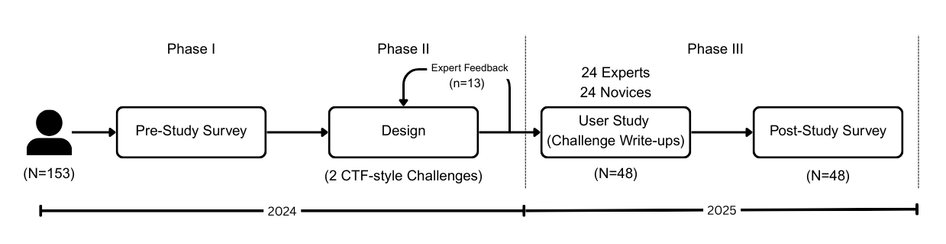

We ran the first fine-grained human study of LLMs + reverse engineering.

To appear at NDSS 2026.

Interested? Some quick findings in 🧵👇

Paper: https://www.zionbasque.com/files/papers/dec-synergy-study.pdf