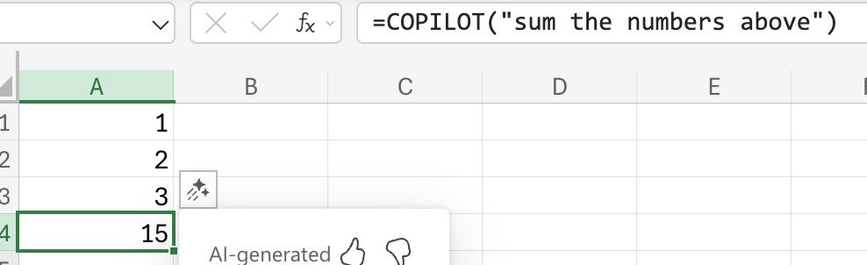

If you want to sum numbers, use the sum function, not one that predicts the next most probable token. I dislike this type of bashing of LLMs because it's trivial to dismiss (ok, they can't do trivial maths, but they can write an entire piece of software for me). There are much riskier outputs that could be used as an example. Funky Excel formulas have always existed...

They are writing complete software for you in the same way they sum numbers. The only difference is that with the summation you can easily spot that the result is wrong.

What really baffles me, though, is that MS apparently lets Copilot "calculate" stuff instead of simply generating the Excel formula that is needed here.
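To make that split concrete, here is a minimal sketch in Python, under stated assumptions: the model's only job would be to emit the formula text, and a deterministic evaluator does the arithmetic. The names `column_range_formula` and `evaluate_sum_formula` and the sample cells are hypothetical and written just for illustration; this is not how Copilot or Excel is actually implemented.

```python
import re

def column_range_formula(column: str, first_row: int, last_row: int) -> str:
    """Build the formula text; this is the only part a language model would need to produce."""
    return f"=SUM({column}{first_row}:{column}{last_row})"

def evaluate_sum_formula(formula: str, cells: dict[str, float]) -> float:
    """Deterministically evaluate a single-column formula such as =SUM(A1:A5)."""
    match = re.fullmatch(r"=SUM\(([A-Z]+)(\d+):([A-Z]+)(\d+)\)", formula)
    if match is None or match.group(1) != match.group(3):
        raise ValueError(f"unsupported formula: {formula}")
    column = match.group(1)
    first_row, last_row = int(match.group(2)), int(match.group(4))
    # Missing cells count as 0 in this toy evaluator.
    return sum(cells.get(f"{column}{row}", 0) for row in range(first_row, last_row + 1))

# Hypothetical sample data: the values 1..5 in cells A1..A5.
cells = {"A1": 1, "A2": 2, "A3": 3, "A4": 4, "A5": 5}
formula = column_range_formula("A", 1, 5)
total = evaluate_sum_formula(formula, cells)
print(formula, total)  # prints "=SUM(A1:A5) 15"
```

The point of the split is that a wrong formula is visible and checkable in the cell, while the arithmetic itself never depends on token prediction.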

@nicolaromano @Kiloku @eb

@reinouts @nicolaromano @Kiloku @eb

The explanation for the bafflement is: there is no revenue in LLMs, but there's an outrageous bubble of investment, particularly also by Microsoft. So LLMs get crammed into any product, useful or not, in order to eventually upsell you.

Instead you get an LLM that tries to guess relations in a table - and indeed one can be happy that the answer isn't "Wednesday". But that doesn't make it useful.

All that may be true, but still. They can and do already write code with LLMs. What is an Excel formula, if not code?

@nicolaromano @Kiloku @eb

@reinouts @nicolaromano @Kiloku @eb

They _don't_ write code. They statistically assemble code snippets from what they were trained on, given a certain prompt. An LLM has no understanding of "variable" or "function".

And an Excel table is basically a lost cause, because Copilot isn't doing a sum, it's trying to predict an outcome based on training, and there simply weren't many cases where the answer was "15" given that prompt. An LLM can't compute a sum.

@reinouts @nicolaromano @Kiloku @eb

Sure, because that's what a gazillion examples described beforehand. But it can't make a sum, because it doesn't know what that is.

It's like you asking me "please draw me an airplane" vs. you asking me "please fly this airplane".

Have a nice day 😀

@reinouts @nicolaromano @Kiloku @eb

Ah, I see what your actual point was: not why Copilot cannot do the work, but rather why MS attempts to let Copilot do the work, instead of either letting Excel do the calculation or at least letting Copilot write the Excel formula and then having Excel do the calculation.

That again comes back to "we have to package AI into everything because no-one wants to buy it"...

And: have a nice day as well!