My students are often surprised to learn that LLMs aren’t answering their questions. Rather, an LLM answers the question “what would a reply to this look like?” It’s one of the first things I explain in the “Should I use LLMs?” portion of my syllabus.

Welp, posted this late last night then logged in and found it’d been around the world and back.

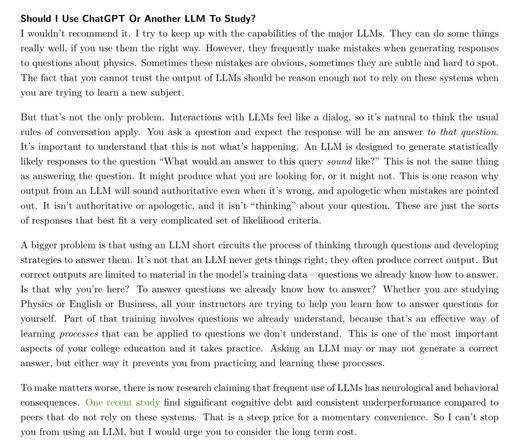

For everyone who asked, here’s the full section from the syllabus.

@Robert McNees everybody should try to ask some LLM about topics you know really well. and then go through the LLM's answers and check them. my personal experience (repeatedly) is: the answers are always wrong. wrong by omission of facts or wrong by hallucinations.

you cannot learn from these answers. LLMs are not Wikipedia, not even remotely. you have to check the LLM's answers, and therefore you should know everything about the relevant topics beforehand. and then you wouldn't need an LLM as an information source.

@jabgoe2089 @mcnees

And the LLM's answers are not only not Wikipedia, etc., but they are also stripped of context, since the sources are opaque.

There is no way to critically evaluate the information source (the way one used to be able to evaluate internet sources from a Google search).

^^ this point is from "The AI Con" by @emilymbender & Alex Hanna

@KarenCampe @jabgoe2089 @mcnees @emilymbender

These observations are why I always add, "include citations" so I can go check them.