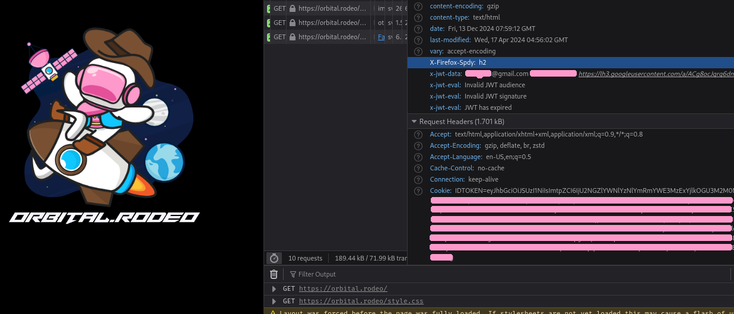

i want to build a thing that lets me restrict access to some pages on a mostly static site, mostly with haproxy, using oauth like AoC does

i think if i make a cgi thing that handles the backend POST to retrieve the tokens, i can make the server stick the returned id_token in the browser cookies and do it with zero state on the backend and zero javascript on the frontend...
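
rough sketch of what that cgi callback could look like, in python with only the stdlib. everything named here is a placeholder i made up for illustration (`TOKEN_URL`, `CLIENT_ID`, `REDIRECT_URI`, the `OAUTH_CLIENT_SECRET` env var, the `id_token` cookie name), and it assumes the provider does a standard authorization-code exchange that returns json with an `id_token` field. it also skips the `state` check and does no signature verification, so it's a starting point, not a finished thing:

```python
#!/usr/bin/env python3
# sketch of a stateless oauth callback cgi script.
# all urls, ids and cookie names below are hypothetical placeholders.
import json
import os
import sys
import urllib.parse
import urllib.request

TOKEN_URL = "https://provider.example/oauth/token"        # assumed provider endpoint
CLIENT_ID = "my-client-id"                                # assumed client id
CLIENT_SECRET = os.environ.get("OAUTH_CLIENT_SECRET", "")  # assumed env var
REDIRECT_URI = "https://site.example/cgi-bin/callback"    # assumed callback url


def build_token_request(code):
    """Build the backend POST that trades the authorization code for tokens."""
    body = urllib.parse.urlencode({
        "grant_type": "authorization_code",
        "code": code,
        "redirect_uri": REDIRECT_URI,
        "client_id": CLIENT_ID,
        "client_secret": CLIENT_SECRET,
    }).encode("ascii")
    return urllib.request.Request(
        TOKEN_URL,
        data=body,
        headers={"Accept": "application/json"},
        method="POST",
    )


def build_cgi_response(id_token, location="/"):
    """Build CGI headers that set the cookie and redirect back to the site."""
    cookie = f"id_token={id_token}; Path=/; Secure; HttpOnly; SameSite=Lax"
    return (
        "Status: 302 Found\r\n"
        f"Location: {location}\r\n"
        f"Set-Cookie: {cookie}\r\n"
        "\r\n"
    )


def main():
    # the provider redirects the browser here with ?code=... in the query string
    query = urllib.parse.parse_qs(os.environ.get("QUERY_STRING", ""))
    code = query.get("code", [""])[0]
    if not code:
        sys.stdout.write(
            "Status: 400 Bad Request\r\n"
            "Content-Type: text/plain\r\n\r\n"
            "missing code\n"
        )
        return
    with urllib.request.urlopen(build_token_request(code), timeout=10) as resp:
        tokens = json.load(resp)
    # nothing is stored server-side; the token lives only in the browser cookie
    sys.stdout.write(build_cgi_response(tokens["id_token"]))


if __name__ == "__main__":
    main()
```

the backend stays stateless because the only thing it does is the exchange and the `Set-Cookie`. the catch is that something in front of the protected pages still has to check the token's signature and expiry on every request, otherwise anyone can put an arbitrary string in that cookie.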