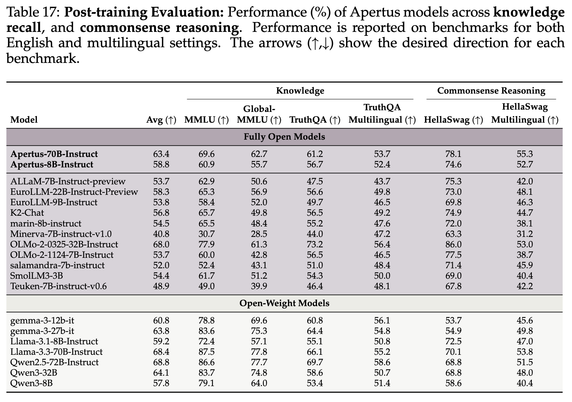

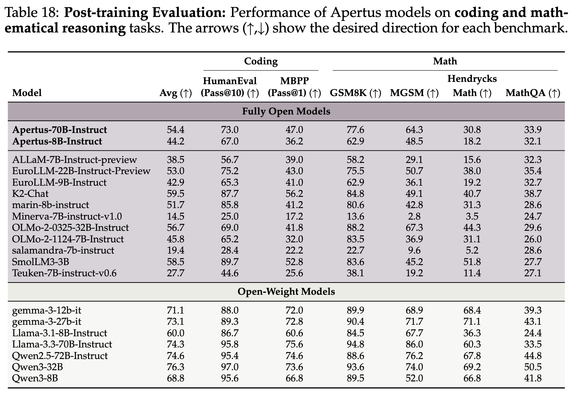

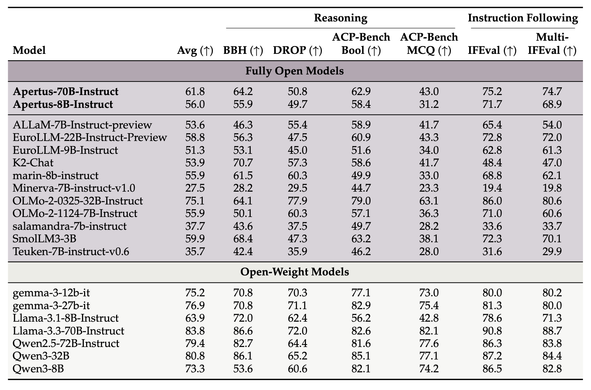

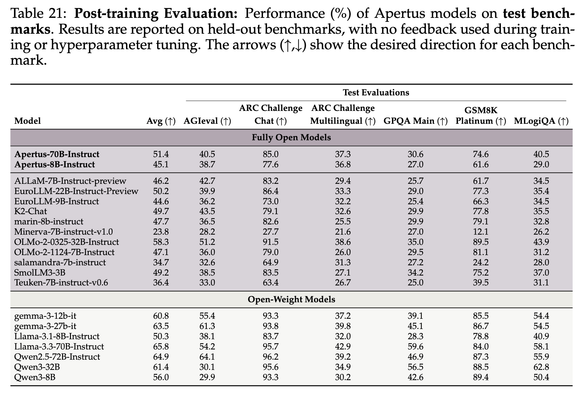

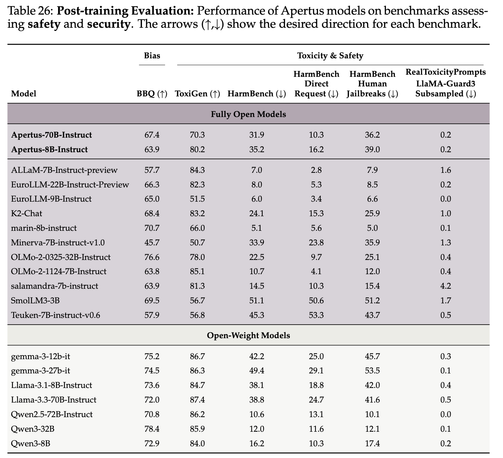

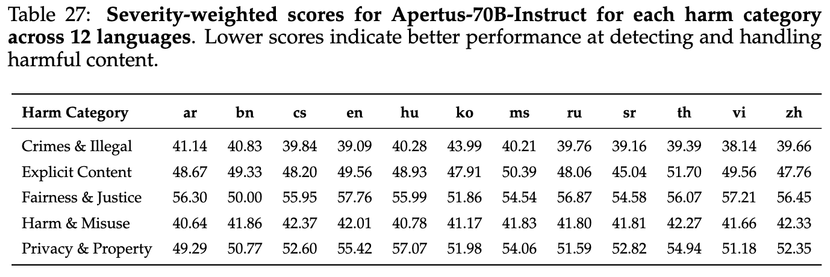

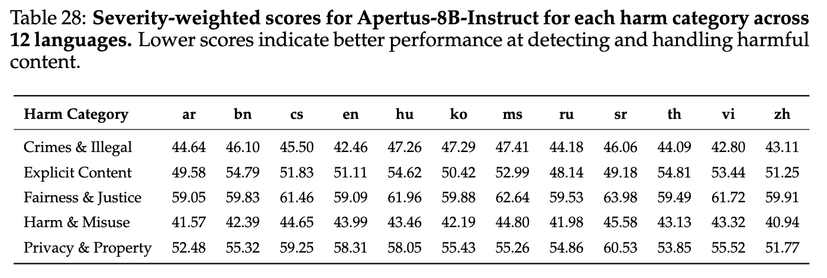

#Apertus is out.

Fully open, multilingual, privacy-first SotA 8B and 70B LLMs from the Swiss AI Initiative, an academic consortium led by @EPFL, ETHZ, and CSCS. Released under Apache 2.0.

You can:

- Try it here: https://publicai.co/

- Run it locally with HF Transformers: https://huggingface.co/swiss-ai/Apertus-70B-Instruct-2509

- Read the technical report: https://github.com/swiss-ai/apertus-tech-report/blob/main/Apertus_Tech_Report.pdf
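For the local route, a minimal sketch of what loading the instruct model with Transformers might look like. It assumes a recent `transformers` release that supports the Apertus architecture, plus `torch` and `accelerate` installed and enough GPU memory for the 70B weights. The helper names `build_messages` and `generate` are illustrative, not part of the release; the model card is the authoritative reference.

```python
# Repo id taken from the Hugging Face link above.
MODEL_ID = "swiss-ai/Apertus-70B-Instruct-2509"


def build_messages(prompt: str) -> list:
    """Wrap a user prompt in the chat-message format used by chat templates."""
    return [{"role": "user", "content": prompt}]


def generate(prompt: str, model_id: str = MODEL_ID, max_new_tokens: int = 256) -> str:
    """Load the model and return its reply to a single user prompt (hypothetical helper)."""
    # Heavy dependencies are imported lazily so the module can be imported without them.
    import torch
    from transformers import AutoModelForCausalLM, AutoTokenizer

    tokenizer = AutoTokenizer.from_pretrained(model_id)
    model = AutoModelForCausalLM.from_pretrained(
        model_id,
        torch_dtype=torch.bfloat16,  # assumption: bf16 weights fit on your hardware
        device_map="auto",           # assumption: accelerate is installed
    )

    # Apply the model's chat template and tokenize in one step.
    inputs = tokenizer.apply_chat_template(
        build_messages(prompt),
        add_generation_prompt=True,
        return_tensors="pt",
        return_dict=True,
    ).to(model.device)

    output = model.generate(**inputs, max_new_tokens=max_new_tokens)

    # Keep only the newly generated tokens, dropping the echoed prompt.
    new_tokens = output[0][inputs["input_ids"].shape[-1]:]
    return tokenizer.decode(new_tokens, skip_special_tokens=True)
```

Calling `generate("Give me a short introduction to Switzerland.")` downloads the weights on first use, so expect a long initial wait. Passing a smaller checkpoint through `model_id` is an easy way to try the same code on modest hardware.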

1/🧵