@lilithsaintcrow @cstross Do you mean religions?

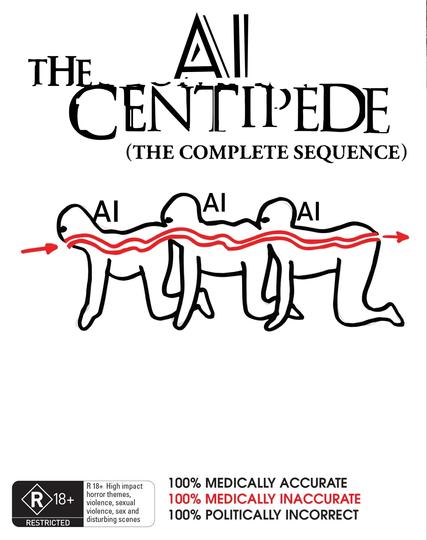

@thorne @lilithsaintcrow No. It's something we've been discussing: if LLMs are trained on data containing lots of LLM outputs, you get "model collapse": their accuracy degrades rapidly. The web these days is full of AI slop (i.e. LLM outputs), which the AI corps are slurping up indiscriminately. It's like mad cow disease: eating your species' brains is NEVER a good idea, and the AI slop acts on the LLMs exactly like a prion disease.

@cstross @thorne @lilithsaintcrow

GIGO over and over and over