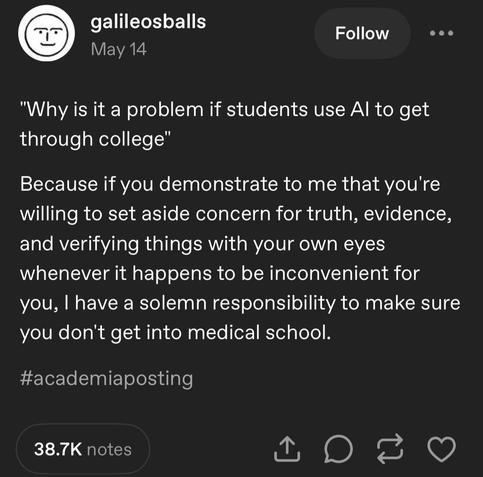

Critical thinking

trust but verify

The thing is that an LLM is a professional bullshitter. It is actually trained to produce text that can fool an ordinary person into thinking that it was produced by a human. The facts come second.

I don’t trust LLMs for anything based on facts or complex reasoning. I’m a lawyer and any time I try asking an LLM a legal question, I get an answer ranging from “technically wrong/incomplete, but I can see how you got there” to “absolute fabrication.”

I actually think the best current use for LLMs is for itinerary planning and organizing thoughts. They’re pretty good at creating coherent, logical schedules based on sets of simple criteria as well as making communications more succinct (although still not perfect).

I’d say it’s good at things you don’t need to be good at.

For assignments I’m consciously half-assing, or readings I don’t have the time to thoroughly examine, sure, it’s perfect.

Sadly, the best use case for LLMs is pretending to be human on social media and influencing people’s opinions.

Musk accidentally showed that’s what they are actually using AI for, by having Grok inject disinformation about South Africa.

Can you try again using an LLM search engine like perplexity.ai?

Then just click on the link next to the information so you can check where it got that info from?

LLMs aren’t to be trusted, but that was never the point of them.