the talk went great and was recorded, video coming soon!

(yes, I am the very specific kind of weirdo who uses Blender as a video editor)

hello this talk is online now and you can watch it

https://www.youtube.com/watch?v=UxGxsGnbyJ4

(it's pretty good IMHO)

Implicit Surfaces & Independent Research

@mjk this is such an excellent talk! Love the parallels with tracing jits (the interval evaluation is a lot like PyPy's forward optimization pass, too, except that we focus mainly on integer and heap operations), and the reflections on independent research are great.

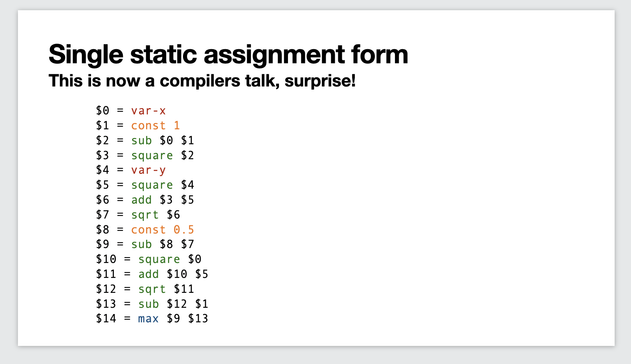

Do you do any other compiler optimizations on the linear operation sequences? Common subexpression elimination, strength reduction, stuff like that? I should just read the paper, I suppose.

@cfbolz Thanks, I appreciate the kind words!

Right now, I'm doing compiler-like optimizations on the math trees (CSE, constant folding, removing arithmetic identities), but not during expression simplification. Expression simplification only does live/dead pruning of clauses, without altering them (except that MIN/MAX can become COPY).
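
For readers unfamiliar with the tree-level optimizations mentioned above, here is a minimal Python sketch of CSE (via hash-consing), constant folding, and removal of arithmetic identities. It is an illustration only, not the author's implementation; `Builder` and all of its methods are hypothetical names.

```python
class Builder:
    """Hypothetical expression builder. Interning nodes gives CSE for free."""

    def __init__(self):
        self.nodes = []   # node id -> (op, args...)
        self.index = {}   # (op, args...) -> node id

    def _intern(self, key):
        # Identical subexpressions map to the same node id (CSE).
        if key not in self.index:
            self.index[key] = len(self.nodes)
            self.nodes.append(key)
        return self.index[key]

    def const(self, value):
        return self._intern(("const", float(value)))

    def var(self, name):
        return self._intern(("var", name))

    def _const_value(self, node):
        op, *args = self.nodes[node]
        return args[0] if op == "const" else None

    def add(self, a, b):
        ca, cb = self._const_value(a), self._const_value(b)
        if ca is not None and cb is not None:
            return self.const(ca + cb)   # constant folding
        if ca == 0.0:
            return b                     # identity: 0 + b = b
        if cb == 0.0:
            return a                     # identity: a + 0 = a
        # Addition is commutative, so store the arguments in a canonical order.
        return self._intern(("add", *sorted((a, b))))

    def mul(self, a, b):
        ca, cb = self._const_value(a), self._const_value(b)
        if ca is not None and cb is not None:
            return self.const(ca * cb)   # constant folding
        if ca == 1.0:
            return b                     # identity: 1 * b = b
        if cb == 1.0:
            return a                     # identity: a * 1 = a
        return self._intern(("mul", *sorted((a, b))))


b = Builder()
x = b.var("x")
shared = b.add(x, b.const(1))
again = b.add(b.const(1), x)   # same node id as `shared`
```

The sketch deliberately skips `x * 0 = 0`, which is not safe for floating-point inputs that may be NaN or infinite.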

This isn't due to strong opinions, just that I haven't checked to see if the tradeoffs would be worth it!

@mjk turning min/max into copy could unlock further optimizations though, right? e.g. x - max(x, 2) could be turned into 0 whenever x > 2.
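
A small Python sketch of that idea, assuming a toy SSA-style tape where instruction `i` defines value `i`. The tape format and the `simplify` function are made up for illustration and do not reflect either project's real data structures.

```python
def simplify(tape, x_range):
    """Single forward pass: interval analysis plus simplification.

    tape: list of ("var", name), ("const", c), ("max", a, b) or ("sub", a, b).
    x_range: (lo, hi) bounds for the single input variable.
    """
    intervals, alias, out = [], [], []
    for i, ins in enumerate(tape):
        op, target = ins[0], i
        if op == "var":
            iv = x_range
        elif op == "const":
            iv = (ins[1], ins[1])
        else:
            # Look through earlier copies so we compare the real sources.
            a, b = alias[ins[1]], alias[ins[2]]
            (alo, ahi), (blo, bhi) = intervals[a], intervals[b]
            if op == "max":
                ins, iv = ("max", a, b), (max(alo, blo), max(ahi, bhi))
                if alo > bhi:
                    target = a            # a always wins: max becomes a copy
                elif blo > ahi:
                    target = b            # b always wins
            elif op == "sub":
                if a == b:
                    # v - v = 0 (assumes v is finite; not true for NaN or inf)
                    ins, iv = ("const", 0.0), (0.0, 0.0)
                else:
                    ins, iv = ("sub", a, b), (alo - bhi, ahi - blo)
            else:
                raise ValueError(f"unknown op: {op}")
        alias.append(target)
        intervals.append(intervals[target] if target != i else iv)
        out.append(("copy", target) if target != i else ins)
    return out


# x - max(x, 2)
tape = [("var", "x"), ("const", 2.0), ("max", 0, 1), ("sub", 0, 2)]
narrow = simplify(tape, (3.0, 5.0))   # x is always > 2 in this region
wide = simplify(tape, (0.0, 5.0))     # max is ambiguous in this region
```

In the narrow region the `max` becomes a copy of `x`, so the subtraction sees the same value on both sides and folds to a constant. In the wide region nothing can be simplified.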

do I get it right that you do two passes over the code, one forward to perform interval analysis, and then a backwards one for register allocation + simplification? in pypy we do the interval analysis and simplification together in a single forward pass, then do liveness in a backwards pass.

anyway, it's all really fun. I'm very tempted to play with it at some point.

@cfbolz yup, your understanding is correct 😄
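
As a rough picture of the two-pass structure described above, here is a Python sketch: a forward interval pass that records which side of each MIN wins, then a backward pass that turns decided MINs into COPY and drops dead clauses. Register allocation is left out, and the tape format and function names are assumptions made for this example, not the real implementation.

```python
def forward_intervals(tape, inputs):
    """Forward pass: one interval per instruction, plus decided min choices."""
    iv, choice = [], {}
    for i, (op, *args) in enumerate(tape):
        if op == "var":
            iv.append(inputs[args[0]])
        elif op == "const":
            iv.append((args[0], args[0]))
        elif op == "add":
            (alo, ahi), (blo, bhi) = iv[args[0]], iv[args[1]]
            iv.append((alo + blo, ahi + bhi))
        elif op == "min":
            (alo, ahi), (blo, bhi) = iv[args[0]], iv[args[1]]
            iv.append((min(alo, blo), min(ahi, bhi)))
            if ahi < blo:
                choice[i] = args[0]   # left side is always smaller
            elif bhi < alo:
                choice[i] = args[1]   # right side is always smaller
        else:
            raise ValueError(f"unknown op: {op}")
    return iv, choice


def backward_prune(tape, choice):
    """Backward pass: keep only what the final instruction still needs."""
    live = {len(tape) - 1}
    kept = {}
    for i in range(len(tape) - 1, -1, -1):
        if i not in live:
            continue                  # dead clause: never emitted
        op, *args = tape[i]
        if i in choice:
            kept[i] = ("copy", choice[i])
            live.add(choice[i])       # only the winning side stays live
        else:
            kept[i] = tape[i]
            if op not in ("var", "const"):
                live.update(args)
    return [(i, kept[i]) for i in sorted(kept)]


# min(x + 1, y + 10) with x and y both in [0, 1]
tape = [
    ("var", "x"), ("var", "y"), ("const", 1.0), ("add", 0, 2),
    ("const", 10.0), ("add", 1, 4), ("min", 3, 5),
]
iv, choice = forward_intervals(tape, {"x": (0.0, 1.0), "y": (0.0, 1.0)})
pruned = backward_prune(tape, choice)
```

Here the `y + 10` branch can never win, so the pruned tape keeps only the `x + 1` clause and a copy. The clauses themselves are left unaltered, matching the "live/dead pruning" described earlier in the thread.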

There's definitely room for further optimization, and I'll think about adding simplification into the forward pass...

(It's tricky for two reasons: (1) since this is in the hot loop of rendering, the cost of computing the optimization has to justify itself with improved evaluation speed downstream, and (2) forward evaluation is mostly chunks of hand-written assembly, so adding additional logic is non-trivial)

@mjk hey, great talk!

One thing I was wondering while watching it is whether someone has investigated using a combined BVH (or voxels) and implicit surface system.

My thinking is that implicit surfaces on their own can get pretty complex to evaluate even when pulling all these tricks, but if you first partition your scene into smaller areas, you could constrain the complexity of your math in each voxel/BVH node.

@mjk I feel this could allow one to build a "Volumetric marching cubes on steroids", where the shape of the volume in each cube is not predefined by the system, but built from a bounded number of math primitives.

This feels like it could allow for a simpler (more brute force) evaluation that is still fast enough for real-time use?

Check out the famous "Learning from Failure" talk about Dreams:

https://www.youtube.com/watch?v=u9KNtnCZDMI

They're using a smaller set of well-behaved operations ("edits") instead of arbitrary math, but a ton of work goes into building spatial acceleration data structures!

Alex Evans at Umbra Ignite 2015: Learning From Failure

@mjk

Yup, watched it when it came out, great talk.

(Oh God it's been 10 years since then 🫠)

I didn't make the connection with Dreams, but "ops = edits" is a good point. I'll keep thinking about this. It feels like there's untapped potential here for nice scene representation techniques.

Dreams is an amazing piece of technology. It is really sad that it is locked behind a platform and no longer supported.

@mjk This talk is a pretty happy discovery for me! Good fit for my pet problems :)

Super well put too, ty! 💜