Speaking as a Fancy Computer Science Professor at a Fancy Institution of Higher Education who teaches the course on Programming Languages:

I endorse @vkc’s position here 100%.

HTML is programming.

Veronica Explains (@vkc)

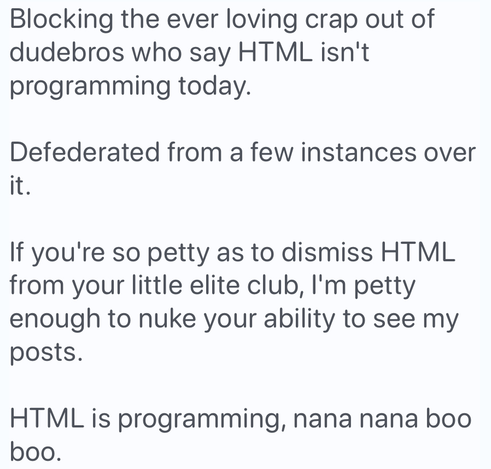

Blocking the ever loving crap out of dudebros who say HTML isn't programming today. Defederated from a few instances over it. If you're so petty as to dismiss HTML from your little elite club, I'm petty enough to nuke your ability to see my posts. HTML is programming, nana nana boo boo.