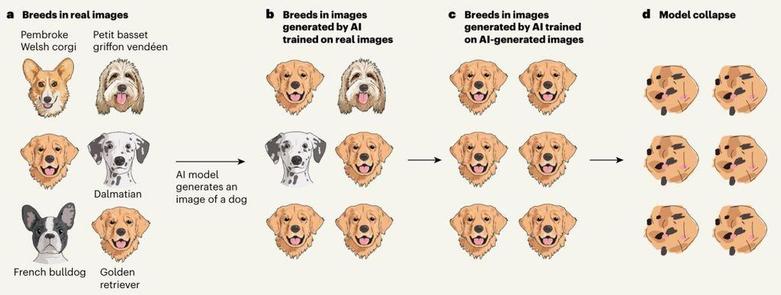

This is a great illustration of model collapse: AI models trained on AI-generated data get worse over time. That is a real challenge, because most companies training large models are running out of data (https://observer.com/2024/07/ai-training-data-crisis/) and increasingly rely on hybrid sets of original and synthetic data.
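To get a feel for the effect, here is a minimal toy sketch. It is an illustration only, not a reproduction of the experiments in the Nature article linked below. The "model" is just a Gaussian fitted to a handful of samples, and every new generation is trained only on samples drawn from the previous fit. The function name `simulate_collapse` and its parameters (`generations`, `sample_size`, `seed`) are made up for this example.

```python
import random
import statistics


def simulate_collapse(generations=500, sample_size=10, seed=0):
    """Repeatedly fit a Gaussian to samples drawn from the previous fit.

    Returns the sample standard deviation observed at each generation,
    starting with the original ("real") data at index 0.
    """
    rng = random.Random(seed)
    # Generation 0: "real" data from a standard normal distribution.
    data = [rng.gauss(0.0, 1.0) for _ in range(sample_size)]
    spread = [statistics.stdev(data)]
    for _ in range(generations):
        # "Train" a model: estimate mean and standard deviation from the data.
        mu = statistics.fmean(data)
        sigma = statistics.stdev(data)
        # The next generation only ever sees samples generated by that model.
        data = [rng.gauss(mu, sigma) for _ in range(sample_size)]
        spread.append(statistics.stdev(data))
    return spread


if __name__ == "__main__":
    spread = simulate_collapse()
    for generation in (0, 10, 100, 500):
        print(f"generation {generation:>3}: std = {spread[generation]:.3g}")
```

With such a small sample size, the estimation error compounds from one generation to the next and the spread of the data tends to shrink toward zero: the tails of the original distribution are forgotten first. Mixing some original data back in at every generation should slow this down, which is presumably one reason hybrid datasets are attractive.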

Nature article: https://www.nature.com/articles/s41586-024-07566-y