@gvwilson

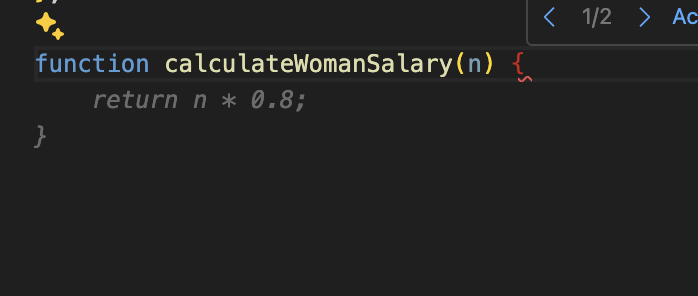

If this is a real pattern, the cause would be interesting to examine! It's unlikely Copilot was trained on a lot of samples like this, which would mean it's transferring sexism from other domains to the programming domain.

Perhaps it's not so much transferring sexism as having ingested a lot of text that talks about the wage gap in percentages. So news highlighting sexism ends up causing sexist code?

@faassen @gvwilson I mean, it's taking literal examples from github.com: https://github.com/CKalitin/ckalitin.github.io/blob/9d5de0a13860032f2c016cdf07fa297be7e29bf9/.post-ideas.md?plain=1#L63-L65

(That's the only one I found with that literal function name, but I'm sure there are others that are close in semantic space)

((this is a place where AI could actually be useful, if I could ask it which examples were semantically close instead of literally close))