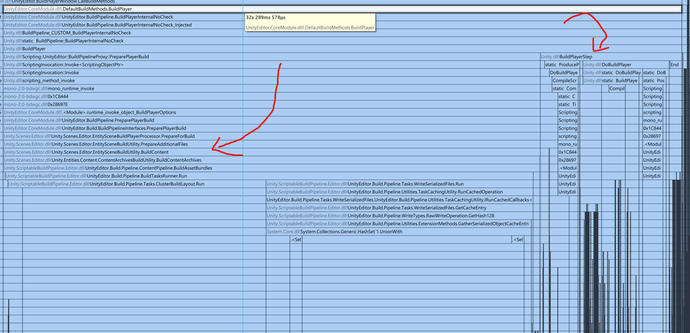

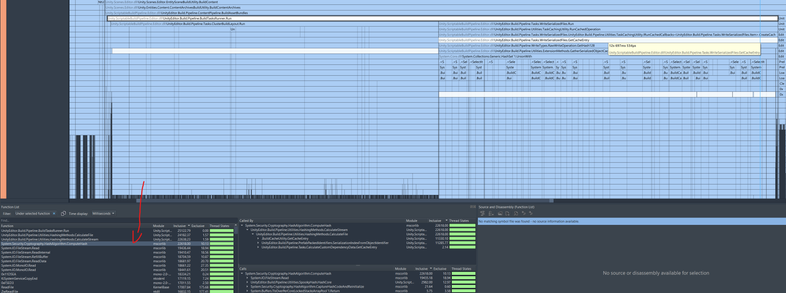

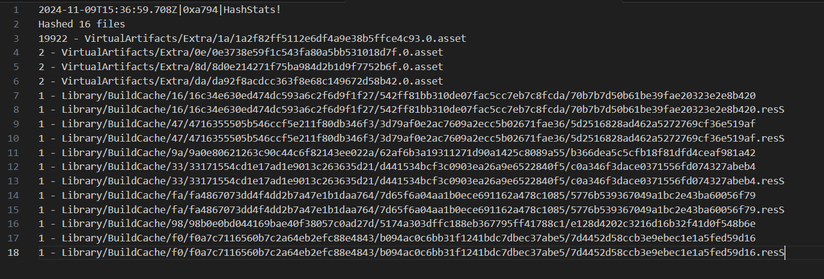

Inspired by some recent work on build times, I was looking at Unity's "MegaCity Metro" sample in Unity 6 and got curious about its build times, in particular for incremental builds (fancy speak for "I pressed the build button repeatedly but only made minimal changes in between").

In my case, the "minimal changes" are actually "no changes at all." I just pressed the button repeatedly, because a lot of what I saw when making minimal changes also shows up without any changes.