My last session of #AoIR2024 was incredible, which is not surprising as it was put together by @qutdmrc's excellent Tariq Chocair. It highlighted multiple practical and normative aspects of using LLMs for text classification in internet research. It was kicked off by the fabulous @AhrabhiKat, speaking about bias observed in LLMs and human coders when dealing with various constructs of marginalisation (including racism, hate speech, and more).

Ahrabhi and team start from the premise that humans disagree on what represents marginalisation in texts because of their positionality (e.g., how often they are targets of racism). This may be seen as a problem when trying to create a gold-standard dataset on these topics, as we would normally want good agreement between human coders, which is supposed to reveal "the ground truth". Ahrabhi suggests that we should instead accept the variance and sacrifice the intercoder reliability score.
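To make that trade-off concrete, here is a minimal sketch, assuming two coders and categorical labels. The function names and example labels are hypothetical and not taken from Ahrabhi's paper. It computes Cohen's kappa (one common intercoder reliability score) and, as an alternative to forcing a single "true" label, keeps the share of coders who chose each label for an item.

```python
from collections import Counter


def cohen_kappa(coder_a, coder_b):
    """Cohen's kappa for two coders who labelled the same items."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("Both coders must label the same non-empty set of items.")
    n = len(coder_a)
    # Share of items on which the two coders gave the same label.
    observed = sum(a == b for a, b in zip(coder_a, coder_b)) / n
    # Agreement expected by chance, from each coder's label frequencies.
    freq_a, freq_b = Counter(coder_a), Counter(coder_b)
    labels = freq_a.keys() | freq_b.keys()
    expected = sum(freq_a[label] * freq_b[label] for label in labels) / (n * n)
    if expected == 1.0:
        # Both coders used one identical label throughout; kappa is undefined,
        # so we report perfect agreement by convention (an assumption).
        return 1.0
    return (observed - expected) / (1 - expected)


def label_distribution(annotations):
    """Keep the disagreement: share of coders choosing each label for one item."""
    counts = Counter(annotations)
    total = len(annotations)
    return {label: count / total for label, count in counts.items()}


# Hypothetical labels for four posts from two coders.
coder_a = ["racist", "racist", "not", "not"]
coder_b = ["racist", "not", "not", "not"]
kappa = cohen_kappa(coder_a, coder_b)

# Hypothetical labels for one post from three coders.
soft_label = label_distribution(["racist", "racist", "not"])
```

A low kappa would normally count against the dataset. Storing something like `soft_label` per item is one way to treat the disagreement as data instead.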

Of course, we are also interested in how LLMs deal with the same task. Ahrabhi's findings suggest that variance, or disagreement on marginalising topics, also exists with LLMs, driven by model selection or the personas that researchers may assign to LLMs. However, in some areas, LLMs are better than human coders at picking up subtle instances of marginalisation. I could write more about this paper as I find it fantastic, but it's better that you read it for yourself: https://osf.io/preprints/socarxiv/agpyr

Next up is Bruna Silveira de Olivera, who reflects on her use of LLMs for identifying complex variables in texts of manosphere podcasts. Bruna's approach allowed her to find targets and perceptions of harm in the false struggles for recognition by the manosphere groups. This success is important because podcasts are long and numerous and, on this specific topic, also toxic for researchers to engage with directly. That is an important benefit of LLMs I had not considered before.

Next, we have Tariq, the panel convenor himself, speaking about the use of LLMs for stance detection in political texts across platforms and languages. This is emergent research, so his suggestions are about building the research pipeline: coding a small sample, discussing the results amongst coders, and iteratively changing prompts and models. Tariq calls for reconsidering the notion of the ground truth as discursively constructed by humans and language models (of all sizes).

Tariq is followed by @hendrik_meyer, who presents an elegant pipeline that combines human codebook construction, LLM coding of only a small sample (to save environmental, financial, and normative costs), and classification of the larger sample with SetFit (a framework leveraging transformers for prompt-free few-shot classification). Find this promising framework and the model that focuses on climate protests here: https://huggingface.co/cbpuschmann/MiniLM-klimacoder_v0.1/blob/main/model_head.pkl

And last but not least, @fabiogiglietto presents his insights on validating the LLM-in-the-loop approach. He and @GiadaM recently had a paper on this - check it out here: https://sociologica.unibo.it/article/view/19524. Some of the provocations Fabio shares in his reflections are as follows. First, LLMs are a multi-purpose (multiverse?) tool, so researchers have to make a lot of choices. Often there are no conceptual guidelines to assist with this (for example, if we do clustering, how small or large should the clusters be?).

In addition (and this is a feeling shared across all presenters), there are tasks at which LLMs may excel over humans (Fabio calls this "the expertise paradox"). For example, LLMs may be able to access a cryptic piece of knowledge about a politician that human coders may not be aware of. Of course, this raises the question of how we can even validate these tools that are seemingly smarter than us (at least in some instances).

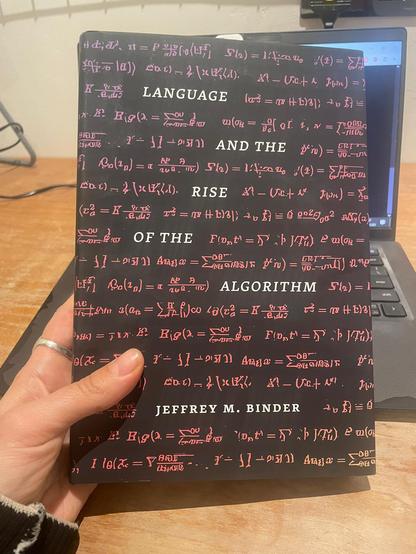

I will be contemplating this paradox as I read this book I got from the kind @josephcalamia from UChicago Press. The book is much needed as I lost the pen for my e-reader (anyone seen it? #AoIR2024), which has reduced my plane entertainment options. But in all seriousness, I am grateful for all the learning - this has been an incredible intellectual experience 🙌

@kkasianenko_at_aoir Thanks a lot for sharing! Was great to meet you and have a nerdy talk next to a bumper car ride 😅

@AhrabhiKat The pleasure was mine, hope the summary did justice to your fantastic paper. Stay in touch!