LinkedIn is now using everyone's content to train its AI tools. They just auto-opted everyone in (except folks in the EU, it seems).

I recommend opting out now (AND that orgs put an end to auto opt-in; it's not cool).

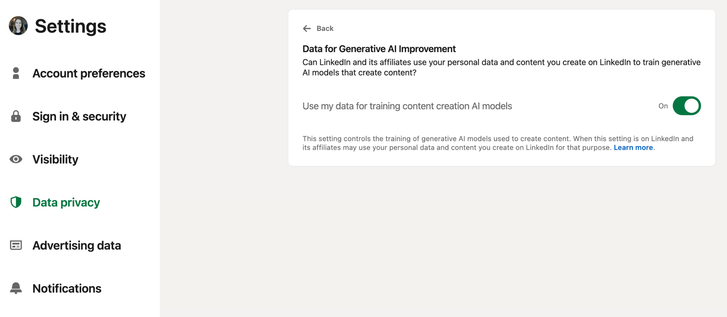

Opt-out steps: Settings and Privacy > Data Privacy > Data for Generative AI Improvement (OFF)

We shouldn't have to take a bunch of steps to undo a choice that a company made for us.

Orgs think they can get away with auto opt-in because "everyone does it".

If we come together and demand that orgs allow us to CHOOSE to opt in, things will hopefully change one day.

LinkedIn seems to have auto-enrolled folks in the US, but I'm hearing from folks in the EU that they don't see this setting at all (likely due to privacy regulations).

If you're outside the US, are you seeing this setting? I'm curious.

Why does opting out of training generative AI models matter? How does it impact folks?

Well, generative AI tools build outputs based on the data they are trained on. They have a hard time synthesizing genuinely new content, so allowing them to train on your original writing, photos, and videos means it's likely that elements of your work will be melted together with other people's content to build AI outputs (without credit to you).

In short, you may find your original writing, photo, and video content “reused” or “rehashed” by AI, and *sometimes AI plagiarizes writing, photos, and video in their entirety*.

Opting out of participating in AI training is a good idea for anyone who creates original content.