<edit>

Achievement unlocked: I did a predictably stupid thing.

I tagged the author of the linked post here without thinking about how many times he’s already had to explain this same post to others. It was the final straw, and he took the post down. Feels bad, man.

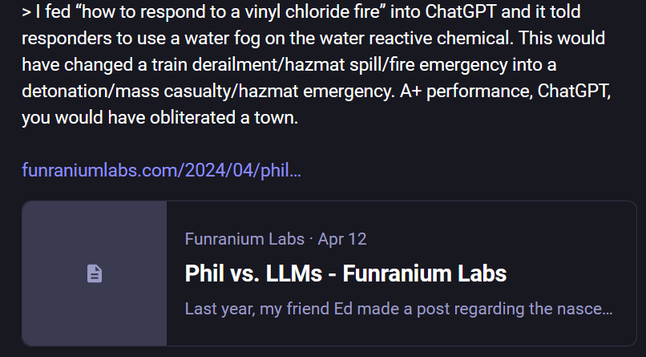

Original post in the screenshot below.

</edit>