Interested in #LLMs for scalable zero-shot text & image annotation and analysis? The Baltic #DH summer school has published its recordings; mine is here: https://www.youtube.com/watch?v=Fm7mJgI0MfU

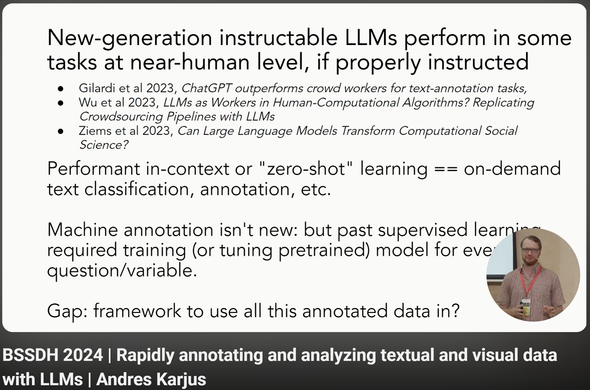

- Intro to LLMs

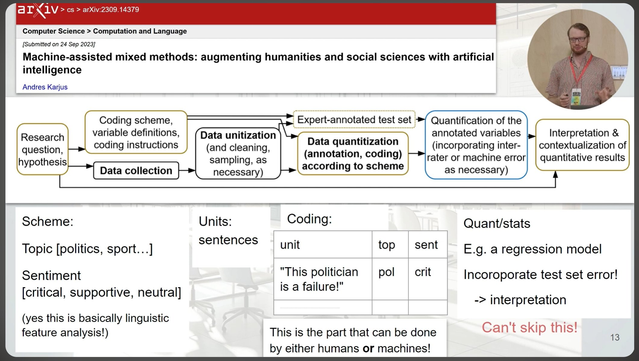

- quantitizing analytics framework

- assessing error rates

- using OpenAI APIs

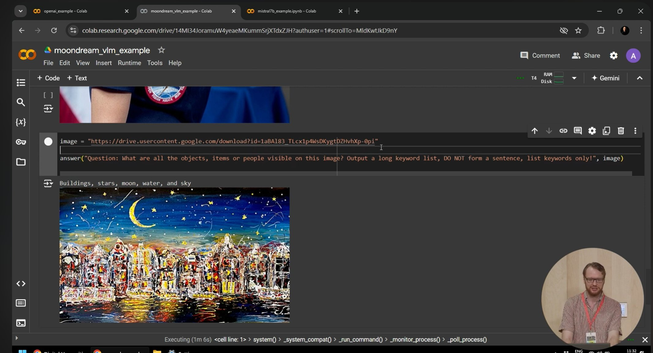

- running your open LLM in free Colab
