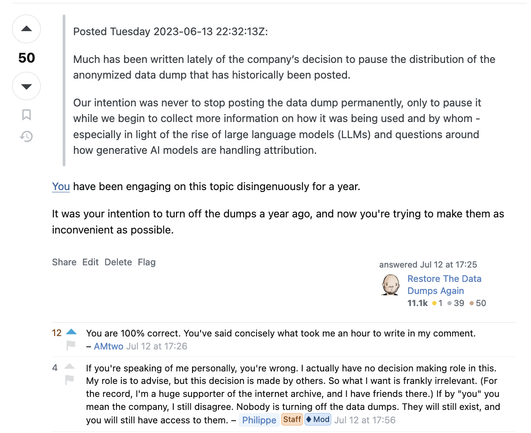

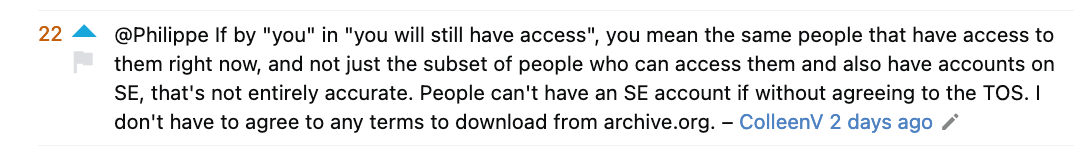

A tiny joy from the latest Stack Overflow footgunorama was when the mouthpiece chimed in to question what I meant by "you" (and stridently affirm his role as mouthpiece)...

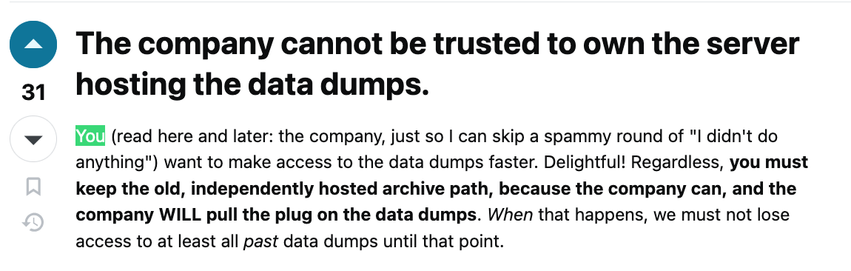

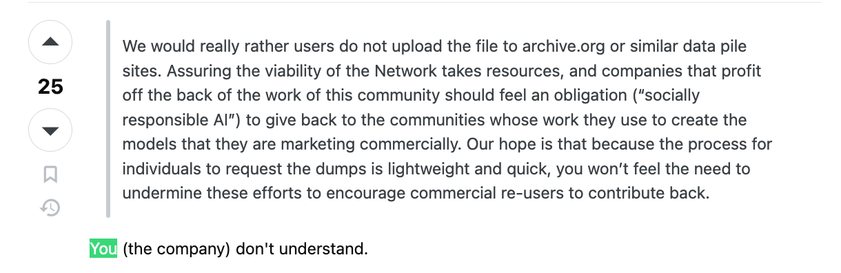

Stack Overflow needs to differentiate their product. So they're changing the public data dumps to be objectively worse. Step 1 is "Yeah, you can still download the same dump, you just have to do it in hundreds of separate pieces from hundreds of separate websites."

It's purely to make sure OpenAI and Google keep paying for the streamlined version of the dump.

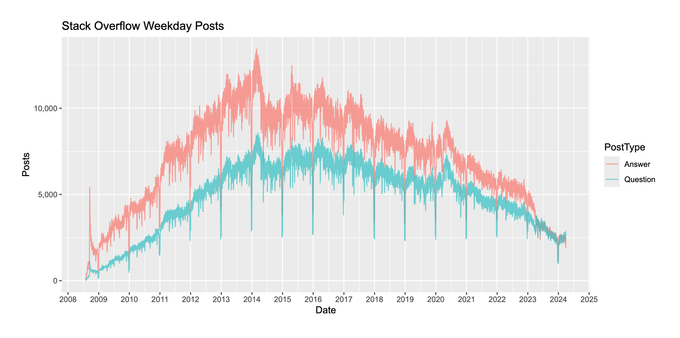

It won't work because Stack Overflow is dying (and this incident will accelerate it). As of the last data dump there were some 24 million questions. According to recent data from the API, they get about 2000 questions on a weekday and 1000 on a weekend day.

Right now the data dumps likely contain ~95% of all the data they will ever contain. There's no value in subscribing to such a data product long term.
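For anyone who wants to check the arithmetic, here's a rough back-of-envelope sketch in Python. It uses only the figures quoted above and counts questions only (answers and comments aren't included), and the constant names and the 52-week year are my own assumptions, not anything from the API.

```python
# Figures quoted in the post; everything derived from them is a rough estimate.
TOTAL_QUESTIONS = 24_000_000   # questions in the last data dump
WEEKDAY_RATE = 2_000           # new questions per weekday
WEEKEND_RATE = 1_000           # new questions per weekend day
WEEKS_PER_YEAR = 52            # assumption: ignore the extra day or two


def questions_per_week(weekday_rate, weekend_rate):
    """Five weekdays plus two weekend days."""
    return 5 * weekday_rate + 2 * weekend_rate


per_week = questions_per_week(WEEKDAY_RATE, WEEKEND_RATE)
per_year = per_week * WEEKS_PER_YEAR

# Fraction of the existing archive that one more year adds at today's rate.
growth = per_year / TOTAL_QUESTIONS

# Extra questions implied if the dump already holds 95% of the eventual total.
remaining_at_95 = TOTAL_QUESTIONS / 0.95 - TOTAL_QUESTIONS

print(f"new questions per week: {per_week:,}")
print(f"new questions per year: {per_year:,}")
print(f"yearly growth of the archive: {growth:.1%}")
print(f"questions left if 95% is already in: {remaining_at_95:,.0f}")
```

At today's rate a year of new questions adds about 2.6% to the archive. The ~95% guess leaves room for roughly 1.26 million more questions, which is about two years at the current pace, so it only holds if the rate keeps dropping.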

Aw, you made that! I owe you a thank you, then. I used that to whip myself up a simple interface for searching those dumps locally (works with all but the largest at the moment).

https://codeberg.org/Clew/MetaStack

(and here's a demo site) https://stack.clew.se/

Happy to!

The story was basically seeing your dumps and thinking they were so cool that I had to do a project with them. ;)