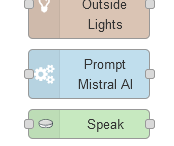

Man, I'm obsessed with integrating #MistralAI models into my #homeautomation and #homelab operations. Even set up a custom #node in #NodeRed to prompt the #AI. All my alerts are very personalized now and I love it. <3

@wagesj45 how do you run mistral? (mixtral??)

@Mawoka @kellogh Oh, it's still plenty fast. Just not realtime speaker-assistant level fast. It can take a long prompt and generate a response in as little as 30 seconds for an alarm with all my calendar events for the day, or up to a minute or two for a prompt with a few hundred sensors and their states.
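
For anyone wondering what a prompt like that could look like, here's a rough sketch of flattening calendar events and sensor states into one prompt string. Everything in it is an assumption for illustration: the function name `build_alert_prompt`, the sensor IDs, and the instruction wording are made up, it isn't the actual Node-RED node, and it stops short of calling the model.

```python
def build_alert_prompt(sensors, events):
    """Flatten calendar events and sensor states into a single prompt string.

    `sensors` maps a (hypothetical) sensor ID to its current state;
    `events` is a list of calendar entries as plain strings.
    """
    lines = [
        # Illustrative instruction text, not the wording used in the thread.
        "You are a friendly home assistant. Write a short, personal alert.",
        "Calendar events today:",
    ]
    if events:
        lines.extend(f"- {event}" for event in events)
    else:
        lines.append("- (none)")

    lines.append("Sensor states:")
    # Sort by ID so the prompt is stable between runs.
    for name in sorted(sensors):
        lines.append(f"- {name}: {sensors[name]}")

    return "\n".join(lines)


if __name__ == "__main__":
    example = build_alert_prompt(
        {"sensor.living_room_temp": "21.5", "binary_sensor.front_door": "off"},
        ["Dentist 10:00"],
    )
    print(example)
```

With a few hundred sensors the string gets long, which would fit with the longer generation times mentioned above.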