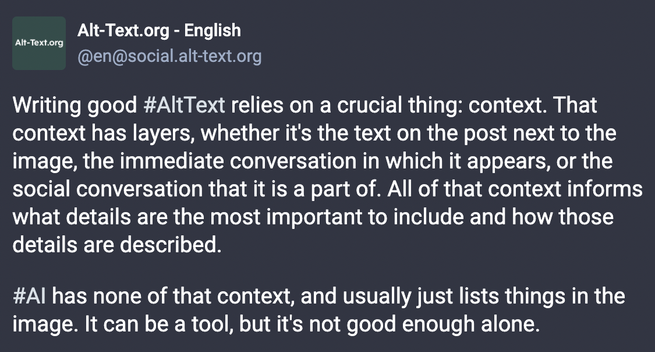

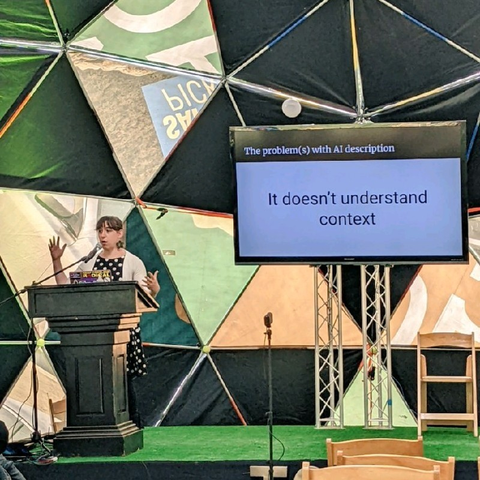

I built possibly the earliest same-tab tool for getting AI image descriptions in Aug 2022, using a then newly updated Microsoft AI API. I listened to Disabled folks about it. I've paid close attention to the subject since.

My criticisms of @mozilla's plans for Firefox's built-in AI alt text generation aren't because of knee-jerk AI hatred or some ignorance. They come from understanding this subject with a breadth and depth that is extremely rare, and I want acknowledgement of the major issues being ignored 🧵…

Boost? 💜