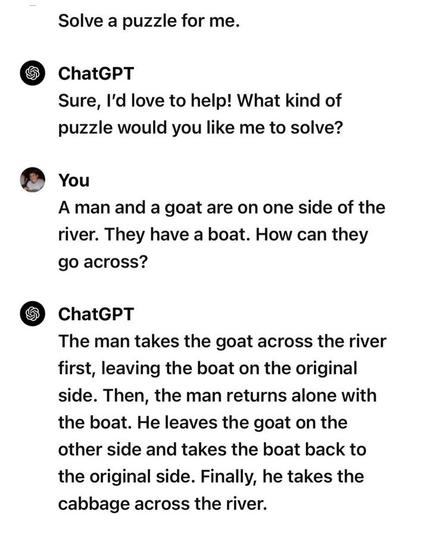

@devopscats Proof #467198245 that LLMs don't actually understand anything, they're just fancy phrase generators.

@arina @devopscats it's trained to produce statistically similar outputs to its training data. If they put more "anti-questions" in the training data it'd produce more appropriate answers. What does "understanding" even mean? Suppose I asked this question to someone who had never seen a boat or a goat: would they understand it? Most of us recognise the question as implying a rowboat, but few of us have ever rowed a boat, and I bet none of us have tried to row a boat with a goat. Anyways, I'm going to eat some green eggs and ham.

@quantumg @arina @devopscats this model is trained on more data than a human brain could ever possibly absorb within a human lifetime, and yet it still can't solve an incredibly simple logic puzzle. if your solution is to throw more data at the problem, which up to this point has clearly not worked, then you fundamentally misunderstand what this type of tool is useful for.

@spinach @arina @devopscats I don't know why you feel the need to dunk, but I suspect it's because you've been trained to behave that way. I'd argue that the average human being experiences an Internet's worth of data every few minutes. It's also in a social context which is constructed for us and evaluated by other people who are trained in the same context.