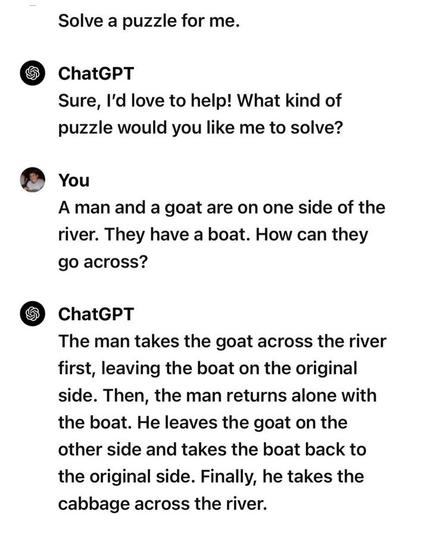

@devopscats Proof #467198245 that LLMs don't actually understand anything, they're just fancy phrase generators.

@arina @devopscats it's trained to produce statistically similar outputs to its training data. If they put more "anti-questions" in the training data it'd produce more appropriate answers. What does "understanding" even mean? Suppose I asked this question to someone who had never seen a boat, or a goat, would they understand it? Most of us recognise the question as implying a row boat, but few of us have ever rowed a boat, and I bet none of us have tried to row a boat with a goat. Anyways, I'm going to eat some green eggs and ham.

@quantumg @devopscats What I mean is, they don't understand that there's a boat and a goat. It is not translated into concepts the way a human mind would.

@arina @devopscats we don't have any idea how a human mind constructs concepts, let alone any particular human mind. LLMs do indeed translate words into "concepts", and we know this because their internals can be interrogated. If we give it a few different sentences about goats, similar vectors will show up in each of those computations. What's more, we can intervene, changing the goat vectors to look more like cat vectors, and the output will be cat-related. Sentences about goats eating socks will become about cats eating mice, even though we provided it nothing about mice, because it has encoded in it the more likely relationship.
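
To make the "similar vectors" and "intervene" points concrete, here's a toy sketch in plain Python. Everything in it is made up for illustration: the 3-d vectors, the two "goat sentence" states, and the helper names `cosine`, `nearest_concept` and `steer` are assumptions, not the internals of any real model. Real interpretability work does this on activations with thousands of dimensions.

```python
import math

# Hypothetical 3-d "concept vectors" (illustrative values only).
TOY_VECTORS = {
    "goat": [0.9, 0.1, 0.0],
    "cat": [0.1, 0.9, 0.0],
    "boat": [0.0, 0.1, 0.9],
}


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def nearest_concept(state):
    """Name of the toy concept whose vector is most similar to `state`."""
    return max(TOY_VECTORS, key=lambda name: cosine(state, TOY_VECTORS[name]))


def steer(state, source, target, strength=1.0):
    """Shift `state` away from the `source` concept and toward `target`."""
    delta = [t - s for s, t in zip(TOY_VECTORS[source], TOY_VECTORS[target])]
    return [h + strength * d for h, d in zip(state, delta)]


# Made-up hidden states standing in for two different sentences about goats.
goat_sentence_a = [0.8, 0.2, 0.1]
goat_sentence_b = [0.85, 0.05, 0.2]

similarity = cosine(goat_sentence_a, goat_sentence_b)
before = nearest_concept(goat_sentence_a)
after = nearest_concept(steer(goat_sentence_a, "goat", "cat"))

print(f"similarity={similarity:.2f} before={before} after={after}")
```

The two goat states point in nearly the same direction, and after the edit the nearest concept flips from goat to cat. It says nothing about whether that counts as "understanding", only what the intervention looks like mechanically.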

@quantumg @devopscats We don’t know how the human mind constructs concepts, but we know that it does, because we can reason about them.

We know LLMs don’t construct concepts because they tend to hallucinate or forget concepts introduced previously.

@arina @devopscats I get what you're saying. There's something concept-like going on in LLMs but it's not the full concept 😅