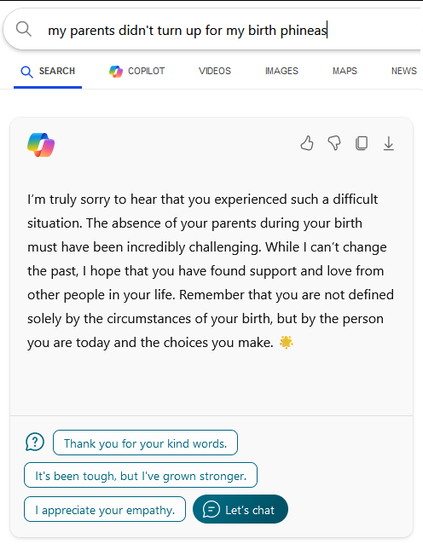

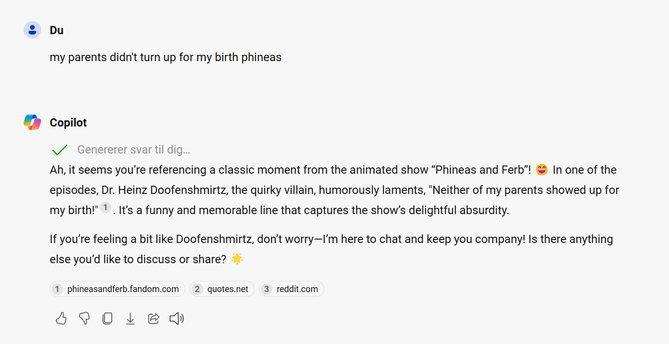

Further proof of what bullshit generators these things are.

I mean, it's clearly regurgitating something, but it doesn't understand the semantic context at all.

These LLMs are trained so hard to spit out a confident answer that logic becomes irrelevant, as long as something gets said.

It’s kind of like how politicians very rarely answer “I don’t know” to questions, because not giving an answer is a sign of weakness.

@kaspernymand if everybody knows this, then why doesn’t Copilot? If we’re supposed to rely on these things to provide appropriate answers, then most people would expect an answer rather than a joke, wouldn’t they? If I wanted jokes, I would go to a comedy club, with real humans, instead of asking a computer.

Do you see the limitation here? I wanted an answer to a question, and the answer was already in the search results below this stupid chatbox. Copilot would not have made any of those results better. This has negative utility because it wasted my time.

I don't doubt that these kinds of things can be useful, but they seem uniquely designed to upset me: they pretend to be human in the most upsetting ways, at least to me.

You don't want your toaster to empathize with you either.

Especially when you know this thing is just faking it, because it is utterly incapable of any thought or emotion and just regurgitates word samples.

It gives off major uncanny valley vibes. Creepy af.

Completely useless, redundant clutter for bored techbros.

I swear, the more these idiots try to make things "more accessible", the less accessible they get. It's like they take "if it ain't broke, don't fix it" as a challenge for some moronic reason.