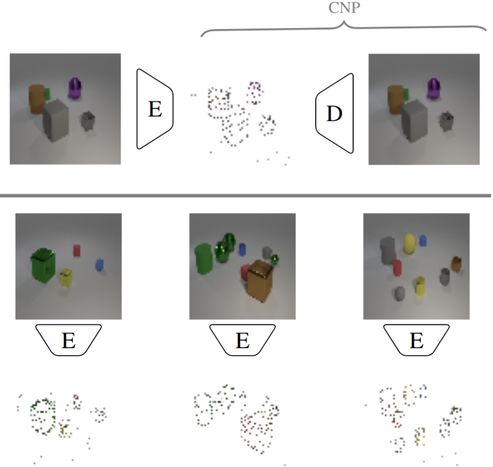

Interested in representation learning and Conditional Neural Processes (CNPs)?

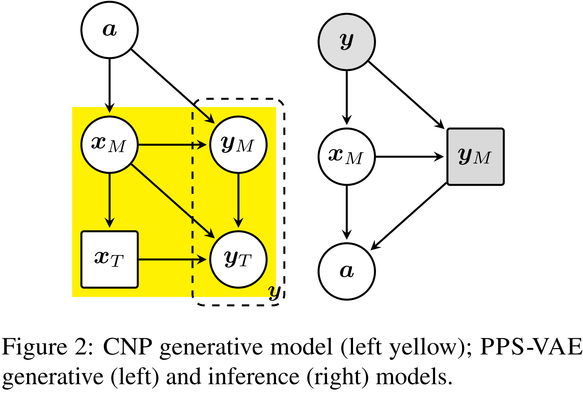

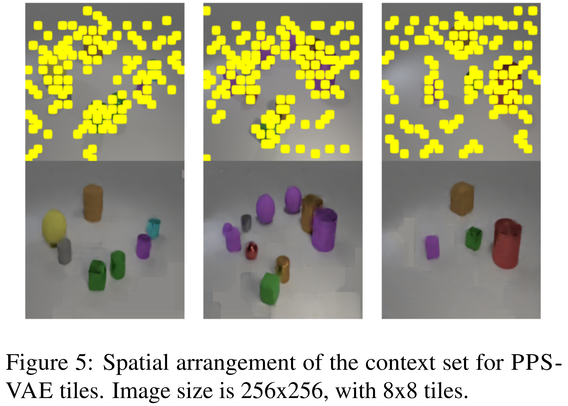

Together with Victor Prokhorov, Siddharth N and Ivan Titov we propose Pixel Space Variational Autoencoder (PPS-VAE), an amortised variational framework that casts CNPs context points as latent variables.