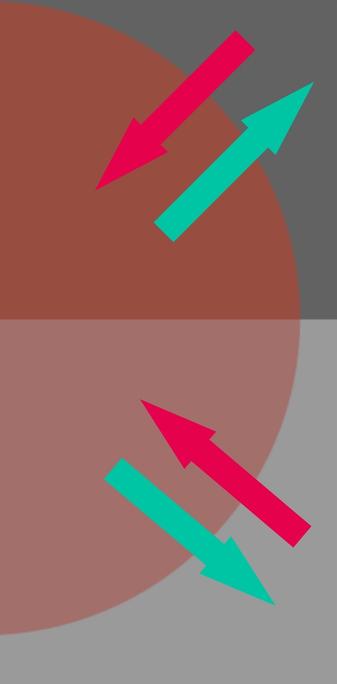

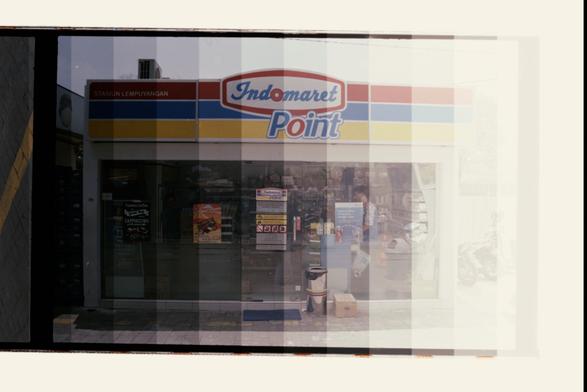

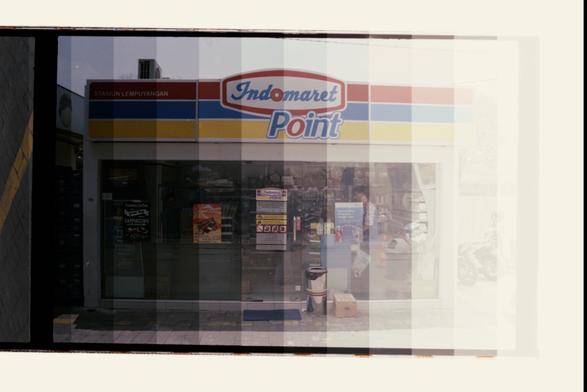

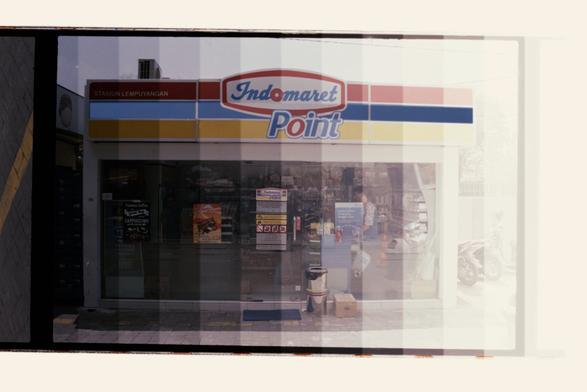

This still is 🤯 to me with respect to the “fingerprint” of spatiotemporal articulations and how we create information.

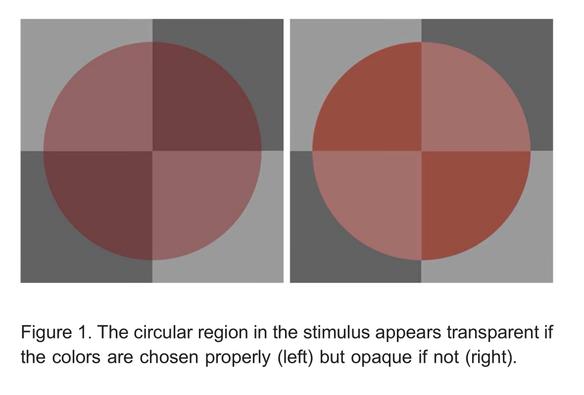

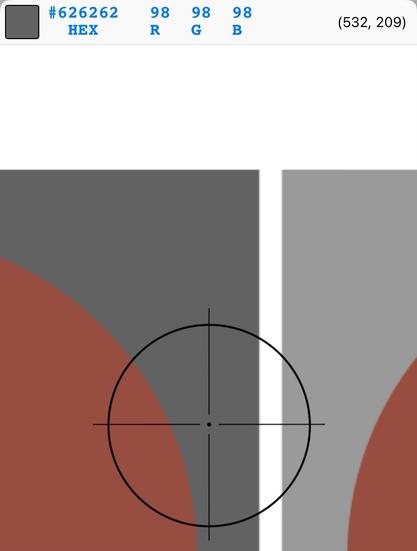

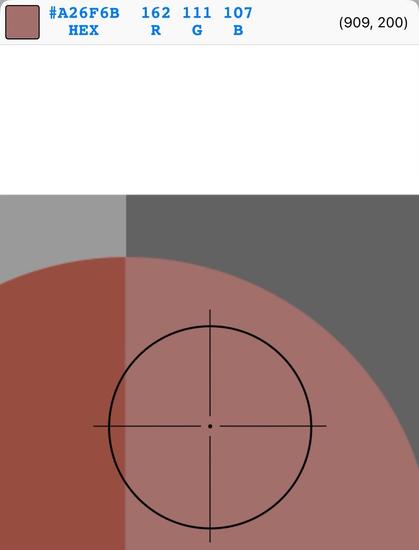

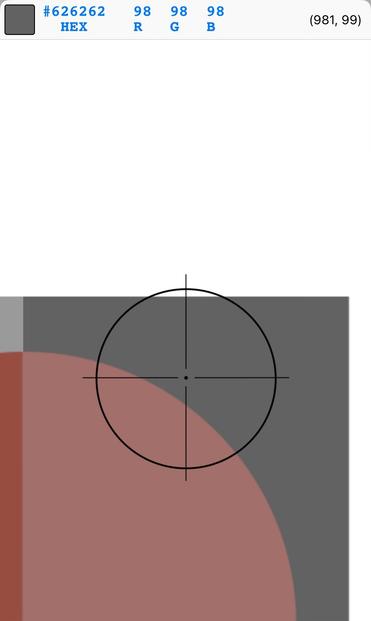

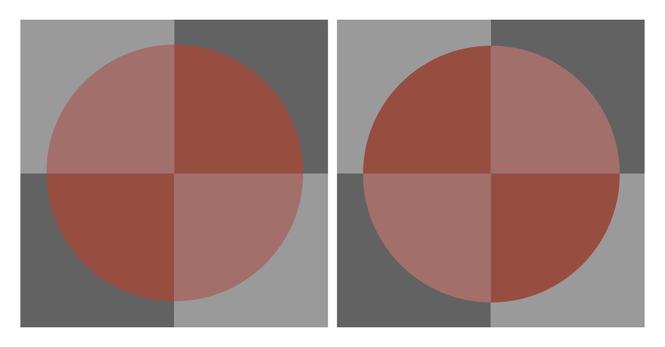

This is my riff on Ekroll and Faul’s work from 2011. Here, I chose to make the tristimuli **identical** in both depictions, with the sole difference being that the “disc” is rotated 90°.

The nature of the polarity along luminance vs chrominance triggers a strong probability of “transparency mode” in the first depiction, but a very low one in the second.
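For anyone who wants to poke at the "identical tristimuli, only the rotation differs" claim, here is a minimal sketch. It does not reproduce the images above: the layout (a bipartite background with a bipartite disc), the RGB triplets standing in for tristimulus values, and the helper names `make_stimulus` and `color_counts` are all assumptions made up for illustration.

```python
import numpy as np

def make_stimulus(size=64, radius=20, rotate_disc=False):
    """Return a (size, size, 3) image: bipartite background plus a bipartite disc."""
    # Hypothetical RGB triplets, chosen only for illustration.
    bg_left, bg_right = (200, 60, 60), (60, 60, 200)
    disc_a, disc_b = (150, 80, 80), (80, 80, 150)

    img = np.empty((size, size, 3), dtype=np.uint8)
    img[:, : size // 2] = bg_left
    img[:, size // 2 :] = bg_right

    # Full-size texture for the disc, split the same way as the background.
    disc = np.empty((size, size, 3), dtype=np.uint8)
    disc[:, : size // 2] = disc_a
    disc[:, size // 2 :] = disc_b
    if rotate_disc:
        disc = np.rot90(disc)  # rotate the disc texture by 90 degrees

    # Circular mask centred on the image; it is unchanged by a 90 degree turn.
    c = (size - 1) / 2
    yy, xx = np.mgrid[:size, :size]
    mask = (xx - c) ** 2 + (yy - c) ** 2 <= radius ** 2
    img[mask] = disc[mask]
    return img

def color_counts(img):
    """Map each distinct color triplet to the number of pixels that carry it."""
    colors, counts = np.unique(img.reshape(-1, 3), axis=0, return_counts=True)
    return {tuple(int(v) for v in c): int(n) for c, n in zip(colors, counts)}

aligned = make_stimulus(rotate_disc=False)
rotated = make_stimulus(rotate_disc=True)

# Same set of colors in the same pixel counts, but a different spatial arrangement.
print(color_counts(aligned) == color_counts(rotated))
print(np.array_equal(aligned, rotated))
```

In this toy setup the two images share the same four colors in the same proportions, so anything that differs perceptually between them would have to come from how those values are arranged, not from the values themselves.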